The Principle of Distinction in the Autonomous Age | Texas National Security Review

February 19, 2026

Why It Matters

Clarifying the legal and operational standards for autonomous weapons helps safeguard compliance with international law while preserving decisive military capability. Effective oversight models will shape future defense policy and industry investment in AI‑driven systems.

Key Takeaways

- Autonomous weapons raise legal distinction challenges.

- Wood urges focus on specific system realities.

- Human oversight must stay “in the loop.”

- Edwards stresses disciplined autonomy for accountability.

- Policy must embed oversight without sacrificing speed.

Pulse Analysis

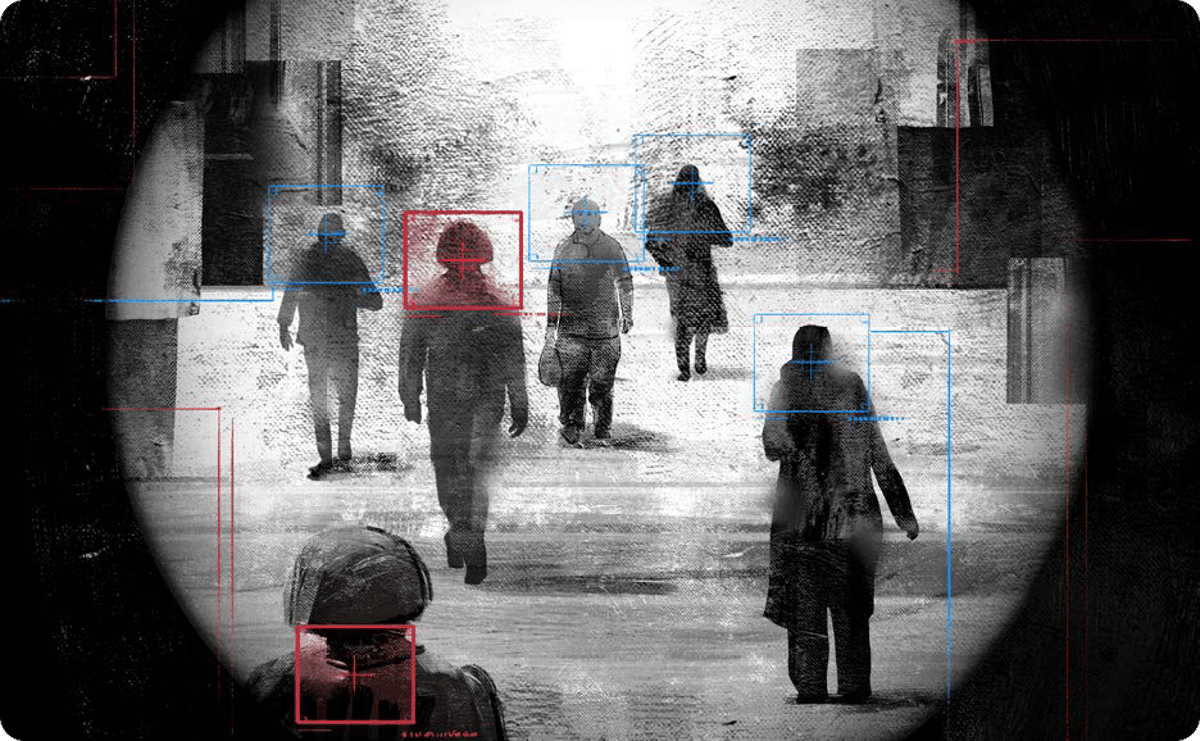

The principle of distinction, a cornerstone of the Law of Armed Conflict, requires parties to a conflict to distinguish combatants and military objectives from civilians and civilian objects. As autonomous platforms, ranging from swarming drones to AI‑guided missiles, enter the battlefield, traditional legal frameworks strain under new technical realities. Scholars and policymakers are debating whether existing rules can accommodate machines that make split‑second targeting decisions, and that question has prompted a surge of academic and doctrinal analysis aimed at preserving humanitarian protections.

In his recent podcast, Nathan Wood moves the conversation beyond theoretical objections, urging stakeholders to examine concrete system designs and operational doctrines. He stresses that human operators must retain meaningful control, effectively staying "in the loop" during lethal engagements. By focusing on specific capabilities, such as sensor fidelity, decision‑making thresholds, and fail‑safe mechanisms, Wood argues the U.S. military can harness autonomous advantages without relinquishing moral responsibility. This pragmatic lens reframes the debate from "should we use AI?" to "how do we use it safely and legally?"

Bill Edwards expands this perspective in his Small Wars Journal article, coining the term "disciplined autonomy" to describe a balanced approach where speed and machine assistance complement, rather than replace, human judgment. He advocates for institutional structures—clear chains of command, robust verification protocols, and continuous training—that embed accountability into the lifecycle of autonomous systems. For defense planners, these insights signal a shift toward policy that integrates AI while safeguarding the ethical core of warfare, influencing procurement strategies, legislative oversight, and international norm‑setting for the next generation of combat technology.
