Disaggregating LLM Inference: Inside the SambaNova Intel Heterogeneous Compute Blueprint

Key Takeaways

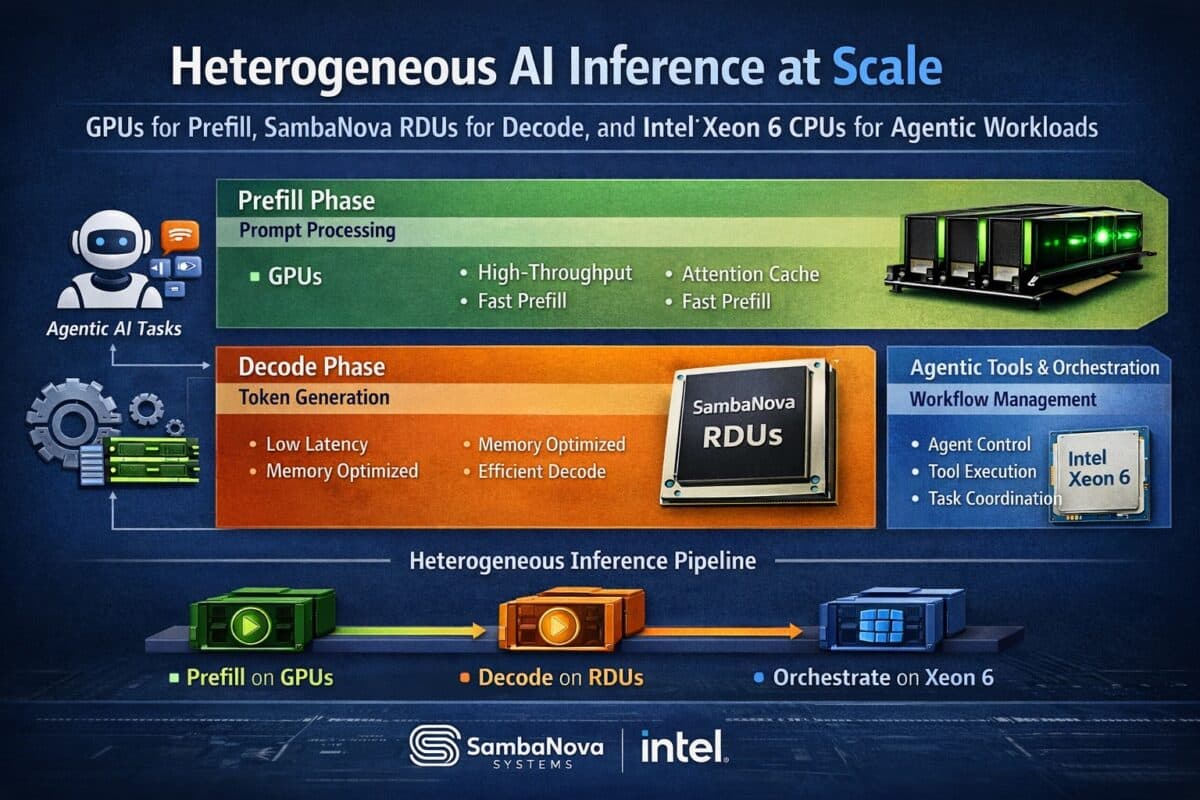

- •GPUs handle prefill, boosting first-token latency.

- •SambaNova RDUs accelerate decode, cutting token generation time.

- •Intel Xeon 6 CPUs manage agentic tool orchestration.

- •Heterogeneous design improves utilization and reduces GPU overprovisioning.

- •Modular scaling lets data centers adjust GPU, RDU, CPU resources.

Pulse Analysis

The rapid rise of large‑language‑model applications has exposed the limits of homogeneous accelerator clusters. Traditional deployments rely on GPUs for the entire inference pipeline, but the workload is split into a compute‑heavy prefill and a sequential, memory‑bandwidth‑bound decode. This mismatch leads to underutilized GPU cycles during token generation and higher latency for agents that must repeatedly call external tools. A disaggregated approach, where each phase runs on hardware optimized for its specific demands, addresses these inefficiencies and aligns with emerging composable‑infrastructure trends.

Intel’s blueprint leverages three distinct layers: GPUs for the dense matrix operations of prefill, SambaNova’s RDUs for the decode stage, and Xeon 6 CPUs for orchestration and tool execution. GPUs excel at parallel tensor math, delivering fast first‑token responses. RDUs, built for data‑flow execution, provide low‑latency access to attention caches, dramatically improving token‑per‑second rates in long‑context scenarios. Meanwhile, Xeon CPUs handle API calls, database queries, and workflow logic—tasks that require large memory footprints and mature software ecosystems. This separation ensures each processor operates within its optimal performance envelope, boosting overall system throughput.

For enterprises, the blueprint translates into tangible business value. By avoiding GPU overprovisioning for decode and orchestration, data centers can reduce capital expenditures and power consumption. The modular scaling model lets operators expand GPU, RDU, or CPU pools independently, matching investment to workload patterns. As agentic AI moves from experimental demos to production‑grade services, the ability to deliver responsive, cost‑effective inference will become a competitive differentiator. The Intel‑SambaNova partnership signals a broader industry shift toward heterogeneous, composable AI infrastructure that can keep pace with the evolving complexity of next‑generation AI workloads.

Disaggregating LLM Inference: Inside the SambaNova Intel Heterogeneous Compute Blueprint

Comments

Want to join the conversation?