NVIDIA’s Blackwell Ultra GB300 Sets New Standards in Long-Context Inference and Significantly Outperforms GB200

February 23, 2026

Why It Matters

The GB300's throughput and latency improvements enable more responsive, high-throughput AI services, which are crucial for next-gen agentic applications. Its adoption could reshape data-center economics, although its total cost of ownership is still unclear.

Key Takeaways

- GB300 achieves 226.2 tokens/sec throughput.

- Latency improves ~1.58× over GB200.

- Prefill-decode disaggregation reduces bottlenecks.

- Dynamic chunking optimizes large context processing.

- Total cost of ownership remains uncertain.

Pulse Analysis

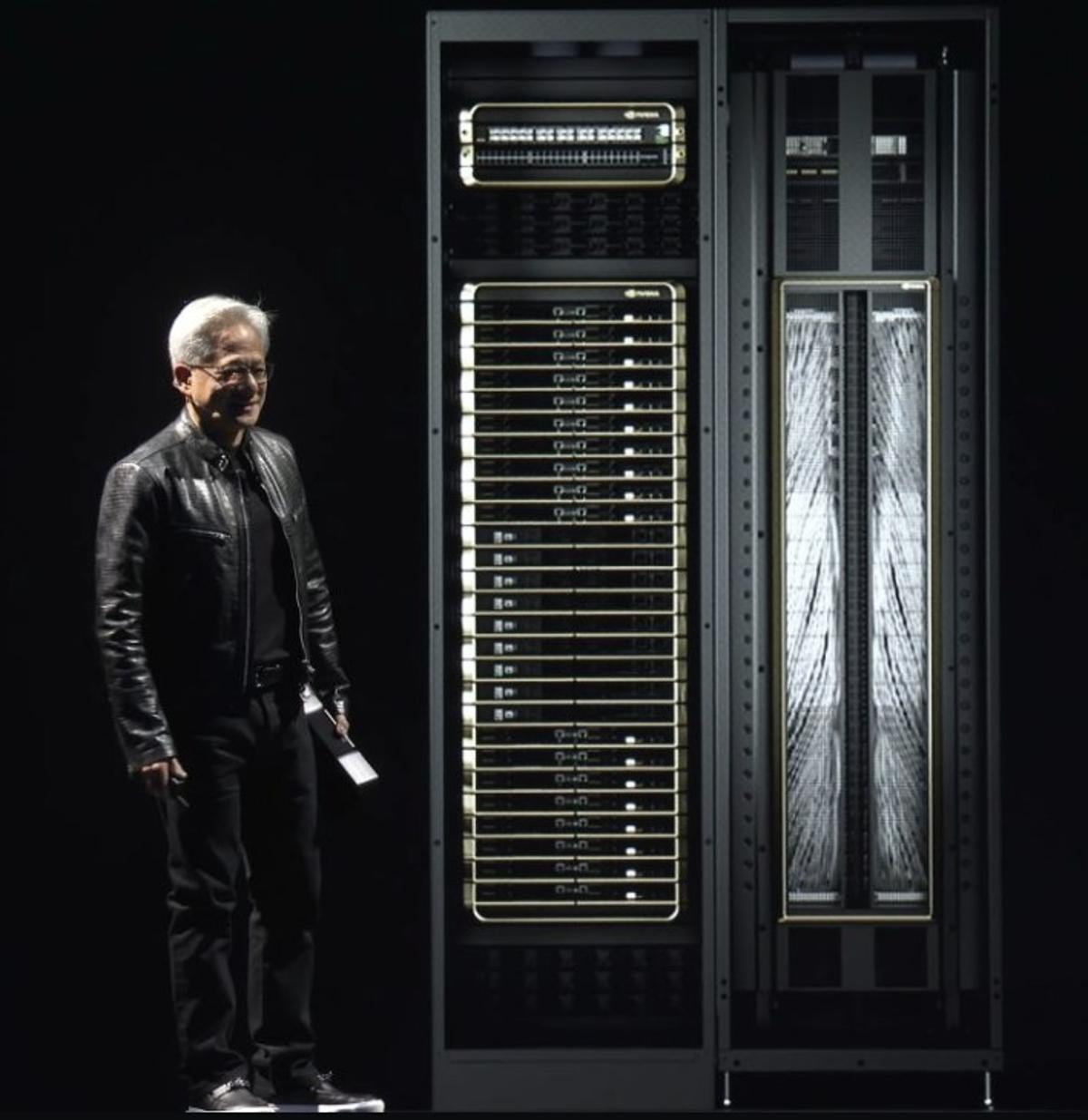

The AI inference market is rapidly shifting toward workloads that require processing massive context windows, such as autonomous agents and multi-step reasoning systems. Traditional GPU platforms struggle when token sequences stretch into the thousands, creating bottlenecks in memory bandwidth and cache management. NVIDIA's Blackwell Ultra generation, embodied by the GB300 NVL72 rack, directly addresses this gap by delivering a reported 1.5× performance boost over the GB200 and a record throughput of 226.2 tokens per second, positioning it as a premier solution for latency-sensitive, high-volume token generation.

Under the hood, the GB300 relies on a suite of software-hardware co-optimizations. Prefill-decode disaggregation separates prompt ingestion from token generation, allowing distinct hardware nodes to specialize and reducing contention during long-context processing. Dynamic chunking further slices prompts into manageable segments, while refined key-value cache handling reduces memory pressure. Combined with expanded VRAM capacity and higher memory bandwidth, these innovations translate into tangible gains: up to 1.58× lower latency than the GB200 and markedly higher per-GPU throughput, benefits that are especially pronounced in multi-token prediction scenarios.
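To make the two scheduling ideas concrete, here is a minimal toy sketch in Python. It is not NVIDIA's implementation: the function names, the per-step token budgets, and the list used as a stand-in for the key-value cache are all assumptions chosen for illustration. It shows a prefill stage that ingests a prompt in variably sized chunks and a separate decode stage that extends the resulting cache one token at a time.

```python
from itertools import cycle


def plan_chunks(prompt_len, step_budgets):
    """Split a prompt of prompt_len tokens into (start, end) chunks.

    step_budgets lists the hypothetical number of tokens the scheduler can
    afford on each prefill step, so chunk sizes vary from step to step.
    """
    budgets = list(step_budgets)
    if not budgets or min(budgets) <= 0:
        raise ValueError("step_budgets must contain positive integers")
    chunks, start = [], 0
    next_budget = cycle(budgets)
    while start < prompt_len:
        size = min(next(next_budget), prompt_len - start)
        chunks.append((start, start + size))
        start += size
    return chunks


def prefill(prompt_tokens, step_budgets):
    """Prefill stage: ingest the prompt chunk by chunk and build a cache."""
    kv_cache = []
    for lo, hi in plan_chunks(len(prompt_tokens), step_budgets):
        # A real engine would run attention over this chunk; the toy version
        # only records one cache entry per prompt token.
        kv_cache.extend(prompt_tokens[lo:hi])
    return kv_cache


def decode(kv_cache, max_new_tokens):
    """Decode stage: extend the handed-over cache one token at a time."""
    generated = []
    for _ in range(max_new_tokens):
        next_token = len(kv_cache)  # placeholder for a model forward pass
        kv_cache.append(next_token)
        generated.append(next_token)
    return generated


# Illustrative run with made-up sizes: a 5000-token prompt, then 4 new tokens.
prompt = list(range(5000))
cache = prefill(prompt, step_budgets=[2048, 1024, 512])
output = decode(cache, max_new_tokens=4)
print(plan_chunks(len(prompt), [2048, 1024, 512]))
print(output)
```

In a disaggregated deployment the `prefill` and `decode` functions would run on different nodes, with the cache transferred between them; here they run in one process only to keep the sketch self-contained.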

For hyperscalers and emerging neocloud providers, the GB300 promises to accelerate AI service delivery and improve queries‑per‑watt metrics, potentially offsetting its higher power density and rack complexity. However, the lack of transparent total‑cost‑of‑ownership data means enterprises must model operational expenses against the performance premium. If NVIDIA can demonstrate scalable efficiency at competitive TCO, the GB300 could become the de‑facto platform for the next wave of agentic AI, reinforcing NVIDIA’s dominance in the high‑end AI hardware segment.
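One simple way to frame that modeling exercise is cost per generated token. The sketch below is an assumption-laden illustration, not published pricing: the hourly cost and baseline throughput are hypothetical placeholders, and it assumes the 1.5× figure can be read as a throughput ratio at the workload of interest. Under those assumptions, cost per token stays flat or falls as long as the hourly cost premium does not exceed the throughput ratio.

```python
def cost_per_million_tokens(hourly_cost, tokens_per_second):
    """Cost of generating one million tokens at a steady throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return hourly_cost / (tokens_per_second * 3600) * 1_000_000


def relative_cost_per_token(cost_ratio, throughput_ratio):
    """New cost per token divided by old cost per token."""
    return cost_ratio / throughput_ratio


# Hypothetical placeholders, not vendor figures.
BASELINE_HOURLY_COST = 10.0         # currency units per hour
BASELINE_TOKENS_PER_SECOND = 100.0  # steady-state throughput
THROUGHPUT_RATIO = 1.5              # assumed from the article's 1.5x claim

baseline = cost_per_million_tokens(BASELINE_HOURLY_COST, BASELINE_TOKENS_PER_SECOND)
for cost_ratio in (1.2, 1.5, 1.8):
    upgraded = cost_per_million_tokens(
        BASELINE_HOURLY_COST * cost_ratio,
        BASELINE_TOKENS_PER_SECOND * THROUGHPUT_RATIO,
    )
    print(f"hourly cost x{cost_ratio}: {upgraded:.2f} vs baseline {baseline:.2f} per million tokens")
```

A fuller model would fold power, cooling, and rack integration into the hourly cost, which is exactly the data the article notes is not yet public.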
