CPU Requirements for AI Workloads Are Multiplying, Driving Intensifying Shortages and Price Hikes — Intel Already Shifting Production From Consumer Chips to Xeon as Inference Workloads Drive Server CPU Ratios Back Toward Parity with GPUs

Why It Matters

Tighter CPU‑to‑GPU ratios make CPUs a critical cost driver in AI infrastructure, reshaping vendor supply strategies and influencing overall AI deployment economics.

Key Takeaways

- •CPU-to-GPU ratio tightened from 1:8 to 1:4, may reach 1:1

- •Server CPU prices rose 10‑20% since March, lead times ~6 months

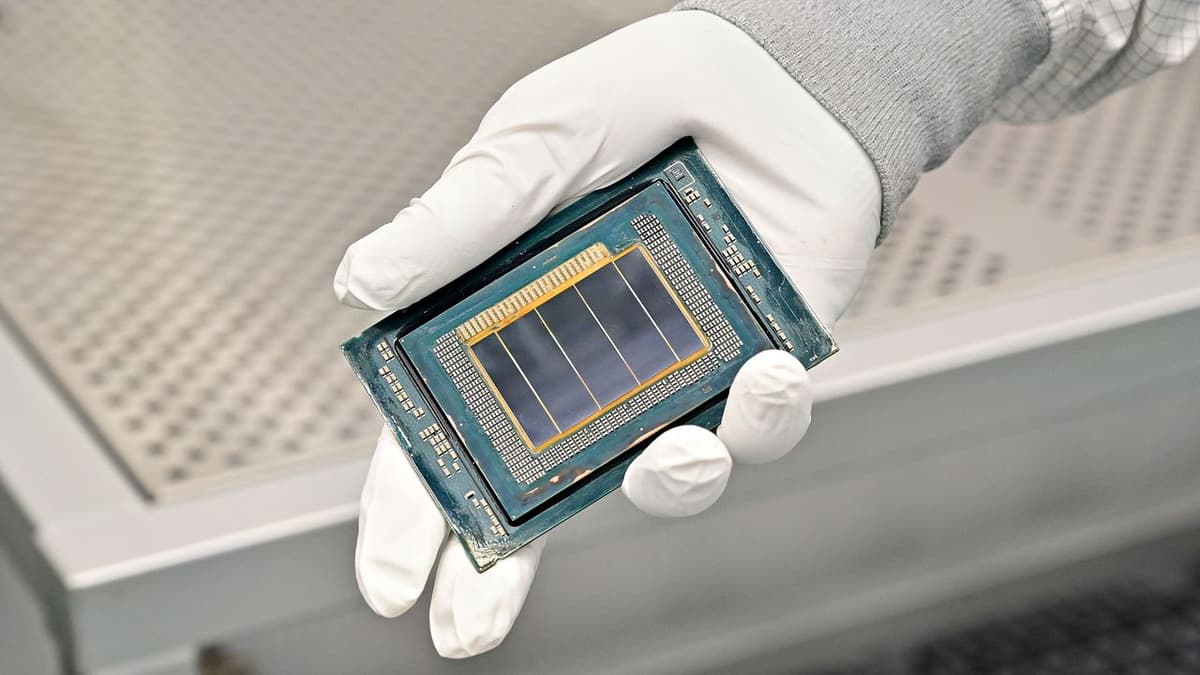

- •Intel shifted wafer capacity from consumer chips to Xeon data‑center CPUs

- •Q1 data‑center AI revenue hit $5.1 billion, up 22% YoY

- •Analysts forecast another 8‑10% server CPU price hike in H2 2026

Pulse Analysis

The AI landscape is moving beyond massive training clusters toward inference‑heavy deployments that power real‑time applications such as chatbots, recommendation engines, and autonomous agents. Unlike training, which can be GPU‑centric, inference workloads require frequent data movement, low‑latency orchestration, and higher per‑core performance, driving a surge in CPU demand. As a result, the traditional 1:8 CPU‑to‑GPU balance in server racks is collapsing toward 1:4 and could eventually reach 1:1, fundamentally altering the cost structure of AI infrastructure.
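To make the ratio shift concrete, the sketch below counts the CPUs needed to host a fixed number of GPUs at each of the three ratios mentioned above. The fleet size of 1,024 GPUs and the function name `cpus_needed` are illustrative assumptions, not figures from the article.

```python
import math


def cpus_needed(gpu_count: int, gpus_per_cpu: int) -> int:
    """Return the CPUs required for gpu_count GPUs at a 1:gpus_per_cpu CPU-to-GPU ratio."""
    if gpu_count < 0 or gpus_per_cpu <= 0:
        raise ValueError("gpu_count must be >= 0 and gpus_per_cpu must be > 0")
    # Round up: a partially filled host still needs a whole CPU.
    return math.ceil(gpu_count / gpus_per_cpu)


# Hypothetical fleet size, chosen only for illustration.
FLEET_GPUS = 1024

for gpus_per_cpu in (8, 4, 1):
    count = cpus_needed(FLEET_GPUS, gpus_per_cpu)
    print(f"1:{gpus_per_cpu} ratio -> {count} CPUs for {FLEET_GPUS} GPUs")
```

For the same GPU fleet, moving from 1:8 to 1:4 doubles the CPU count, and moving to 1:1 multiplies it by eight.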

Supply chains are feeling the strain. Intel, which makes the Xeon line, has reallocated wafer capacity from consumer‑grade CPUs to data‑center chips. Server CPU average selling prices have already risen by up to 20% and order lead times have stretched to about six months, while the reduced consumer supply has pushed consumer CPU prices up 5‑10%. The price pressure is feeding through to cloud providers and enterprises, prompting them to reassess their AI budgets and explore alternative architectures or multi‑vendor strategies.

Looking ahead, the tightening CPU‑to‑GPU ratio could accelerate competition among silicon vendors. AMD’s EPYC line and emerging ARM‑based data‑center solutions may gain traction if they can offer comparable performance at a lower price. Meanwhile, Intel’s focus on higher‑core‑count Xeon offerings and potential price adjustments aims to stabilize supply, but analysts warn of continued volatility through the second half of 2026. Companies planning AI expansions should monitor capacity forecasts, diversify hardware sourcing, and factor in rising CPU costs when modeling total cost of ownership.
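When folding these price moves into a cost model, successive hikes compound rather than add. The sketch below combines the 10‑20% rise since March with the forecast 8‑10% hike for H2 2026, assuming the second hike applies on top of the already‑raised prices (the article does not state the baseline). The helper name `cumulative_increase` is hypothetical.

```python
def cumulative_increase(*hikes: float) -> float:
    """Compound successive fractional price hikes into one overall fractional increase."""
    factor = 1.0
    for hike in hikes:
        factor *= 1.0 + hike
    return factor - 1.0


# Assumption: the H2 2026 hike stacks on prices that already rose since March.
low = cumulative_increase(0.10, 0.08)   # low end of both ranges
high = cumulative_increase(0.20, 0.10)  # high end of both ranges

print(f"Cumulative server CPU price increase: {low:.1%} to {high:.1%}")
```

Under that assumption, the combined increase comes to roughly 19% at the low end and 32% at the high end, somewhat more than adding the percentages would suggest.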
