Visual Intelligence Powering Next-Generation Robotics

Why It Matters

By embedding metrology‑level perception into robots, manufacturers gain flexibility, reduce scrap and accelerate time‑to‑market for complex, low‑volume products. The shift also creates new value streams around data‑driven quality and adaptive automation.

Key Takeaways

- Vision now provides sub‑millimeter measurement for robots

- 3D imaging enables real‑time geometry verification

- AI-driven vision detects complex defects and learns over time

- Inline vision controls processes, reducing scrap rates

- Collaborative robots use vision for safety and dynamic workspaces

Pulse Analysis

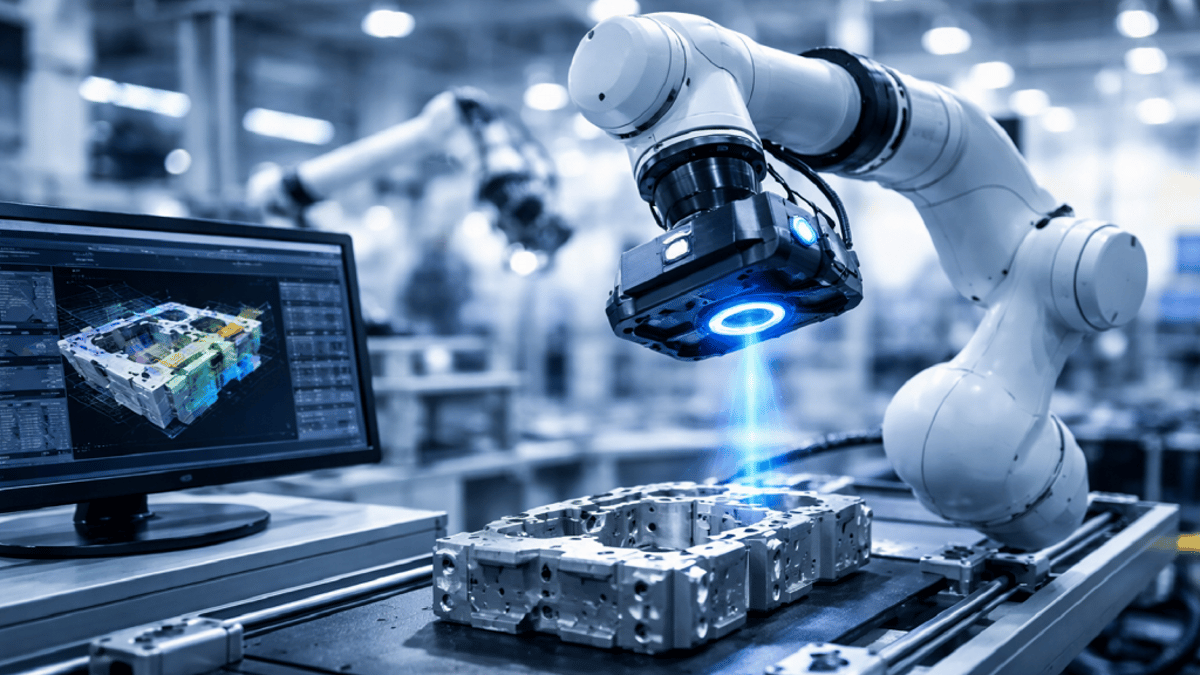

The rise of machine vision in robotics reflects a broader convergence of perception and action. Modern cameras deliver megapixel detail, while structured‑light and time‑of‑flight sensors generate dense 3D point clouds. Coupled with edge AI, these data streams support defect classification and sub‑millimeter positioning that rivals traditional coordinate‑measuring machines. As a result, robots are no longer confined to pre‑programmed motions; they continuously measure, adjust and execute, delivering metrology‑grade accuracy on the shop floor.
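To make the idea of geometry verification from a point cloud concrete, here is a minimal sketch in plain Python. It assumes the sensor has already produced a small list of (x, y, z) points in millimeters on a nominally flat surface; the function names (`fit_plane`, `flatness`, `within_tolerance`) and the tolerance value are hypothetical and do not come from any particular vision library. The flatness figure is a simplified least-squares estimate, not a certified metrology evaluation.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points: List[Point]) -> Tuple[float, float, float]:
    """Least-squares fit of z = a*x + b*y + c using the normal equations."""
    n = len(points)
    if n < 3:
        raise ValueError("need at least three points")
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    lhs = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, float(n)]]
    rhs = [sxz, syz, sz]
    d = _det3(lhs)
    if abs(d) < 1e-12:
        raise ValueError("points are degenerate (for example, collinear)")
    # Cramer's rule: replace one column at a time with the right-hand side.
    coeffs = []
    for col in range(3):
        m = [row[:] for row in lhs]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(_det3(m) / d)
    return coeffs[0], coeffs[1], coeffs[2]


def flatness(points: List[Point]) -> float:
    """Spread of perpendicular distances from the fitted plane (same units as input)."""
    a, b, c = fit_plane(points)
    norm = math.sqrt(a * a + b * b + 1.0)
    dists = [(z - (a * x + b * y + c)) / norm for x, y, z in points]
    return max(dists) - min(dists)


def within_tolerance(points: List[Point], tol_mm: float) -> bool:
    """True if the estimated flatness does not exceed the given tolerance."""
    return flatness(points) <= tol_mm
```

A robot cell could run a check like `within_tolerance(scan, 0.05)` after each scan and flag or rework the part when it fails. Real deployments would also need outlier rejection and a calibrated sensor, which this sketch leaves out.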

Flexibility is the new competitive edge in manufacturing, especially for high‑mix, low‑volume production. Vision‑guided robots can locate randomly oriented parts, re‑orient them on conveyors, and adapt tool paths on the fly, eliminating costly fixturing. Industries such as electric‑vehicle battery assembly, aerospace composite fabrication, miniaturized electronics and precision medical devices are leveraging this adaptability to shorten changeover times and maintain tight tolerances despite part variability. Integrated with digital twins and manufacturing execution systems, vision data becomes a live thread that aligns design intent with actual production outcomes.
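One way to picture how a vision-guided robot adapts a tool path to a randomly oriented part is the sketch below. It assumes a 2D case (a part lying flat on a conveyor) and assumes the vision system has already matched a few reference features on the part model to their observed positions in the robot frame. The function names `estimate_pose_2d` and `transform_path` are hypothetical, and the closed-form least-squares fit shown here is a common textbook approach, not necessarily what any given vendor uses.

```python
import math
from typing import List, Tuple

Point2D = Tuple[float, float]


def estimate_pose_2d(model: List[Point2D],
                     observed: List[Point2D]) -> Tuple[float, float, float]:
    """Least-squares rotation (radians) and translation mapping model -> observed.

    Points must be given in matching order.
    """
    if len(model) != len(observed) or len(model) < 2:
        raise ValueError("need at least two matched point pairs")
    n = len(model)
    mcx = sum(p[0] for p in model) / n
    mcy = sum(p[1] for p in model) / n
    ocx = sum(p[0] for p in observed) / n
    ocy = sum(p[1] for p in observed) / n
    s_cos = 0.0
    s_sin = 0.0
    for (mx, my), (ox, oy) in zip(model, observed):
        ax, ay = mx - mcx, my - mcy   # centered model point
        bx, by = ox - ocx, oy - ocy   # centered observed point
        s_cos += ax * bx + ay * by    # accumulates |a|^2 * cos(theta)
        s_sin += ax * by - ay * bx    # accumulates |a|^2 * sin(theta)
    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that carries the rotated model centroid onto the observed centroid.
    tx = ocx - (c * mcx - s * mcy)
    ty = ocy - (s * mcx + c * mcy)
    return theta, tx, ty


def transform_path(path: List[Point2D], theta: float,
                   tx: float, ty: float) -> List[Point2D]:
    """Apply the rigid transform to every waypoint of a nominal tool path."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in path]
```

In use, the nominal tool path is defined once in the part's own coordinates, and each time a part arrives the estimated pose is applied with `transform_path` before the robot moves. This is what lets the cell do without a fixture that forces every part into the same position.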

Despite its promise, deploying vision‑centric robotics faces practical hurdles. Variable lighting and reflective surfaces degrade image quality, high‑resolution data streams strain edge processors, and calibrating multi‑sensor arrays to traceable standards remains complex. Moreover, the workforce must blend optics, AI, data science and robotics expertise, a skill set that has traditionally been siloed. Looking ahead, tighter fusion of vision with force and tactile sensing, self‑calibrating algorithms and globally shared AI models will further dissolve the boundaries between robotics, vision and metrology, cementing visual intelligence as the primary sense for next‑generation automation.
