Video Premiere: Beyond This Point Performs “TacocaT” By Julie Zhu

March 10, 2026


Key Takeaways

- TacocaT blends GPT-2 generated text with live percussion

- Julie Zhu's Deep Drawing lab explores AI-driven sound creation

- Beyond This Point commissions experimental AI‑music collaborations

- Performance uses contact mics and Gaussian processes for texture

- Upcoming Routledge book examines musicians building their own AI tools

Summary

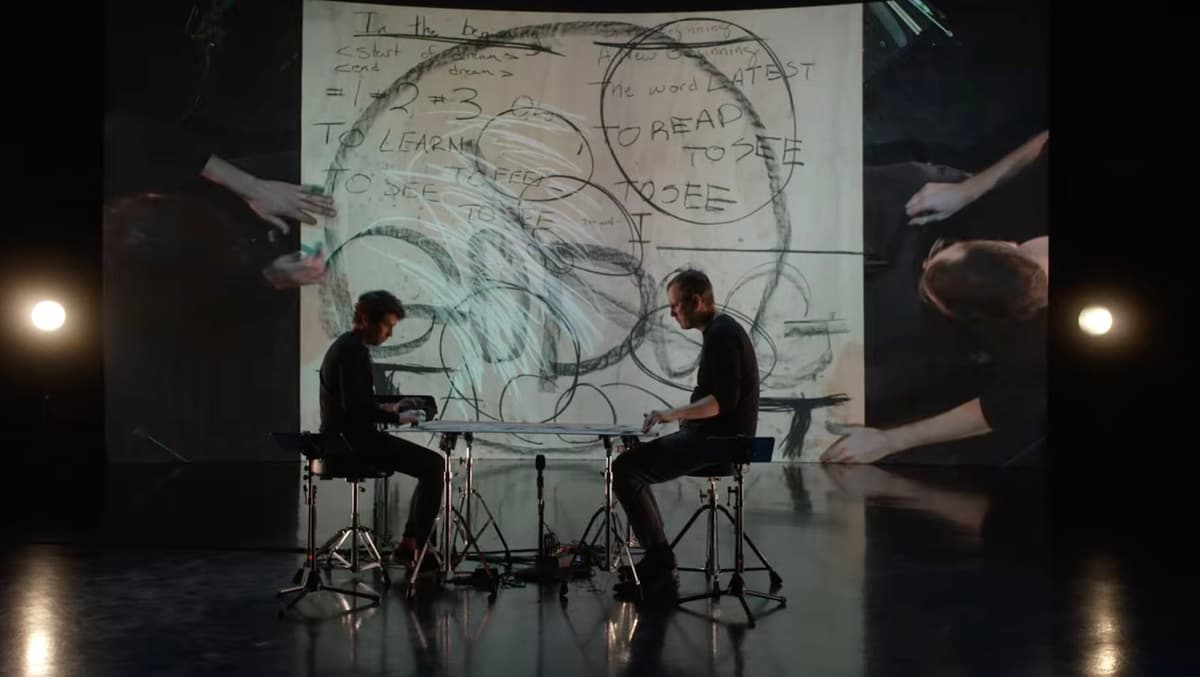

Julie Zhu, composer and head of the Deep Drawing AI lab, premiered her 22‑minute piece “TacocaT” at Chicago’s Frequency Fest in February 2025. The work was commissioned by the experimental ensemble Beyond This Point. It combines text generated by a GPT‑2 model fine‑tuned on Abbott’s *Flatland* with live percussion, board sounds captured by contact microphones, and Gaussian processes running in Max. Performers Adam Rosenblatt and John Corkill manipulate writing, tapping, and electronic reverberation to create a kinetic dialogue between human gesture and machine learning. Zhu’s interdisciplinary practice continues to push the boundaries of AI‑augmented music composition.

Pulse Analysis

The integration of artificial intelligence into music composition is no longer a speculative curiosity; it is an evolving practice that challenges traditional notions of authorship. Julie Zhu’s career exemplifies this shift, as she leverages machine learning models not merely as tools but as co‑creators. By fine‑tuning a GPT‑2 model on the 19th‑century novella *Flatland*, she generates text that feeds directly into the sonic fabric of *TacocaT*, blurring the line between literary source material and auditory output. This approach reflects a broader trend where composers treat data sets and algorithms as compositional instruments, expanding the palette of sounds available to contemporary creators.

In *TacocaT*, the technical architecture is as much a part of the performance as the musicians themselves. Contact microphones capture the raw timbre of a plywood board being written upon, feeding the signal into a Max patch that applies Gaussian processes to sculpt texture and diffusion. The resulting soundscape reacts in real time to the percussive gestures of Adam Rosenblatt and John Corkill, creating a feedback loop where human rhythm shapes, and is shaped by, algorithmic processing. Such immersive, sensor‑driven setups illustrate how live electronics can become an interactive medium, allowing performers to manipulate AI‑generated material on the fly.

Beyond the artistic novelty, the piece underscores significant industry implications. Academic institutions like the University of Michigan are embedding AI into composition curricula, while ensembles such as Beyond This Point provide platforms for experimental works to reach broader audiences. Zhu’s forthcoming Routledge volume, co‑edited with Constantin Basica, promises to codify best practices for musicians building their own AI tools, potentially democratizing access to sophisticated generative technologies. As AI continues to permeate creative workflows, projects like *TacocaT* serve as both proof of concept and a catalyst for future collaborations between technologists, composers, and performance ensembles.
