Roadside Radar Sensors Could Reduce Blind Spots in AV Operations

March 3, 2026


Why It Matters

Mounting radar sensors along roadways fills perception gaps that vehicle-mounted sensors leave. This could make AVs more reliable and speed regulatory acceptance. The technology could also give municipalities a new revenue stream through sensor-as-a-service models.

Key Takeaways

- Roadside radars extend AV perception beyond vehicle-mounted sensors

- Low-power millimeter-wave design enables dense urban deployment

- A Luneburg lens eliminates heavy computation for angle estimation

- Integrated sensing and communication reduces network traffic and latency

- Faster direction estimation could improve safety in adverse weather

Pulse Analysis

The push toward fully autonomous fleets has exposed a fundamental limitation: vehicle-mounted sensors can see only what lies within their own line of sight. Cameras and lidar falter in rain, fog, and low light, while conventional radar suffers from signal scattering that leaves blind spots at intersections and behind large obstacles. By moving part of the sensing workload onto the roadway itself, operators can build a distributed perception network that continuously feeds high-resolution data to any equipped vehicle. This could substantially reduce the risk of missed hazards.

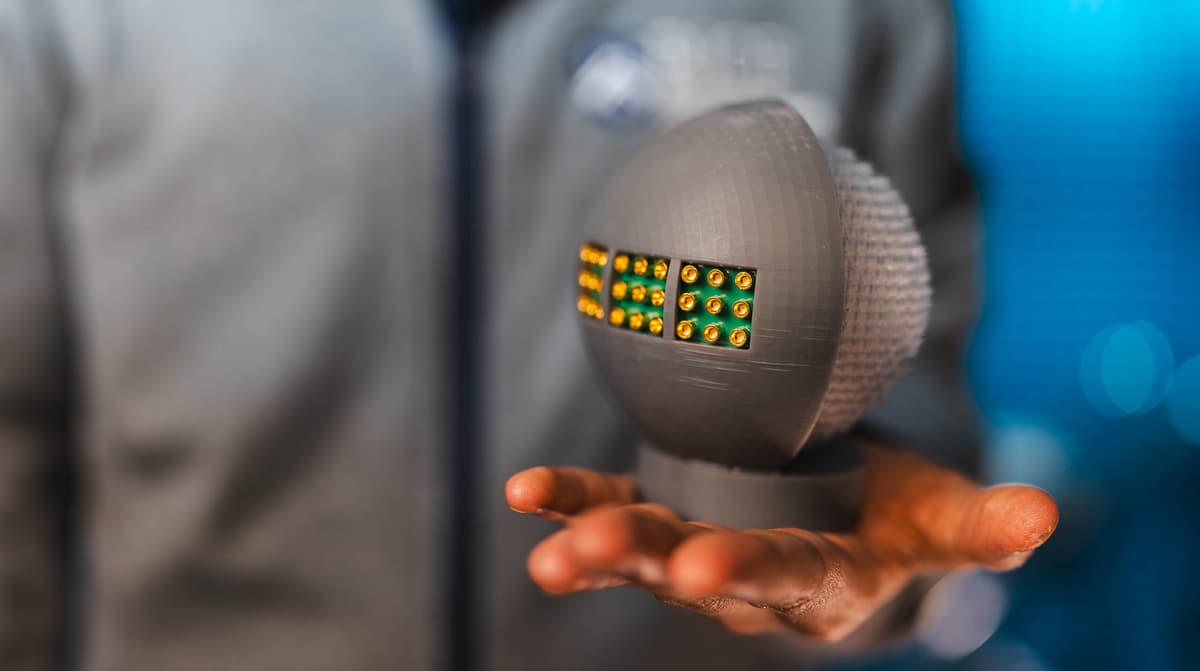

Eyedar's innovation lies in its physical-layer design. A 3D-printed Luneburg lens, built from more than 8,000 elements that together form a gradient refractive index, focuses incoming millimeter-wave energy onto a compact antenna array that works much like a retina. A Luneburg lens brings a wave arriving from each direction to a focus at a distinct point on its far surface. As a result, the antenna element that receives the strongest signal directly indicates the direction of arrival. This architecture sidesteps the computationally intensive angle-finding algorithms of conventional radars. It delivers direction estimates up to 200 times faster while consuming minimal power. The device can also alternate between absorbing and reflecting incoming radar pulses. That lets it encode information in its reflections without generating emissions of its own, merging sensing and communication into a single low-cost node.
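The article does not describe Eyedar's processing pipeline. The following Python sketch is only a toy model of the two ideas above. It assumes a hypothetical node with 9 receive elements covering a 120-degree field of view and a linear element-to-angle calibration. It also assumes simple on-off keying for the absorb/reflect scheme. All names and numbers (`estimate_angle`, `backscatter_states`, `NUM_ELEMENTS`, and so on) are illustrative. The point it shows is cost: once the lens has done the focusing, the direction estimate reduces to a single pass over N power readings rather than a search over candidate angles.

```python
# Toy model of a lens-based radar node. Every parameter here is hypothetical.
NUM_ELEMENTS = 9     # assumed number of receive elements on the focal arc
FOV_DEG = 120.0      # assumed field of view, -60 to +60 degrees


def element_to_angle(index: int) -> float:
    """Arrival angle (degrees) that the lens focuses onto element `index`.

    A Luneburg lens maps each arrival direction to a fixed focal point, so
    this is just a calibration lookup; here it is assumed to be linear.
    """
    step = FOV_DEG / (NUM_ELEMENTS - 1)
    return -FOV_DEG / 2 + index * step


def estimate_angle(powers: list[float]) -> float:
    """Direction estimate: the angle of the element with the strongest return.

    One pass over N readings; no search over candidate steering angles.
    """
    if len(powers) != NUM_ELEMENTS:
        raise ValueError("expected one power reading per element")
    brightest = max(range(NUM_ELEMENTS), key=lambda i: powers[i])
    return element_to_angle(brightest)


def backscatter_states(bits: str) -> list[str]:
    """Assumed on-off keying: reflect a radar pulse for 1, absorb it for 0."""
    if set(bits) - {"0", "1"}:
        raise ValueError("bits must contain only '0' and '1'")
    return ["reflect" if b == "1" else "absorb" for b in bits]


def decode_echoes(amplitudes: list[float], threshold: float) -> str:
    """Radar side: a strong echo reads as 1, a weak (absorbed) one as 0."""
    return "".join("1" if a > threshold else "0" for a in amplitudes)


if __name__ == "__main__":
    readings = [0.1, 0.2, 0.3, 0.9, 0.4, 0.2, 0.1, 0.0, 0.0]
    print(estimate_angle(readings))                        # -15.0
    print(backscatter_states("101"))                       # ['reflect', 'absorb', 'reflect']
    print(decode_echoes([0.8, 0.1, 0.7], threshold=0.5))   # 101
```

In this example, readings that peak at the fourth element (index 3) map to -15 degrees. The bit string "101" becomes reflect, absorb, reflect, which the radar recovers by thresholding echo strength. A real node would presumably rely on a measured calibration table rather than the linear mapping assumed here.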

The implications extend beyond autonomous cars. Municipalities could deploy these sensors on streetlights, traffic signals, and even bike lanes, creating a city-wide safety mesh that also serves drones, delivery robots, and wearable devices. Such a network could provide real-time situational awareness that holds up in poor weather. It could also open new infrastructure-as-a-service business models and accelerate the rollout of Level 4 and Level 5 autonomy in dense urban corridors. As standards evolve, roadside radar could become a cornerstone of the smart-city ecosystem, aligning transportation safety with broader IoT initiatives.
