Why It Matters

High‑precision tool calling unlocks modular AI ecosystems, enabling businesses to combine best‑in‑class specialist agents without monolithic models, accelerating innovation and reducing operational risk.

Key Takeaways

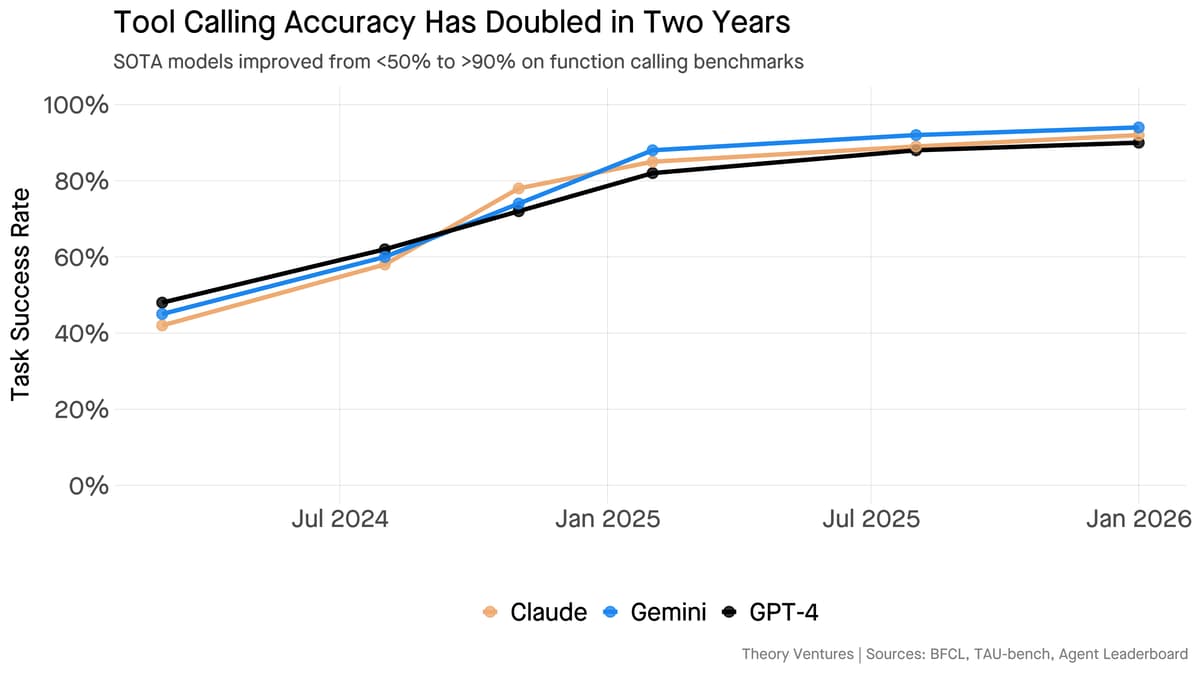

- Tool-calling accuracy now exceeds 90% on benchmarks

- Trillion-parameter models still outperform smaller action models

- Distillation creates 40% smaller, 60% faster models

- Frontier models orchestrate specialist agents across vendors

- Startups can thrive building niche tool-specific agents

Pulse Analysis

The breakthrough in tool‑calling reliability marks a turning point for enterprise AI adoption. Benchmarks such as the Berkeley Function Calling Leaderboard now report over 90% success, reflecting not just better training data but also refined prompting strategies and tighter integration with APIs. This reliability reduces the friction that previously forced developers to embed all capabilities within a single monolithic model, opening the door for more flexible, plug‑and‑play architectures.

While the accuracy gains stem from massive, trillion‑parameter models, economic realities are driving a parallel push toward efficiency. Recent distillation techniques shrink model footprints by roughly 40% and speed up inference by about 60% while preserving up to 97% of the original performance. This balance lets organizations deploy powerful orchestrators in production without prohibitive compute costs, while still drawing on the deep world knowledge that only large‑scale pre‑training can provide.

The new ecosystem resembles a constellation of specialist agents, each excelling in a narrow domain such as search, retrieval, business intelligence, or web interaction. A frontier model acts as the executive, dynamically routing each request to the most suitable specialist, regardless of vendor. This modularity creates fertile ground for startups that focus on niche tool agents rather than competing on raw model size. Investors and enterprises alike are beginning to value these plug‑in capabilities, reshaping the AI market toward a layered, interoperable future.
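To make the executive-and-specialists pattern concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not any vendor's actual API: `Specialist`, `Orchestrator`, `keyword_classifier`, the domain labels, and the vendor names are all hypothetical, and the keyword matcher merely stands in for the routing decision a frontier model would make.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Specialist:
    """A narrow-domain agent. Every field here is illustrative."""
    name: str
    vendor: str
    handle: Callable[[str], str]  # takes a request, returns a response


class Orchestrator:
    """Routes each request to the specialist registered for its domain."""

    def __init__(self, classify: Callable[[str], str]) -> None:
        # `classify` stands in for the frontier model's routing decision.
        self._classify = classify
        self._registry: Dict[str, Specialist] = {}

    def register(self, domain: str, specialist: Specialist) -> None:
        self._registry[domain] = specialist

    def route(self, request: str) -> str:
        domain = self._classify(request)
        specialist = self._registry.get(domain)
        if specialist is None:
            raise LookupError(f"no specialist registered for domain {domain!r}")
        return specialist.handle(request)


def keyword_classifier(request: str) -> str:
    """Toy classifier (assumption): a real system would ask a model instead."""
    text = request.lower()
    if "revenue" in text or "dashboard" in text:
        return "business_intelligence"
    if "search" in text or "find" in text:
        return "search"
    return "unknown"


def build_demo_orchestrator() -> Orchestrator:
    """Wires two hypothetical specialists from different vendors."""
    orchestrator = Orchestrator(classify=keyword_classifier)
    orchestrator.register(
        "search",
        Specialist("search-agent", "vendor_a", lambda q: f"[search@vendor_a] {q}"),
    )
    orchestrator.register(
        "business_intelligence",
        Specialist("bi-agent", "vendor_b", lambda q: f"[bi@vendor_b] {q}"),
    )
    return orchestrator
```

Calling `build_demo_orchestrator().route("Find recent filings")` hands the request to the hypothetical search specialist, while a request that matches no registered domain raises `LookupError`. Because the registry is keyed by domain rather than by vendor, swapping one specialist for another leaves the orchestrator unchanged, which is the interoperability the paragraph above describes.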

AI Managing AI
