Building a Healthcare Robot From Simulation to Deployment with NVIDIA Isaac

Why It Matters

By tightly integrating simulation, training, evaluation and deployment, the workflow aims to cut development time, reduce costly real‑world trials, and accelerate safe Sim2Real adoption in medical robotics.

Summary

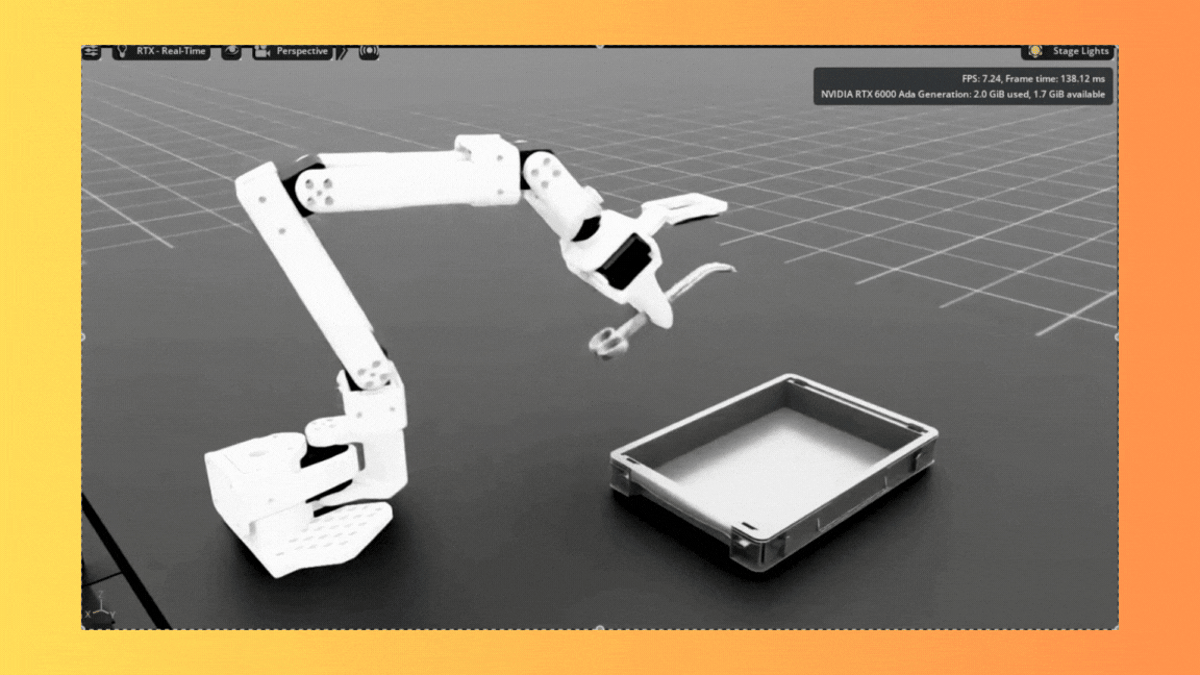

NVIDIA released Isaac for Healthcare v0.4 with an end‑to‑end SO‑ARM starter workflow that takes developers from mixed simulation and real‑world data collection through fine‑tuning to real‑time deployment of surgical assistant robots. The pipeline fine‑tunes GR00T N1.5 on roughly 70 simulated and 10–20 real episodes, a training mix described as over 93% synthetic. It supports dual‑camera vision and TensorRT optimization, and requires an Ampere‑class GPU with at least 30 GB of VRAM along with 6‑DOF SO‑ARM hardware.
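The summary does not say how the "over 93% synthetic" share is measured. As a rough sanity check, the sketch below computes the simulated share by episode count alone, using the quoted 70 simulated and 10–20 real episodes. The function name `synthetic_share` is illustrative and not part of any Isaac for Healthcare API.

```python
def synthetic_share(sim_episodes: int, real_episodes: int) -> float:
    """Return the fraction of episodes that come from simulation."""
    total = sim_episodes + real_episodes
    if total == 0:
        raise ValueError("need at least one episode")
    return sim_episodes / total


# Episode counts quoted in the summary; the real-episode count is a range.
SIM_EPISODES = 70

for real_episodes in (10, 20):
    share = synthetic_share(SIM_EPISODES, real_episodes)
    print(f"{SIM_EPISODES} sim + {real_episodes} real -> {share:.1%} synthetic by episode count")
```

By episode count the simulated share comes out between about 78% and 88%, so the 93% figure presumably uses a different unit, such as frames or training samples. That reading is an assumption; the summary does not state the unit.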

