Explainable AI Achieves 83.5% Accuracy with Quantized Active Ingredients and Boltzmann Machines

January 20, 2026

Key Takeaways

- QBMs achieve 83.5% accuracy on binarized MNIST.

- Classical Boltzmann Machines reach only 54% accuracy.

- QBMs produce lower attribution entropy (1.27 vs 1.39).

- Hybrid quantum‑classical circuits use entangling layers for richer representations.

- Saliency maps (for QBMs) and SHAP values (for CBMs) show that QBMs concentrate feature importance more clearly.

Summary

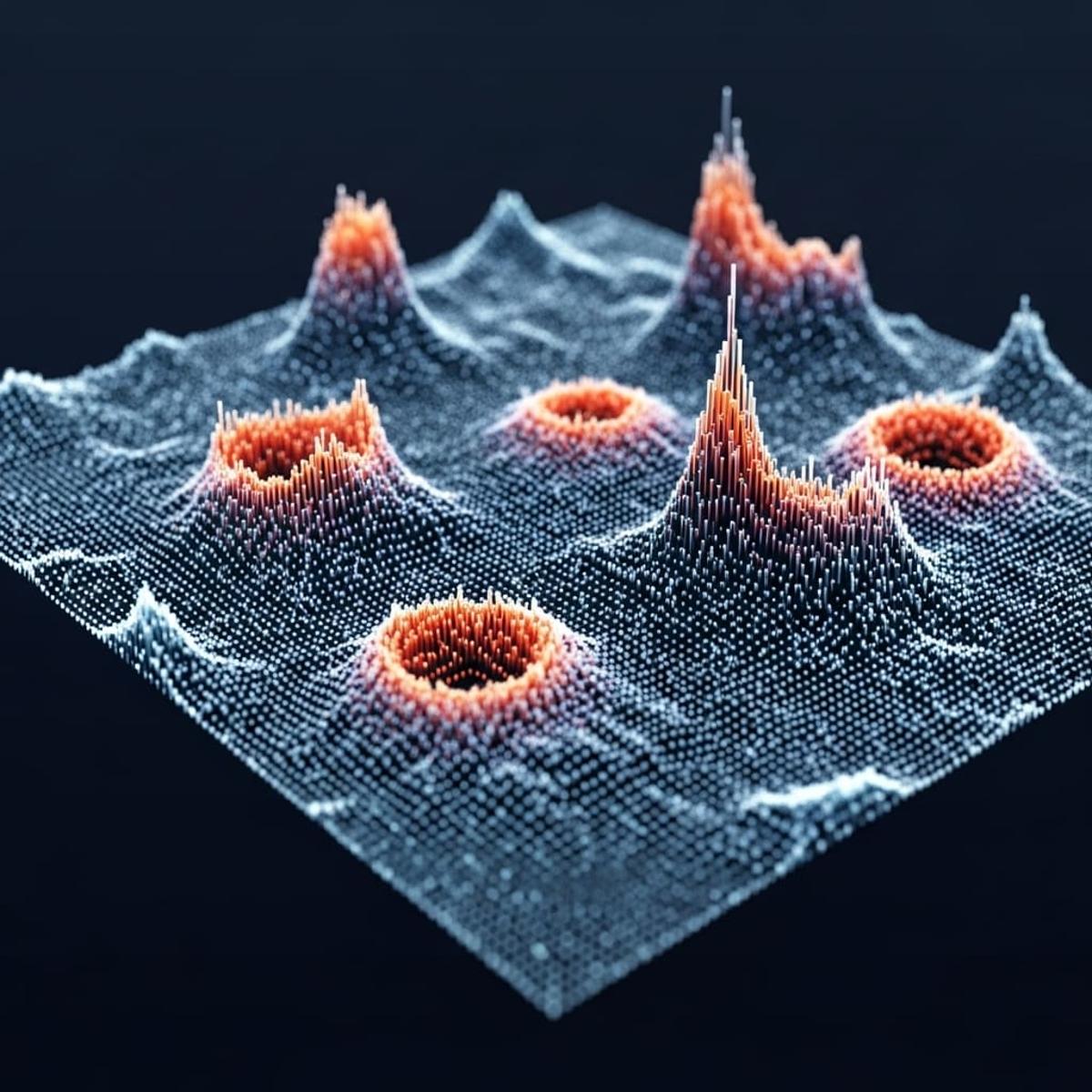

Researchers introduced a hybrid quantum‑classical framework that uses Quantized Boltzmann Machines (QBMs) to improve both performance and transparency in AI decision‑making. Tested on a binarized, PCA‑reduced MNIST subset, QBMs reached 83.5% classification accuracy, compared with 54% for classical Boltzmann Machines (CBMs). The study applied gradient‑based saliency maps to QBMs and SHAP values to CBMs, and found that QBM feature attributions were more concentrated (attribution entropy of 1.27 versus 1.39 for CBMs). These results suggest that quantum‑enhanced models can deliver more trustworthy, explainable AI.
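
To make "binarized, PCA‑reduced" concrete, here is a minimal preprocessing sketch. It rests on several assumptions that the article does not confirm: PCA is applied before binarization, the threshold is the per‑feature median, and four components are kept. The data is a synthetic stand‑in, not MNIST, and the helper names `pca_reduce` and `binarize` are hypothetical.

```python
import numpy as np

def pca_reduce(X, n_components):
    """Project rows of X onto the top principal components (via SVD)."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are principal directions, ordered by explained variance.
    _, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
    return X_centered @ Vt[:n_components].T

def binarize(Z):
    """Map each feature to {0, 1} by thresholding at its median (assumed rule)."""
    return (Z > np.median(Z, axis=0)).astype(np.int8)

# Synthetic stand-in for flattened 28x28 images; this is NOT real MNIST data.
rng = np.random.default_rng(0)
X = rng.random((200, 784))

n_components = 4  # hypothetical value; the article does not state the one used
X_bin = binarize(pca_reduce(X, n_components))
print(X_bin.shape)  # (200, 4)
```

Binary, low‑dimensional inputs of this kind suit Boltzmann machines, whose visible units are typically binary, and keep the feature count small enough for a simulated quantum layer.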

Pulse Analysis

The surge of interest in explainable AI reflects growing regulatory and ethical pressures, especially in domains where opaque decisions can have life‑changing consequences. Post‑hoc techniques such as SHAP and LIME have advanced interpretability for classical models, but models built to be transparent often give up some predictive power. Quantum‑enhanced models, particularly Quantized Boltzmann Machines, introduce a new dimension by leveraging superposition and entanglement to capture complex data correlations that classical networks may miss. In the study, this capability translated into higher classification accuracy, 83.5% on a reduced MNIST benchmark, positioning QBMs as a compelling alternative for tasks demanding both precision and clarity.
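
As a rough illustration of how an entangling layer captures correlations, the sketch below simulates a two‑qubit circuit with plain NumPy: one RY rotation per qubit followed by a CNOT. This is not the study's circuit. The gate choice, the qubit count, and the function names `circuit_state`, `expect_z`, and `expect_zz` are all assumptions made for the example.

```python
import numpy as np

def ry(theta):
    """Single-qubit RY rotation matrix."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

# CNOT with qubit 0 as control and qubit 1 as target (basis order |q0 q1>).
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)
Z = np.diag([1.0, -1.0])
I2 = np.eye(2)

def circuit_state(theta0, theta1):
    """Start in |00>, rotate each qubit, then entangle with a CNOT."""
    state = np.zeros(4)
    state[0] = 1.0
    state = np.kron(ry(theta0), ry(theta1)) @ state
    return CNOT @ state

def expect_z(state, qubit):
    """Expectation value of Pauli-Z on one qubit."""
    op = np.kron(Z, I2) if qubit == 0 else np.kron(I2, Z)
    return float(state @ op @ state)

def expect_zz(state):
    """Expectation value of Z on both qubits (their correlation)."""
    return float(state @ np.kron(Z, Z) @ state)

# theta0 = pi/2 puts qubit 0 in an equal superposition; the CNOT then
# produces the Bell state (|00> + |11>) / sqrt(2).
bell = circuit_state(np.pi / 2, 0.0)
print(expect_z(bell, 0), expect_z(bell, 1), expect_zz(bell))
```

In the Bell state each qubit on its own looks random (both single‑qubit expectations are approximately zero), while the two outcomes are perfectly correlated (the ZZ expectation is approximately one). In a hybrid model, expectation values like these would be passed to classical layers as features.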

Beyond raw performance, the study highlights a measurable improvement in interpretability through entropy analysis of feature‑attribution distributions. Lower entropy indicates that QBMs concentrate importance on fewer, more decisive features, simplifying the narrative for stakeholders. Gradient‑based saliency maps applied to the quantum layer provide visual explanations that align closely with SHAP values from classical baselines, yet with sharper focus. This convergence suggests that quantum models could meet, and potentially exceed, current explainability standards without necessarily sacrificing speed or scalability as quantum hardware matures.
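
The entropy comparison can be sketched as follows. The function treats the absolute attribution scores (from saliency maps, SHAP, or any other method) as a probability distribution and computes its Shannon entropy. The normalization by absolute value, the natural logarithm, and the name `attribution_entropy` are assumptions; the article does not say how the study computed its 1.27 and 1.39 figures, and the example scores are made up.

```python
import numpy as np

def attribution_entropy(attributions):
    """Shannon entropy (in nats) of normalized absolute attributions."""
    a = np.abs(np.asarray(attributions, dtype=float))
    p = a / a.sum()
    p = p[p > 0]  # treat 0 * log(0) as 0
    return float(-(p * np.log(p)).sum())

# Made-up attribution scores over four features, for illustration only.
uniform = [0.25, 0.25, 0.25, 0.25]  # importance spread evenly
peaked = [0.70, 0.10, 0.10, 0.10]   # importance concentrated on one feature

print(attribution_entropy(uniform))  # equals ln(4), about 1.386
print(attribution_entropy(peaked))   # lower than the uniform case
```

For scale, ln 4 ≈ 1.386 is the maximum possible entropy over four features in nats. Whether the study used four features or natural logarithms is not stated here, so the closeness to the reported 1.39 should not be over‑interpreted.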

Looking ahead, the integration of hybrid quantum‑classical architectures could reshape AI deployment strategies across regulated industries. By embedding quantum layers within existing pipelines, firms can incrementally adopt quantum advantages while retaining familiar classical components. The reduced parameter footprint of QBMs also promises cost‑effective training on near‑term quantum devices. As research progresses, we can expect broader validation on real‑world datasets, paving the way for quantum‑backed, transparent AI solutions that satisfy both performance metrics and compliance mandates.
