Key Takeaways

- AI Overviews answer correctly about 90% of the time.

- At Google’s search volume, the remaining errors add up to tens of millions of wrong answers every hour.

- More than half of the accurate answers cite sources that do not fully support them.

- The Gemini 3 upgrade brought only a modest accuracy improvement.

- Users should verify AI-generated information.

Pulse Analysis

Google’s AI Overviews have become a staple of modern search, surfacing concise answers directly on the results page. Their rise coincides with the company processing more than five trillion queries a year, a scale that magnifies any systematic flaw. While the technology promises speed and convenience, the Oumi study reveals that even a 90% correctness rate yields tens of millions of incorrect snippets every hour, underscoring the hidden risk embedded in high‑volume AI deployment.
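The scale claim can be sanity-checked with back-of-the-envelope arithmetic. The sketch below uses only the two figures quoted here (five trillion queries a year, 90% correctness). The `overview_share` parameter and the function name are assumptions added for illustration: the text does not say what fraction of queries actually display an AI Overview, so the default of 1.0 is an upper bound rather than a measured value.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def errors_per_hour(queries_per_year: float,
                    accuracy: float,
                    overview_share: float = 1.0) -> float:
    """Estimate how many incorrect AI Overview answers appear per hour.

    overview_share is a hypothetical parameter: the fraction of queries
    that show an AI Overview at all. It is not a figure from the study.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if not 0.0 <= overview_share <= 1.0:
        raise ValueError("overview_share must be between 0 and 1")
    overviews_per_hour = queries_per_year * overview_share / HOURS_PER_YEAR
    return overviews_per_hour * (1.0 - accuracy)


if __name__ == "__main__":
    # 5e12 queries/year and 0.90 accuracy come from the text;
    # the shares below are illustrative assumptions only.
    for share in (1.0, 0.25, 0.10):
        estimate = errors_per_hour(5e12, 0.90, share)
        print(f"overview share {share:.0%}: about {estimate:,.0f} errors/hour")
```

If every query showed an overview, the estimate comes to roughly 57 million errors an hour. Because the result scales linearly with the share, it stays in the millions even if only a tenth of queries trigger one.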

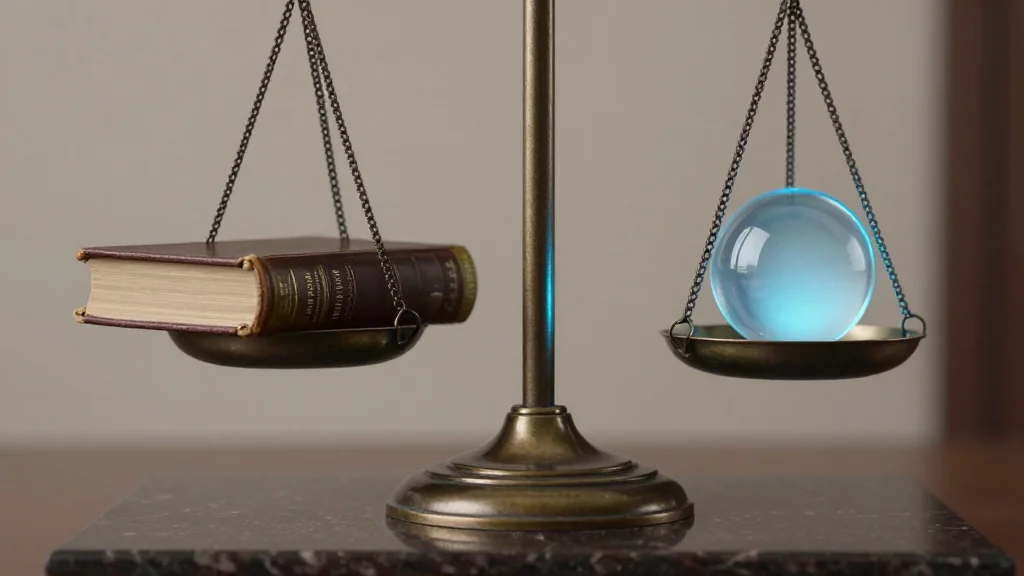

The crux of the accuracy problem lies in “ungrounded” responses—answers that appear correct but cite sources that do not fully support the information. Over half of the accurate‑looking answers fall into this category, making it difficult for users to verify claims without digging deeper. The transition from Gemini 2 to Gemini 3 showed a slight uptick in performance, but the fundamental challenge of source attribution remains. For businesses that rely on precise data, such as financial analysts or legal professionals, these inaccuracies can erode confidence and potentially lead to costly mistakes.

Looking ahead, the industry faces a pivotal decision: prioritize raw accuracy or enhance transparency. Google’s roadmap may involve tighter integration of citation mechanisms, real‑time fact‑checking, or user‑facing confidence scores. Meanwhile, experts advise users to treat AI Overviews as starting points rather than definitive answers. As AI continues to reshape information retrieval, the balance between convenience and verifiability will determine the technology’s long‑term credibility.

How Accurate Are Google’s A.I. Overviews?
