Summary

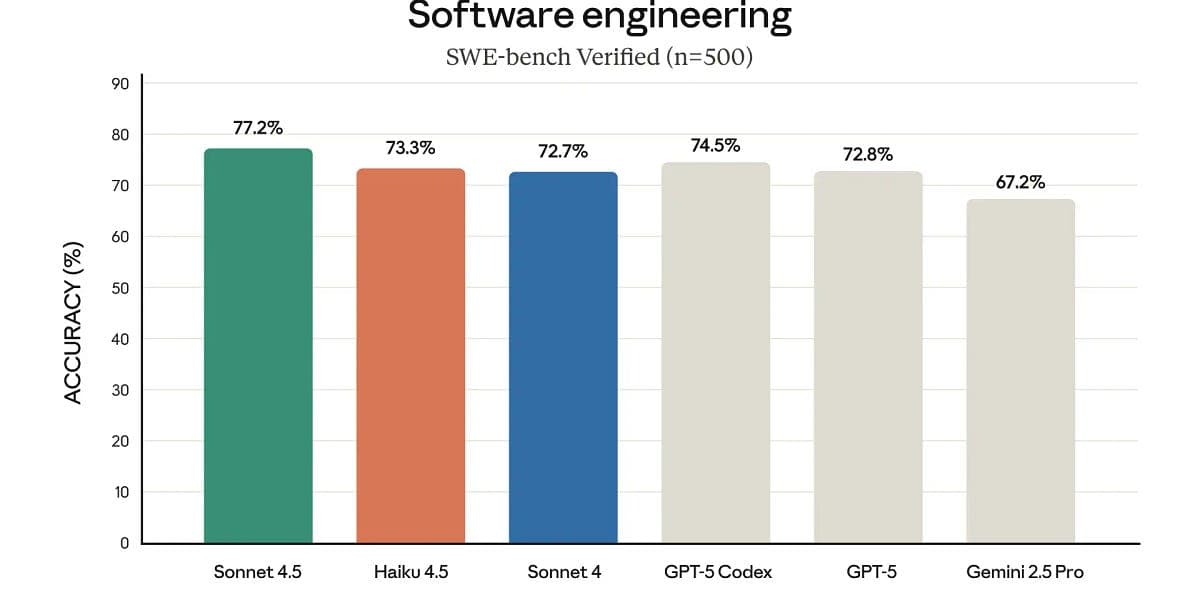

This week’s AI engineering roundup highlights three developments reshaping developer workflows and compute economics. OpenAI launched Agent Builder, a no-code drag-and-drop tool that lets non-developers assemble multi-agent workflows, alongside ChatKit, Evals and reinforcement fine-tuning. NVIDIA unveiled the DGX Spark, a desktop-class petaflop workstation built on Grace Blackwell with 128 GB of unified memory for heavy local AI workloads. Anthropic’s Claude Haiku 4.5 matches Sonnet-level reasoning and coding (73% on SWE-bench) while running twice as fast at one-third the cost. Together these moves lower barriers to AI deployment, tilt some workloads back to on-prem or local hardware for privacy and latency reasons, and accelerate the industry shift toward smaller, more efficient models that reduce cost and operational overhead. The net business implication: organizations must reassess cloud versus local compute strategies and model selection to balance cost, performance, and control as accessible tooling expands adoption beyond traditional developer teams.
