d-Matrix Secures $275M Funding to Accelerate AI Inference Chip Development

January 21, 2026

Why It Matters

By collapsing compute and memory into a single fabric, d‑Matrix aims to cut latency and power consumption for inference, offering enterprises an alternative to costly cloud APIs and large‑scale GPU farms.

Key Takeaways

- •d-Matrix raised $275 million to develop inference chips.

- •Chip uses Digital In‑Memory Compute to eliminate memory bottleneck.

- •DIMC architecture integrates compute directly into memory cells.

- •Chiplet design with RISC‑V control enables scalable acceleration.

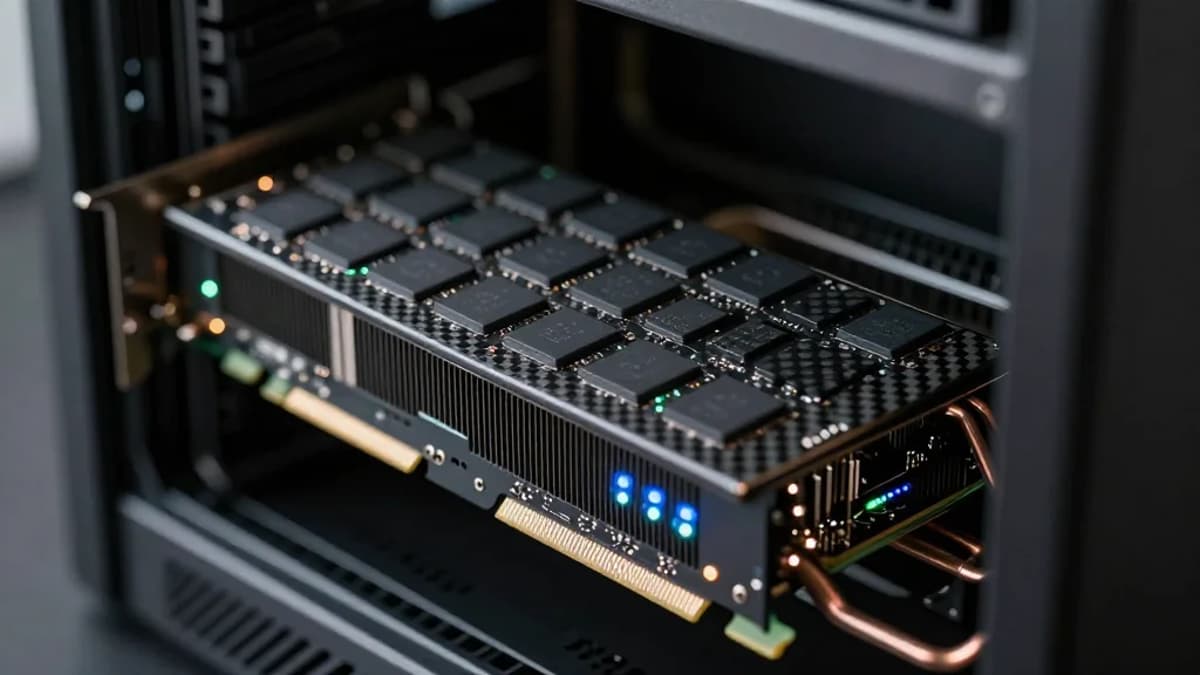

- •Jetstream PCIe cards target sub‑frontier LLM inference workloads.

Pulse Analysis

The surge in AI inference workloads is reshaping data‑center economics, as companies grapple with the high cost of running large language models in the cloud. While GPUs excel at training, their separated compute and memory pathways create latency that hampers real‑time inference, especially for fine‑tuned, domain‑specific models. d‑Matrix’s response is a hardware‑first strategy that re‑imagines the memory hierarchy, positioning the company to capture a growing market of enterprises that demand on‑premise, low‑latency AI services without the expense of massive GPU clusters.

At the heart of d‑Matrix’s Corsair platform is Digital In‑Memory Compute (DIMC), a technique that embeds matrix multiplication logic directly within SRAM cells. Because weights are multiplied where they are stored, much of the costly data shuttling between separate compute cores and memory banks goes away, which raises effective bandwidth and lowers energy per operation. The chiplet approach, orchestrated by a lightweight RISC‑V control core, lets the accelerator scale modularly to fit different model sizes. Compared with rivals like Nvidia’s GPUs or Google’s TPUs, the DIMC architecture is aimed squarely at transformer inference, where weight movement, not raw FLOPs, is the primary bottleneck.
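The claim that weight movement rather than raw FLOPs limits transformer inference can be illustrated with a rough roofline-style estimate. The sketch below is not d‑Matrix's methodology. It assumes a dense model in which every weight is read once per decode step and contributes about two FLOPs per sequence, and it ignores KV-cache and activation traffic. The `Accelerator` class, the `decode_step_bounds` function, and all device numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Hypothetical device described only by peak compute and memory bandwidth."""
    name: str
    peak_flops: float      # floating-point operations per second
    mem_bandwidth: float   # bytes per second available for streaming weights


def decode_step_bounds(params: float, bytes_per_param: float,
                       batch: int, dev: Accelerator) -> dict:
    """Lower bounds on the time to generate one token per sequence.

    Assumption: each weight is read once per step and used for one
    multiply and one add (2 FLOPs) per sequence in the batch.
    """
    weight_bytes = params * bytes_per_param
    flops = 2.0 * params * batch
    t_memory = weight_bytes / dev.mem_bandwidth   # time to stream the weights
    t_compute = flops / dev.peak_flops            # time to do the arithmetic
    return {
        "t_memory_s": t_memory,
        "t_compute_s": t_compute,
        "bound": "memory" if t_memory >= t_compute else "compute",
        "intensity_flops_per_byte": flops / weight_bytes,
    }


# Illustrative numbers only, not vendor specifications.
dev = Accelerator("hypothetical", peak_flops=400e12, mem_bandwidth=2e12)
single = decode_step_bounds(params=8e9, bytes_per_param=1.0, batch=1, dev=dev)
batched = decode_step_bounds(params=8e9, bytes_per_param=1.0, batch=512, dev=dev)

print(single["bound"], single["t_memory_s"], single["t_compute_s"])
print(batched["bound"], batched["t_memory_s"], batched["t_compute_s"])
```

Under these assumed numbers, a single-sequence decode step spends about 100 times longer streaming weights than computing on them, which is the regime an in-memory design targets. Only at large batch sizes does the same hypothetical device become compute bound.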

The commercial implications are significant. With its Jetstream PCIe cards, d‑Matrix provides a plug‑and‑play solution that integrates into existing server racks, enabling enterprises to run sub‑frontier models locally and reduce reliance on cloud APIs. This aligns with a broader industry shift toward hybrid AI deployments that blend edge inference with cloud scaling. As more startups enter the AI chip arena, d‑Matrix’s focus on memory‑centric design and rapid chiplet iteration could set a new performance‑per‑watt benchmark, accelerating the adoption of on‑premise generative AI across sectors.

Deal Summary

AI chip startup d-Matrix announced a $275 million funding round to advance its in‑memory compute platform for AI inference. The capital will support scaling of its Jetstream accelerator cards and further development of its Digital In‑Memory Compute technology. The round underscores growing investor interest in specialized AI inference hardware.
