AI Is Getting Better and Better at Generating Faces — but You Can Train to Spot the Fakes

December 27, 2025


Why It Matters

The findings highlight a growing security risk as synthetic faces become hyperreal, while also offering a low‑cost training solution that can improve human vigilance across the workforce.

Key Takeaways

- AI-generated faces fool even super recognizers
- Five-minute training boosts detection accuracy significantly
- Training works for typical and super recognizers alike
- Human-in-the-loop may augment AI detection tools

Pulse Analysis

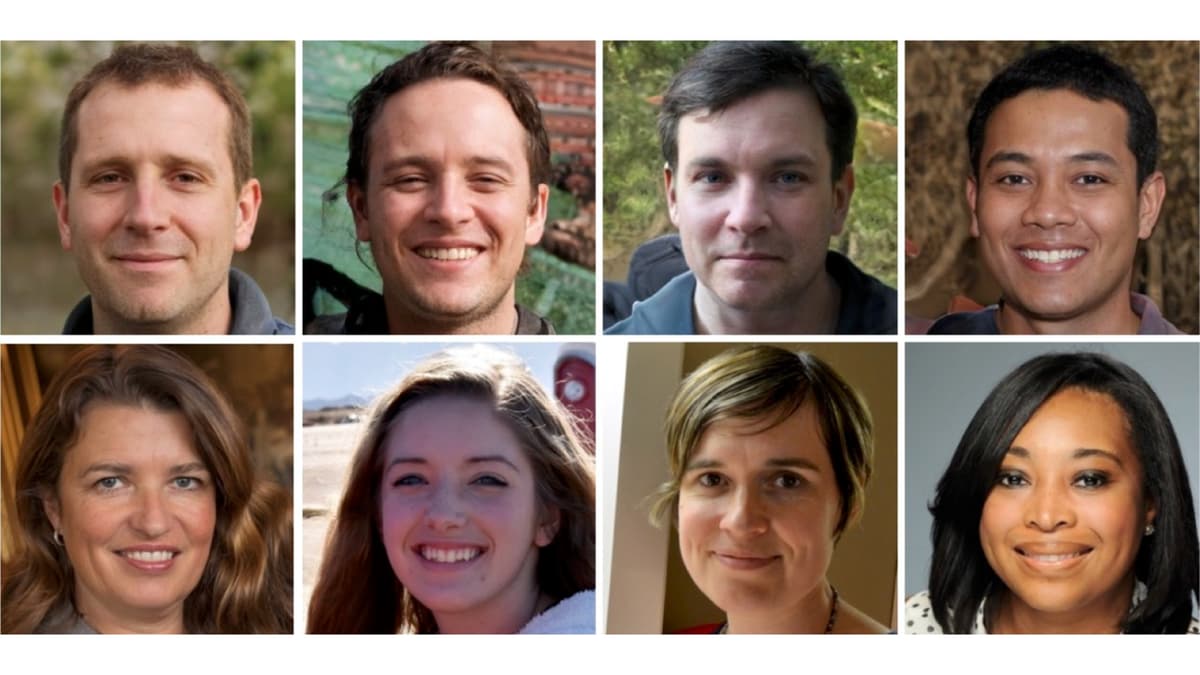

Rapid advances in generative adversarial networks (GANs) have pushed synthetic faces to a level of realism that challenges even highly skilled human observers. A GAN trains a generator network against a discriminator network that tries to tell real photographs from generated ones. As the generator learns to fool the discriminator, it produces portraits with near-flawless lighting, texture, and anatomical proportions, blurring the line between authentic photographs and algorithmic creations. This leap raises concerns for media verification, identity-fraud prevention, and the weaponization of deepfakes, and it has prompted a surge in research on detection methods.
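As background on the adversarial training described above, the original GAN formulation (Goodfellow et al., 2014) frames it as a two-player minimax game between a generator G, which maps random noise z to an image, and a discriminator D, which outputs the probability that its input is a real photograph. This is the textbook objective, shown only as a sketch. Practical face generators usually train with modified variants of this loss, so it should not be read as the exact method behind the faces used in the study.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

D is rewarded for scoring real photos near 1 and generated images near 0, while G is updated to push D(G(z)) toward 1. What gets refined over training is the generator's weights rather than any single image.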

In a November 2025 study, psychologists at the University of Reading found that neither super recognizers (people with exceptional face-processing abilities) nor typical participants could reliably spot AI-generated faces without guidance. A five-minute training session on common rendering flaws, such as misplaced teeth, irregular hairlines, or overly smooth skin, substantially raised accuracy in both groups. Because training improved both groups by a similar margin, whatever edge super recognizers have seems to rest on subtler cues than the obvious artefacts the session covered. The result also suggests that targeted instruction can spread detection skills to the wider population.

The practical implications matter for any industry that relies on visual authentication, from banking to social media moderation. Embedding trained human analysts in automated detection pipelines could reduce false positives and adapt to new generative techniques faster than fully automated systems. Scalable micro-learning modules could also give frontline staff the perceptual skills needed to flag synthetic media in real time. As synthetic media proliferates, pairing brief, evidence-based training with sophisticated algorithmic filters may offer a balanced, resilient defense against deepfake threats.
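The article does not describe a specific system, so the following is only a hypothetical sketch of one common human-in-the-loop pattern: an automated detector decides the confident cases and routes ambiguous ones to trained reviewers instead of auto-flagging them. The detector score, the threshold values `AUTO_REAL_BELOW` and `AUTO_FAKE_ABOVE`, and the names `triage`, `review_queue`, and `Decision` are all assumptions for illustration, not part of the Reading study.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds; a real deployment would tune these on validation data.
AUTO_REAL_BELOW = 0.2   # score below this: accept as authentic automatically
AUTO_FAKE_ABOVE = 0.9   # score above this: flag as synthetic automatically


@dataclass
class Decision:
    image_id: str
    score: float  # hypothetical detector output: estimated probability the face is AI-generated
    route: str    # "accept", "flag", or "human_review"


def triage(image_id: str, score: float,
           low: float = AUTO_REAL_BELOW, high: float = AUTO_FAKE_ABOVE) -> Decision:
    """Route one image based on a detector's synthetic-probability score."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    if score < low:
        route = "accept"
    elif score > high:
        route = "flag"
    else:
        # Uncertain band: defer to a trained human reviewer rather than guessing.
        route = "human_review"
    return Decision(image_id, score, route)


def review_queue(decisions: List[Decision]) -> List[str]:
    """Return IDs needing human review, most ambiguous (score closest to 0.5) first."""
    pending = [d for d in decisions if d.route == "human_review"]
    pending.sort(key=lambda d: abs(d.score - 0.5))
    return [d.image_id for d in pending]


if __name__ == "__main__":
    batch = [("a", 0.05), ("b", 0.55), ("c", 0.97), ("d", 0.35)]
    decisions = [triage(i, s) for i, s in batch]
    print([d.route for d in decisions])  # ['accept', 'human_review', 'flag', 'human_review']
    print(review_queue(decisions))       # ['b', 'd']
```

Widening the band between the two thresholds sends more images to people, trading reviewer time for fewer automated mistakes; that band is where briefly trained staff would add the most value.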
