AI's GPU Problem Is Actually a Data Delivery Problem

February 9, 2026


Why It Matters

Decoupling data delivery from storage prevents costly GPU starvation and shields AI pipelines from cascading failures, improving both the return on GPU investment and operational resilience.

Key Takeaways

- GPUs sit idle when storage cannot feed them data fast enough.

- Coupling AI frameworks directly to storage lets backend problems cascade into pipeline-wide failures.

- A programmable delivery layer abstracts storage endpoints and optimizes data flow.

- The result is higher GPU utilization and more predictable costs.

- The same layer strengthens security and traffic control for AI workloads.

Pulse Analysis

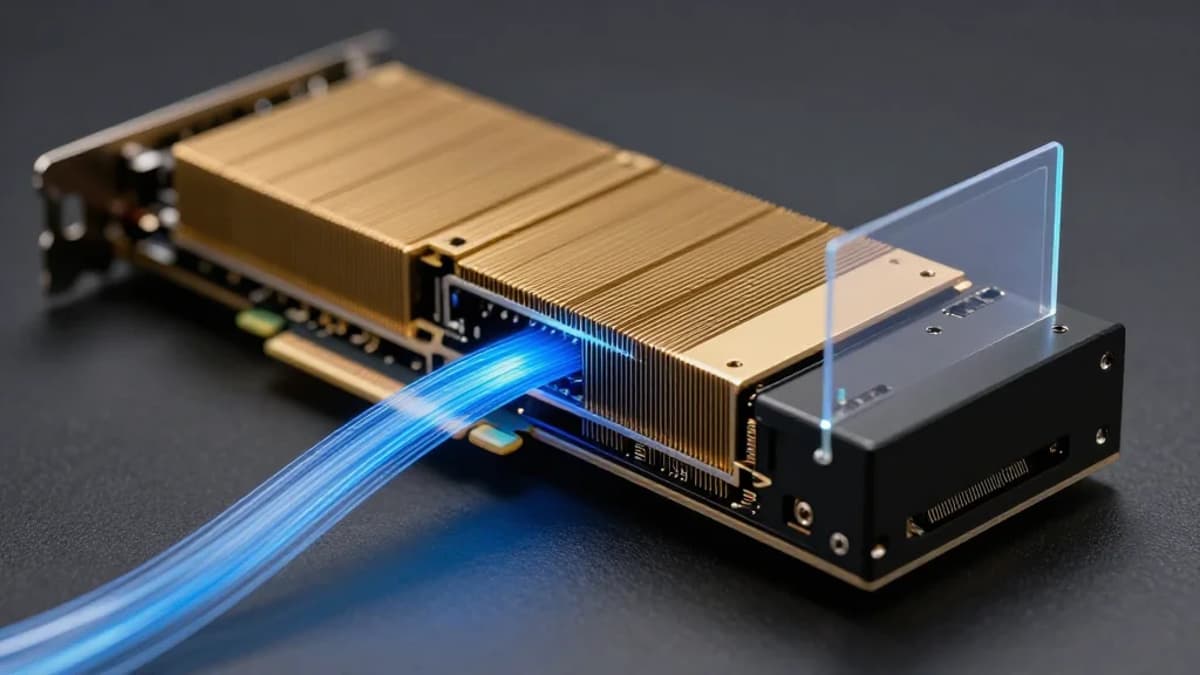

Enterprises pouring billions into GPU infrastructure are confronting a paradox: powerful processors remain under‑utilized because the data they need arrives too slowly. Traditional storage systems were designed for transactional workloads, not for the massive parallel reads, frequent checkpoint writes, and request amplification typical of large‑scale model training and Retrieval‑Augmented Generation. The resulting concurrency pressure on S3‑compatible object stores creates latency spikes and metadata bottlenecks that starve GPUs, turning capital‑intensive hardware into idle assets.
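To make the concurrency pressure concrete, here is a minimal Python sketch that assumes a simulated object store rather than a real S3 client. `fake_object_get` and `fetch_all` are hypothetical names, and the sketch only measures how many GETs are in flight at once. Without a cap, every read a job issues lands on the store at the same moment; an `asyncio.Semaphore` bounds that to `max_in_flight`, which is one simple form of the traffic shaping a delivery layer can apply.

```python
import asyncio
import random


async def fake_object_get(key: str, stats: dict) -> bytes:
    """Hypothetical stand-in for one GET against an S3-compatible store."""
    stats["in_flight"] += 1
    stats["peak"] = max(stats["peak"], stats["in_flight"])
    try:
        await asyncio.sleep(random.uniform(0.001, 0.005))  # simulated storage latency
        return key.encode()
    finally:
        stats["in_flight"] -= 1


async def fetch_all(keys, max_in_flight=None):
    """Read every key; optionally cap concurrent requests with a semaphore."""
    stats = {"in_flight": 0, "peak": 0}
    sem = asyncio.Semaphore(max_in_flight) if max_in_flight else None

    async def one(key):
        if sem is None:
            return await fake_object_get(key, stats)
        async with sem:  # wait for a free slot before issuing the GET
            return await fake_object_get(key, stats)

    results = await asyncio.gather(*(one(k) for k in keys))
    return results, stats["peak"]


if __name__ == "__main__":
    keys = [f"shard-{i:05d}" for i in range(512)]
    _, unbounded_peak = asyncio.run(fetch_all(keys))
    _, bounded_peak = asyncio.run(fetch_all(keys, max_in_flight=32))
    print("peak in-flight GETs, uncapped:", unbounded_peak)
    print("peak in-flight GETs, capped:  ", bounded_peak)
```

In a real pipeline the cap would be tuned against the store's observed latency and error rates rather than fixed; the point here is only that an unmanaged fan-out of reads translates directly into concurrent load on the storage tier.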

A dedicated data delivery layer acts as a programmable front door between compute and storage, insulating AI frameworks from storage idiosyncrasies. Solutions like F5’s BIG‑IP introduce health‑aware routing, intelligent caching, and protocol optimization close to the GPU, dynamically shaping traffic based on backend health signals. By abstracting storage endpoints, the layer can enforce granular security policies, encrypt traffic at line rate, and isolate misbehaving workloads without disrupting the entire service. This approach not only accelerates data ingestion and retrieval but also reduces cloud egress and storage amplification costs.
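The routing and caching ideas in the paragraph above can be sketched in a few lines. This is a hypothetical illustration, not F5 BIG‑IP's API or configuration: `Backend`, `DeliveryLayer`, and the `p99_latency_ms` health signal are invented names, and the cache is a plain in-memory LRU. Reads are served from cache when possible; otherwise they go to the healthy backend with the lowest reported latency, so a failing store is routed around instead of stalling the job.

```python
from collections import OrderedDict
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Backend:
    """Hypothetical S3-compatible storage endpoint plus its latest health signal."""
    name: str
    healthy: bool = True
    p99_latency_ms: float = 10.0  # assumed to be reported by an external health probe


class DeliveryLayer:
    """Hypothetical front door between AI jobs and storage: LRU cache + health-aware routing."""

    def __init__(self, backends: List[Backend],
                 fetch: Callable[[Backend, str], bytes], cache_size: int = 1024):
        self.backends = backends
        self.fetch = fetch  # injected storage client (hypothetical)
        self.cache_size = cache_size
        self.cache: "OrderedDict[str, bytes]" = OrderedDict()
        self.backend_hits: Dict[str, int] = {b.name: 0 for b in backends}

    def pick_backend(self) -> Backend:
        # Route only to backends currently reported healthy; prefer the fastest one.
        healthy = [b for b in self.backends if b.healthy]
        if not healthy:
            raise RuntimeError("no healthy storage backend available")
        return min(healthy, key=lambda b: b.p99_latency_ms)

    def get(self, key: str) -> bytes:
        # Cache hit: serve locally and mark the entry as most recently used.
        if key in self.cache:
            self.cache.move_to_end(key)
            return self.cache[key]
        # Cache miss: fetch from the chosen backend, then cache the result.
        backend = self.pick_backend()
        data = self.fetch(backend, key)
        self.backend_hits[backend.name] += 1
        self.cache[key] = data
        if len(self.cache) > self.cache_size:
            self.cache.popitem(last=False)  # evict the least recently used entry
        return data


if __name__ == "__main__":
    def fake_fetch(backend: Backend, key: str) -> bytes:
        return f"{backend.name}:{key}".encode()

    a = Backend("store-a", p99_latency_ms=8.0)
    b = Backend("store-b", p99_latency_ms=12.0)
    layer = DeliveryLayer([a, b], fake_fetch, cache_size=2)
    print(layer.get("shard-1"))  # b'store-a:shard-1' (fastest healthy backend)
    a.healthy = False
    print(layer.get("shard-2"))  # b'store-b:shard-2' (store-a routed around)
    print(layer.get("shard-1"))  # b'store-a:shard-1' (served from cache)
```

Marking `store-a` unhealthy in the usage example shifts new reads to `store-b` while cached shards keep flowing, which is the isolation property the paragraph describes. A production layer would also need real health probes, retries, and cache invalidation, none of which this sketch attempts.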

From a business perspective, the financial upside is compelling. Decoupling data delivery improves GPU utilization rates, turning idle cycles into productive compute and shortening training windows. Predictable data flow translates into steadier operating expenses, while the added resilience mitigates the risk of multi‑hour outages that can erode ROI. As AI systems become more agentic and more reliant on retrieval‑augmented generation, real‑time, policy‑driven data orchestration will become a competitive differentiator. Early adopters that treat data delivery as programmable infrastructure will scale faster, secure their AI pipelines, and capture market advantage.
