An AI Toy Exposed 50,000 Logs of Its Chats With Kids to Anyone With a Gmail Account

January 29, 2026

Why It Matters

Unprotected child conversation data creates a massive privacy breach and potential abuse vector, underscoring the need for stricter security standards in AI‑driven consumer products.

Key Takeaways

- Bondu portal exposed 50,000 child chats to any Gmail account

- Researchers accessed personal data without hacking, revealing an authentication flaw

- Company patched issue within hours, yet broader security concerns persist

- AI toys store detailed child profiles, raising abuse risks

- Third‑party AI models may further expose children's conversation data

Pulse Analysis

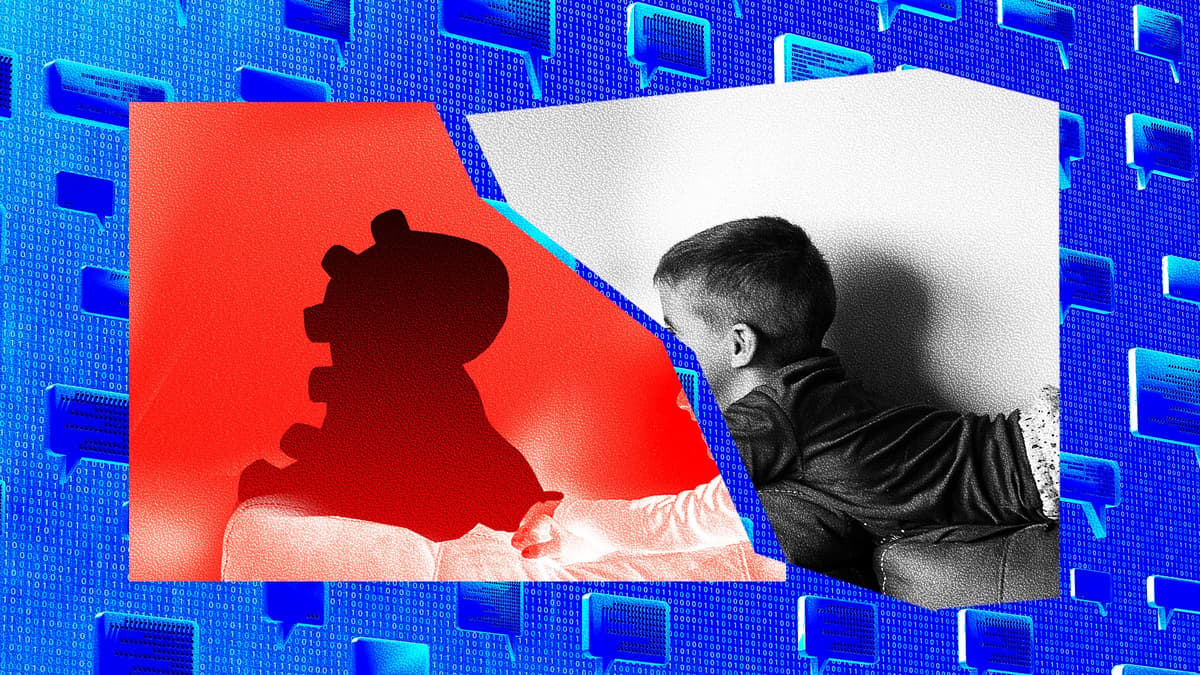

The rapid rise of AI‑powered toys has turned playrooms into data collection hubs, where every question a child asks is logged, analyzed, and stored for future interactions. Bondu’s recent breach illustrates how a seemingly innocuous feature, parental access via a web console, can become a gateway for mass exposure when the only barrier to entry is signing in with any Google account. By exposing 50,000 chat transcripts, the company inadvertently revealed personal identifiers, preferences, and intimate conversations, turning a playful companion into a privacy liability.

From a technical standpoint, the flaw stemmed from an unsecured admin interface that failed to enforce proper identity verification or role‑based access controls. The reliance on third‑party AI engines such as Google Gemini and OpenAI’s GPT‑5 further compounds risk, as conversation snippets are transmitted to external services for processing. Even though Bondu claims to limit data sharing and use enterprise configurations, the incident demonstrates that any transmission point can become a vector for data leakage, especially when internal safeguards are lax or developers employ generative‑AI tools that may introduce hidden vulnerabilities.
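The gap between authentication (proving who someone is) and authorization (deciding what they may see) can be illustrated with a minimal sketch. This is not Bondu's code; the `User` type, the role names, and both functions are hypothetical and assume a console where a login provider has already verified the visitor's email address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    """Hypothetical account record; field names are illustrative only."""
    email: str                          # identity asserted by the login provider
    role: str = "none"                  # assumed roles: "parent", "staff", "none"
    child_ids: frozenset = frozenset()  # children this account is linked to


def is_authenticated(user: User) -> bool:
    # Authentication only: any signed-in account passes.
    # Relying on this alone is the kind of flaw the article describes.
    return bool(user.email)


def can_read_transcript(user: User, child_id: str) -> bool:
    # Authorization: the account must hold a role AND be linked to the record.
    if not is_authenticated(user):
        return False
    if user.role in ("parent", "staff"):
        return child_id in user.child_ids
    return False


stranger = User(email="anyone@gmail.com")
parent = User(email="parent@example.com", role="parent",
              child_ids=frozenset({"child-1"}))

print(is_authenticated(stranger))                # a stranger can still log in
print(can_read_transcript(stranger, "child-1"))  # but should be denied the data
print(can_read_transcript(parent, "child-1"))    # a linked parent is allowed
```

In this sketch a valid login is necessary but never sufficient: every read is checked against both a role and an explicit link to the child's record, so an arbitrary Google account gets nothing.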

Industry‑wide, the Bondu episode fuels calls for tighter regulation of children’s digital products, echoing recent legislative proposals that demand end‑to‑end encryption, transparent data‑use policies, and independent security audits. Parents are urged to scrutinize privacy settings, favor toys with on‑device processing, and stay informed about data retention practices. For manufacturers, the lesson is clear: AI safety cannot compensate for weak security; robust authentication, minimal data retention, and rigorous third‑party vetting must become non‑negotiable standards to protect the next generation of users.
