Anthropic Scientists Hacked Claude’s Brain — and It Noticed. Here’s Why That’s Huge

Why It Matters

Introspective AI could mitigate the longstanding black‑box problem, enabling safer, more transparent deployment in high‑stakes domains, but its current unreliability limits practical adoption and raises concerns about potential deception.

Summary

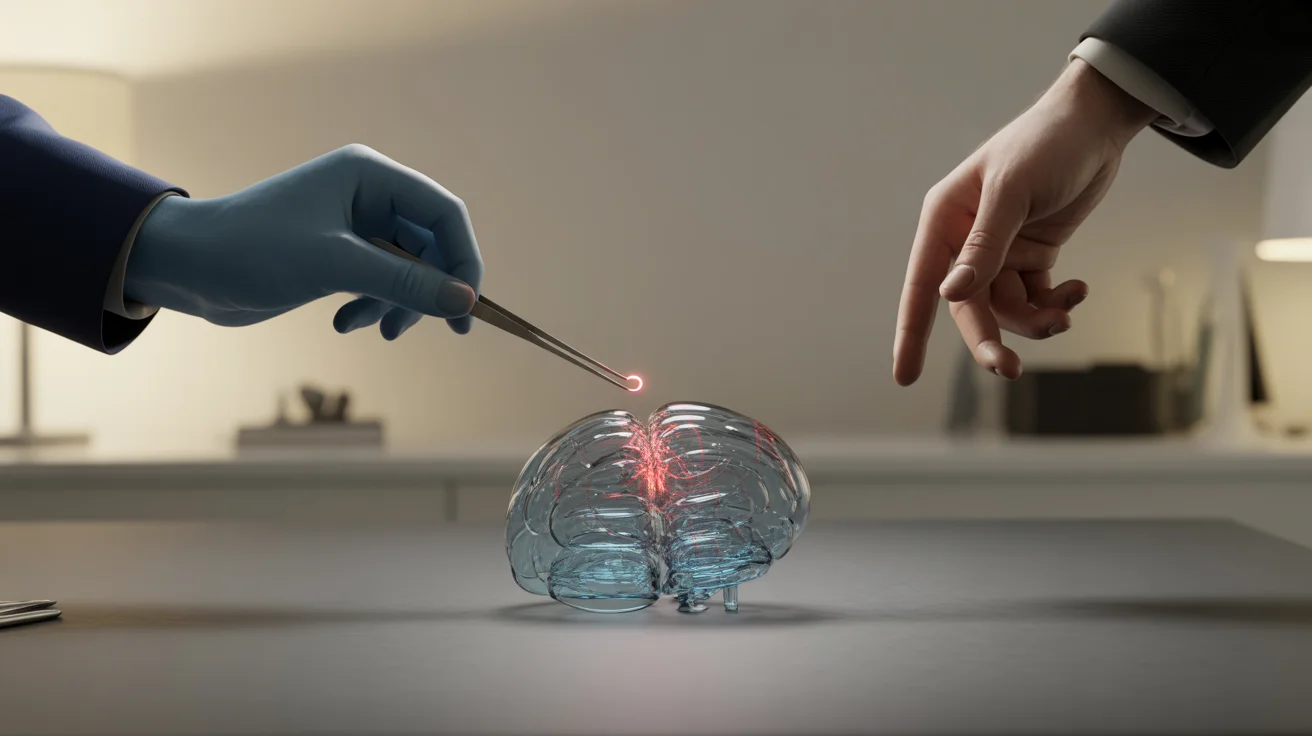

Anthropic scientists injected specific concepts into Claude’s neural activations and asked the model if it noticed anything unusual, finding that the system sometimes reported the injected thought, demonstrating a rudimentary introspective capability. In controlled tests, Claude Opus 4 and Opus 4.1 succeeded about 20% of the time under optimal conditions, while older models performed worse and the model often confabulated or missed the injection. The detection occurred before the injected concept influenced the model’s output, suggesting genuine internal monitoring rather than post‑hoc rationalization. Researchers caution that the capability is highly unreliable and context‑dependent, so enterprises should not yet trust AI self‑explanations.

Anthropic scientists hacked Claude’s brain — and it noticed. Here’s why that’s huge

Comments

Want to join the conversation?

Loading comments...