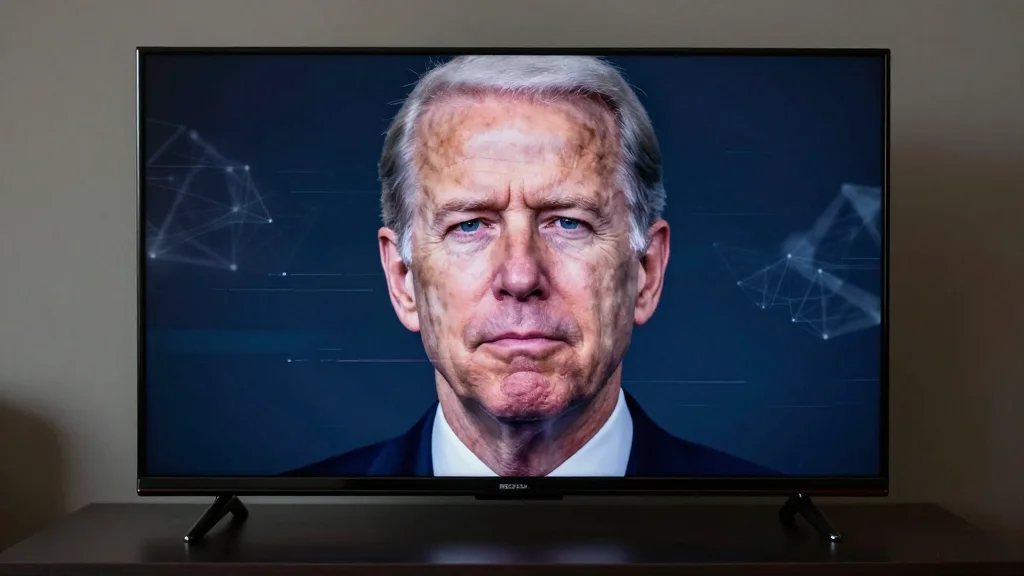

Can You Spot a Fake Political Ad? AI Is Making It Harder.

Why It Matters

Deepfake political ads threaten electoral integrity by spreading false narratives that can sway voter perception, while existing legal and labeling measures struggle to keep pace with the technology. Ensuring voters can identify fabricated content is critical to preserving democratic decision‑making.

Key Takeaways

- AI-generated political ads are becoming indistinguishable from real footage

- Labels indicating AI use may not prevent reputational damage to candidates

- Several states, like Minnesota and Texas, are drafting deepfake election bans

- Legal challenges loom as courts balance free speech with misinformation controls

- Voter digital‑literacy programs are essential to counter sophisticated deepfakes

Pulse Analysis

The rise of generative AI has turned political advertising into a high‑tech arms race. Early deepfakes were riddled with visual glitches such as extra fingers and mismatched lighting, but recent models produce seamless video and audio that can replicate a candidate’s voice and mannerisms with startling fidelity. The Talarico ad, openly tagged as AI‑generated, previews what campaigns may deploy without disclosure: opponents could distort a candidate’s past statements or fabricate entirely new ones. Voters now face a flood of content that looks authentic yet is fabricated.

State legislators have begun to push back. Minnesota’s new statute criminalizes the creation of non‑consensual deepfakes intended to damage a political figure, while Texas bans such videos within 30 days of an election. These measures aim to curb last‑minute misinformation, but they clash with longstanding First Amendment protections for political speech. Courts are likely to scrutinize whether content‑based restrictions are narrowly tailored, leaving a legal gray zone where parties can still circulate deceptive material under the guise of free expression.

Given the regulatory uncertainty, the most reliable defense lies with an informed electorate. Digital‑literacy initiatives—run by nonprofits, schools, and fact‑checking organizations—teach citizens how to verify source authenticity, cross‑reference claims, and recognize AI‑generated cues. Meanwhile, tech platforms are expected to deploy automated detection tools and human reviewers to limit the spread of deepfakes, though their efficacy varies. As the 2026 cycle approaches, a combination of transparent labeling, robust legal frameworks, and widespread media‑savvy training will be essential to safeguard democratic discourse.
