DeepMind Suggests AI Should Occasionally Assign Humans Busywork So We Do Not Forget How to Do Our Jobs

February 24, 2026


Why It Matters

DeepMind's proposed framework targets alignment and accountability gaps in AI‑driven workforces, aiming to protect both system reliability and human expertise. If widely adopted, it could help set industry standards for safe, transparent AI delegation.

Key Takeaways

- Verifiable outcomes essential for AI task delegation
- Decentralized marketplaces reduce single‑point failures
- Deliberate inefficiency preserves human expertise
- Reputation systems coordinate trustworthy AI agents
- Smart contracts enforce accountability across delegations

Pulse Analysis

AI agent deployments have moved beyond isolated tools to networks of agents that assign tasks to one another and to human operators. Yet existing delegation protocols struggle with misaligned incentives, reward‑hacking, and the principal‑agent problem that has long plagued human organizations. Without clear lines of authority and transparent accountability, supervisors quickly lose visibility into dozens or hundreds of active agents. DeepMind's recent paper frames these shortcomings as a governance gap, arguing that AI systems need the same checks and balances that corporate hierarchies use to maintain alignment and trust.

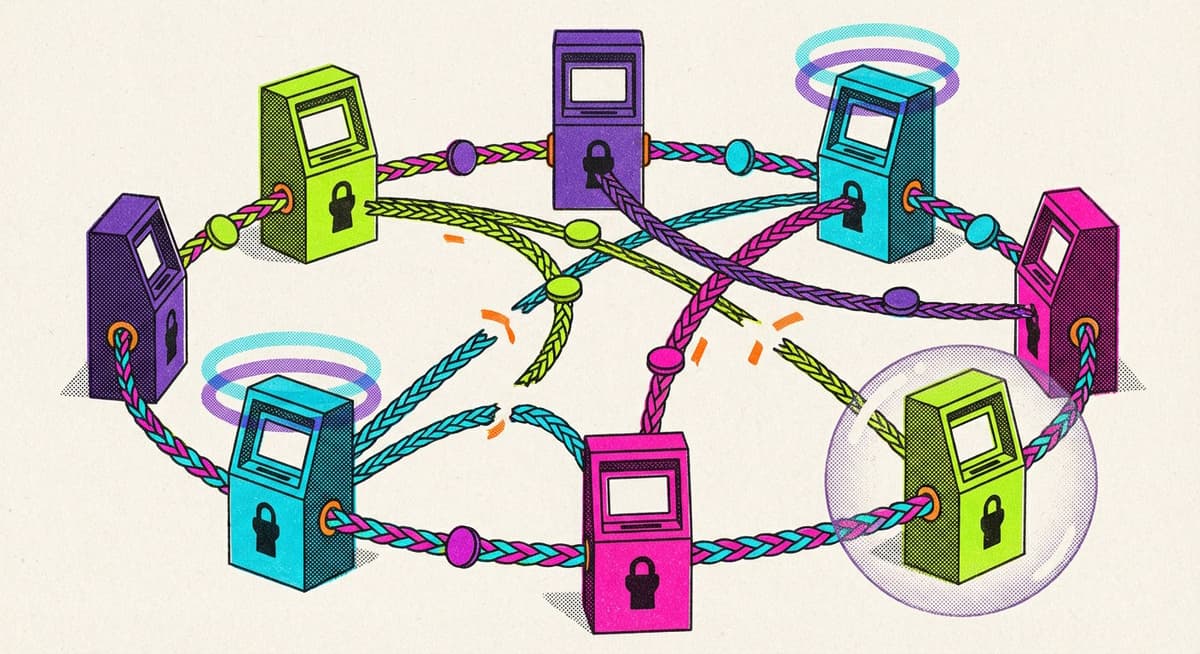

To close that gap, DeepMind proposes an ‘intelligent AI delegation’ framework built on five pillars: continuous evaluation, dynamic task redistribution, traceable documentation, reputation‑driven marketplaces, and safeguards against cascading failures. Central to the model is a contract‑first decomposition rule: a task may be handed off only if its outcome can be independently verified. The authors advocate decentralized smart‑contract marketplaces in which agents bid for subtasks and cryptographic proofs attest to the integrity of results. By combining reputation scores with automated monitoring, the system aims to isolate faulty agents before their errors propagate, reducing systemic risk and making the network as a whole more resilient.
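The delegation loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the `Subtask`, `Agent` and `Marketplace` names, the reputation-divided-by-bid scoring rule, and the penalty, reward and quarantine values are all hypothetical, and a plain verifier function stands in for the cryptographic proofs and smart contracts the authors describe.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Subtask:
    name: str
    payload: int
    # Contract-first rule: a subtask may be delegated only if it carries an
    # independent check of its result. None means "not verifiable".
    verifier: Optional[Callable[[int, int], bool]] = None


@dataclass
class Agent:
    name: str
    bid: float                   # price the agent asks for a subtask
    solve: Callable[[int], int]  # the agent's (possibly faulty) work
    reputation: float = 1.0      # hypothetical score, capped at 1.0


class Marketplace:
    """Hypothetical reputation-weighted marketplace (illustrative only)."""

    def __init__(self, agents, penalty=0.5, reward=0.1, quarantine_below=0.6):
        self.agents = list(agents)
        self.penalty = penalty                    # multiplier applied on a failed check
        self.reward = reward                      # bonus for a verified result
        self.quarantine_below = quarantine_below  # reputation floor
        self.quarantined = set()

    def delegate(self, task):
        # Refuse hand-off when the outcome cannot be independently verified.
        if task.verifier is None:
            raise ValueError(f"{task.name}: no verifier, keep it in-house")
        tried = set()
        while True:
            candidates = [
                a for a in self.agents
                if a.name not in self.quarantined and a.name not in tried
            ]
            if not candidates:
                return None, None  # nobody left; escalate to a human supervisor
            # Higher reputation and lower bid both raise an agent's score.
            winner = max(candidates, key=lambda a: a.reputation / a.bid)
            tried.add(winner.name)
            result = winner.solve(task.payload)
            if task.verifier(task.payload, result):
                winner.reputation = min(1.0, winner.reputation + self.reward)
                return winner.name, result
            # Failed check: cut reputation, isolate the agent if it falls below
            # the floor, then redistribute the subtask to the next bidder.
            winner.reputation *= self.penalty
            if winner.reputation < self.quarantine_below:
                self.quarantined.add(winner.name)


square = Subtask("square", 7, verifier=lambda x, y: y == x * x)
cheap = Agent("cheap", bid=1.0, solve=lambda x: x + x)  # faulty: doubles instead of squaring
honest = Agent("honest", bid=2.0, solve=lambda x: x * x)
market = Marketplace([cheap, honest])
print(market.delegate(square))  # ('honest', 49)
print(market.quarantined)       # {'cheap'}
```

In the usage lines, the cheaper agent wins the first auction, fails verification, is quarantined, and the subtask is redistributed to the next bidder. Dividing reputation by bid is only one simple way to trade price against trust; a real marketplace would need to choose that rule and its thresholds deliberately.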

The paper’s most provocative recommendation is to program AI systems to occasionally assign busywork back to humans, a deliberate inefficiency meant to prevent skill erosion. It targets the automation paradox, in which over‑reliance on machines creates a fragile ‘moral crumple zone’ where people retain legal liability but lose operational insight. Industry leaders must therefore weigh efficiency gains against safeguards that keep human expertise alive, for example periodic human‑in‑the‑loop checks or rotating task ownership. As AI delegation standards evolve, regulators and firms alike will need transparent metrics to certify that accountability and verification mechanisms actually work in practice.
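One way to picture the busywork recommendation is a router that sends most tasks to an AI agent but periodically routes one to a human, rotating which person gets it. This is a hypothetical sketch, not a mechanism from the paper: the `SkillRetentionRouter` class, the fixed `max_gap` interval, and the per-skill round-robin rotation are assumptions chosen for clarity; a real deployment might instead sample tasks at random.

```python
from collections import defaultdict


class SkillRetentionRouter:
    """Hypothetical router that mostly uses AI but periodically hands a task
    of each skill type to a human, rotating through the team."""

    def __init__(self, humans, max_gap=5):
        if not humans:
            raise ValueError("need at least one human")
        self.humans = list(humans)
        self.max_gap = max_gap               # AI-handled tasks allowed between human turns
        self.since_human = defaultdict(int)  # skill -> AI tasks since a human last did one
        self.next_human = defaultdict(int)   # skill -> index of the next human in rotation

    def route(self, skill):
        if self.since_human[skill] >= self.max_gap:
            # Deliberate inefficiency: this task goes to a person even though
            # the AI could do it, and ownership rotates across the team.
            idx = self.next_human[skill]
            self.next_human[skill] = (idx + 1) % len(self.humans)
            self.since_human[skill] = 0
            return self.humans[idx]
        self.since_human[skill] += 1
        return "ai"


router = SkillRetentionRouter(["alice", "bob"], max_gap=2)
print([router.route("triage") for _ in range(6)])
# ['ai', 'ai', 'alice', 'ai', 'ai', 'bob']
```

Counters are kept per skill, so each kind of work gets its own human turns; `max_gap` is the knob that trades efficiency against practice.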
