Google Cloud Announces Eighth-Generation TPUs, Boasting AI Training and Inference Leaps

Why It Matters

The new TPUs are designed to shorten frontier-model training cycles and cut inference latency, giving enterprises a more cost-effective way to scale AI agents while reducing energy consumption, a critical advantage as AI workloads grow rapidly.

Key Takeaways

- TPU 8t delivers 2.8× training speed over Ironwood

- TPU 8i’s Boardfly topology cuts inference latency by up to 50%

- Virgo Network links up to 134,000 chips in a single data center

- New chips double performance per watt, slashing AI energy costs

- Google’s split TPU line mirrors AWS’s Trainium/Inferentia strategy

Pulse Analysis

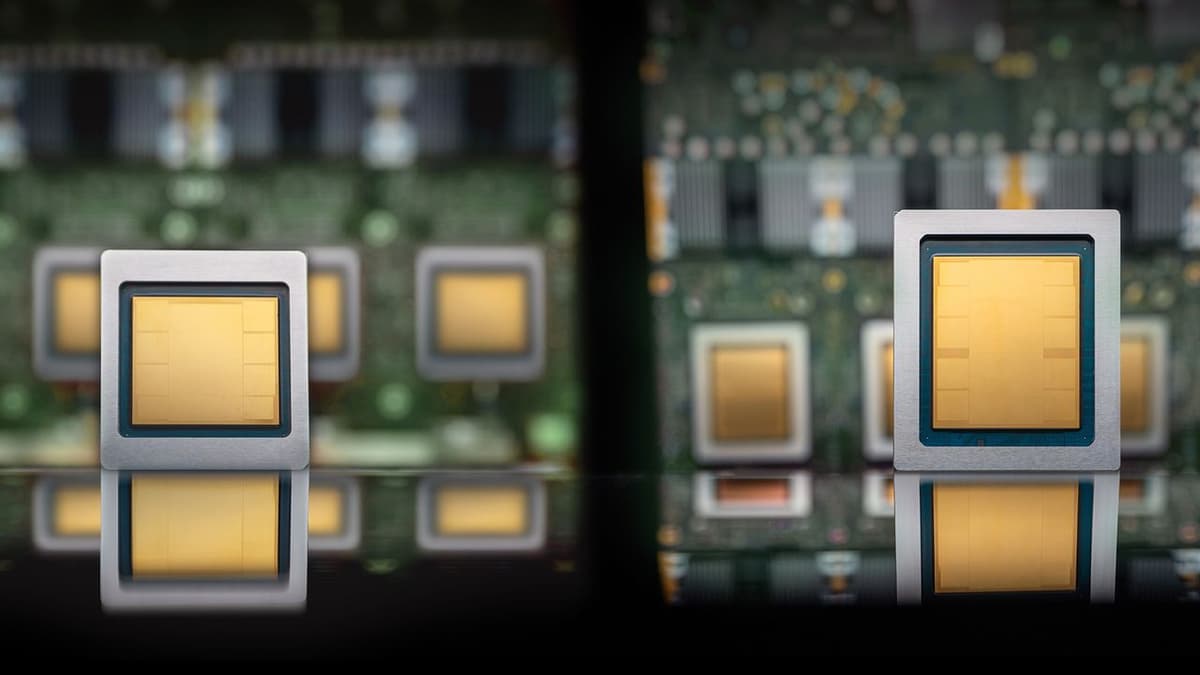

Google Cloud's eighth-generation TPUs arrive as the AI hardware race intensifies. The TPU 8t, aimed at frontier-model training, offers 121 FP4 exaflops, roughly 2.8 times the compute of the previous Ironwood generation. By scaling pods to 9,600 chips and providing 19.2 Tbit/s of bandwidth per chip, Google promises to shrink model-training timelines from months to weeks, a shift that could accelerate product development cycles across industries.
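The headline training figures can be cross-checked with simple arithmetic. The Python sketch below is only a back-of-the-envelope illustration: it assumes the 121 FP4 exaflops figure is a total for one 9,600-chip pod and that training time scales inversely with compute, neither of which the article states. The function names and the 90-day baseline are hypothetical.

```python
# Back-of-the-envelope arithmetic on the figures quoted in the article.
# Assumptions (not stated in the article): the 121 FP4 exaflops figure is a
# total for one 9,600-chip pod, and training time scales inversely with compute.

TPU8T_FP4_EXAFLOPS = 121.0      # quoted figure
SPEEDUP_VS_IRONWOOD = 2.8       # quoted ratio
CHIPS_PER_POD = 9_600           # quoted pod size
TBITS_PER_SEC_PER_CHIP = 19.2   # quoted per-chip bandwidth


def implied_baseline_exaflops(new_exaflops: float, speedup: float) -> float:
    """Baseline compute implied by a quoted speedup ratio."""
    return new_exaflops / speedup


def per_chip_petaflops(pod_exaflops: float, chips: int) -> float:
    """Spread a pod-level exaflops total evenly across its chips."""
    return pod_exaflops * 1_000 / chips


def aggregate_pbits_per_sec(tbits_per_chip: float, chips: int) -> float:
    """Simple sum of per-chip bandwidth over a pod, in Pbit/s (ignores topology)."""
    return tbits_per_chip * chips / 1_000


def ideal_training_days(baseline_days: float, speedup: float) -> float:
    """Best-case training time if the job is purely compute-bound."""
    return baseline_days / speedup


if __name__ == "__main__":
    baseline = implied_baseline_exaflops(TPU8T_FP4_EXAFLOPS, SPEEDUP_VS_IRONWOOD)
    per_chip = per_chip_petaflops(TPU8T_FP4_EXAFLOPS, CHIPS_PER_POD)
    fabric = aggregate_pbits_per_sec(TBITS_PER_SEC_PER_CHIP, CHIPS_PER_POD)
    days = ideal_training_days(90.0, SPEEDUP_VS_IRONWOOD)  # 90 days is hypothetical

    print(f"Implied Ironwood baseline: {baseline:.1f} exaflops")
    print(f"Per-chip throughput:       {per_chip:.1f} petaflops")
    print(f"Summed pod bandwidth:      {fabric:.2f} Pbit/s")
    print(f"Ideal time for a 90-day job: {days:.1f} days")
```

Under these assumptions, a 2.8× compute gain alone takes a three-month run to roughly one month. Real training jobs are also limited by memory, communication, and data pipelines, so the actual reduction depends on more than raw exaflops.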

On the inference side, the TPU 8i introduces several architectural changes. Its 384 MB of on-chip SRAM stores short-term agent memory, while dual Axion CPUs orchestrate data flow to reduce idle cycles. The new Boardfly network topology replaces the older 3D torus and delivers up to a 50% latency reduction for agent-swarm workloads. The Virgo Network fabric can interconnect 134,000 chips in a single data center and over a million across sites. Together, these give Google a unified, low-latency AI hypercomputer that can handle massive, multimodal workloads with sub-millisecond telemetry.

Beyond raw performance, energy efficiency is a decisive factor for enterprise adopters. Google claims the eighth‑gen TPUs achieve double the performance per watt compared with Ironwood, translating into substantial cost savings for continuous‑inference workloads such as AI agents. Early adopters like Citadel Securities report a 30% reduction in trading‑system expenses. By segmenting its TPU portfolio into dedicated training and inference chips, Google aligns with AWS’s Trainium/Inferentia approach, positioning itself as a one‑stop provider for end‑to‑end AI infrastructure as the market anticipates billions of AI agents operating by 2028.
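The link between performance per watt and energy cost can be made explicit. Performance per watt is operations per second per watt, which is the same as operations per joule, so doubling it halves the energy needed for a fixed amount of work. The Python sketch below illustrates this; the workload size, efficiency values, and electricity price are hypothetical placeholders, not figures from Google or the article.

```python
# Sketch: how a performance-per-watt gain maps to energy cost for a fixed workload.
# All numeric inputs in the usage section are hypothetical placeholders.

JOULES_PER_KWH = 3.6e6


def energy_kwh(total_operations: float, ops_per_joule: float) -> float:
    """Energy for a workload, given efficiency in operations per joule
    (equivalent to operations per second per watt)."""
    return total_operations / ops_per_joule / JOULES_PER_KWH


def energy_cost(total_operations: float, ops_per_joule: float,
                price_per_kwh: float) -> float:
    """Electricity cost of the workload at a flat price per kWh."""
    return energy_kwh(total_operations, ops_per_joule) * price_per_kwh


def relative_energy_cost(perf_per_watt_gain: float) -> float:
    """Energy cost relative to the baseline for the same workload."""
    return 1.0 / perf_per_watt_gain


if __name__ == "__main__":
    workload = 1e21          # hypothetical operation count
    old_efficiency = 1e12    # hypothetical ops per joule
    new_efficiency = 2 * old_efficiency  # the claimed doubling
    price = 0.10             # hypothetical price per kWh

    old_cost = energy_cost(workload, old_efficiency, price)
    new_cost = energy_cost(workload, new_efficiency, price)
    print(f"Baseline energy cost: {old_cost:.2f}")
    print(f"New energy cost:      {new_cost:.2f}")
    print(f"Ratio:                {new_cost / old_cost:.2f}")
```

This covers the energy component only. Total cost of ownership also includes hardware, cooling, and networking, so a 2× efficiency gain does not by itself imply a 2× drop in overall spend.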
