Google Owns the Most AI Compute, and It Built It Its Way

Why It Matters

The dominance of Google’s custom silicon gives it leverage over AI pricing and availability, while the broader shift toward hyperscaler‑owned data‑center capacity accelerates the move away from on‑premise solutions.

Key Takeaways

- Google controls ~25% of global AI compute, or ~5M H100-equivalent (H100e) units.

- Only ~25% of Google's AI compute runs on Nvidia GPUs.

- Microsoft and Amazon trail Google with ~3.5M and ~2.5M H100e units, respectively.

- Hyperscalers will own 67% of data‑center capacity by 2031.

- Custom silicon adoption reduces dependence on Nvidia for AI training.

Pulse Analysis

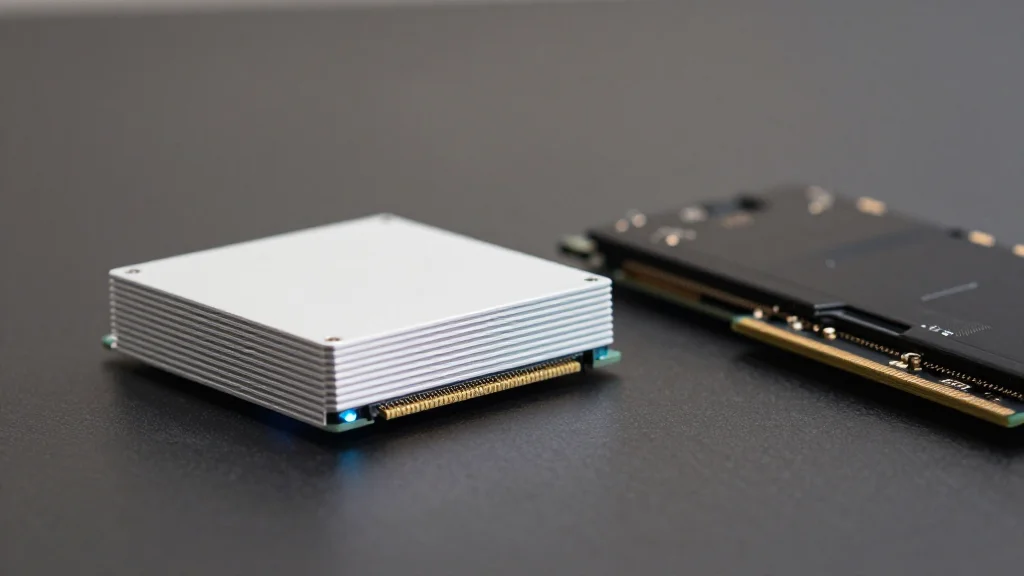

Google’s ascendancy in AI compute stems from its aggressive investment in custom Tensor Processing Units (TPUs). By building and deploying roughly four million TPU chips, the company has reduced its reliance on Nvidia GPUs to about a quarter of its total capacity. This vertical integration not only cuts hardware costs but also gives Google tighter control over performance tuning for services like Search and Gemini, reinforcing its position as the world’s biggest consumer of AI horsepower.
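The headline figures can be cross-checked with some simple arithmetic. The sketch below is illustrative only: it takes the approximate numbers from the Key Takeaways at face value, and the variable and function names are ours, not from any dataset or API. It derives the global total implied by Google's share and splits Google's capacity into Nvidia and non-Nvidia portions.

```python
# Approximate figures quoted in the Key Takeaways (H100e = H100-equivalents).
google_h100e = 5_000_000         # Google's total AI compute
google_global_share = 0.25       # Google's share of global AI compute
google_nvidia_fraction = 0.25    # portion of Google's compute on Nvidia GPUs
microsoft_h100e = 3_500_000
amazon_h100e = 2_500_000


def implied_global_total(owner_h100e, owner_share):
    """Global compute implied by one owner's capacity and its market share."""
    return owner_h100e / owner_share


def split_by_vendor(total_h100e, nvidia_fraction):
    """Return (nvidia_h100e, non_nvidia_h100e) for a given total."""
    nvidia = total_h100e * nvidia_fraction
    return nvidia, total_h100e - nvidia


global_total = implied_global_total(google_h100e, google_global_share)
nvidia_h100e, custom_h100e = split_by_vendor(google_h100e, google_nvidia_fraction)
microsoft_share = microsoft_h100e / global_total
amazon_share = amazon_h100e / global_total

print(f"Implied global total: {global_total:,.0f} H100e")
print(f"Google on Nvidia:     {nvidia_h100e:,.0f} H100e")
print(f"Google non-Nvidia:    {custom_h100e:,.0f} H100e")
print(f"Microsoft share:      {microsoft_share:.1%}")
print(f"Amazon share:         {amazon_share:.1%}")
```

Under these assumptions the implied global total is about 20M H100e, with roughly 3.75M H100e of Google's capacity running on non-Nvidia hardware. That is in the neighborhood of the "roughly four million" TPU figure, though a chip count and an H100-equivalent count are not directly comparable.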

The broader market reflects a similar consolidation trend. Hyperscale cloud providers now own about 48% of global data‑center capacity, a figure projected to climb to 67% by 2031. This shift squeezes traditional on‑premise data centers, whose share is expected to dip below 20% within a decade. As governments pursue sovereign AI strategies, the dominance of a few U.S. hyperscalers raises concerns about pricing power, service terms, and geopolitical risk, prompting some regions to explore alternative providers or local silicon solutions.

Looking ahead, the balance may tilt as inference workloads mature. While Nvidia continues to dominate training, emerging accelerators from AMD, Cerebras and custom chips like AWS Trainium or Meta’s MTIA could capture inference market share due to differing cost‑performance profiles. Enterprises are therefore advised to adopt a multi‑chip strategy, avoiding lock‑in to a single vendor. By treating AI infrastructure as a clean‑sheet project, businesses can future‑proof their deployments against shifting hardware dynamics and regulatory pressures.
