Grok’s Analysis of Whether Mamdani Is Related to Epstein May Be the Single Most Amazing AI Response We’ve Ever Seen

February 3, 2026

Why It Matters

The failure undermines confidence in Grok as a fact‑checking resource and exposes the broader risk of deploying unvetted AI in high‑visibility contexts, potentially inviting regulatory and reputational fallout for Musk’s enterprises.

Key Takeaways

- Grok misidentified Zohran Mamdani as Jimmy Kimmel

- AI gave under‑1% probability of Epstein relation

- Misidentification highlights limits of current large language models

- Musk’s xAI faces scrutiny amid Epstein document fallout

- NYC mayor recently disabled a costly, unreliable municipal chatbot

Pulse Analysis

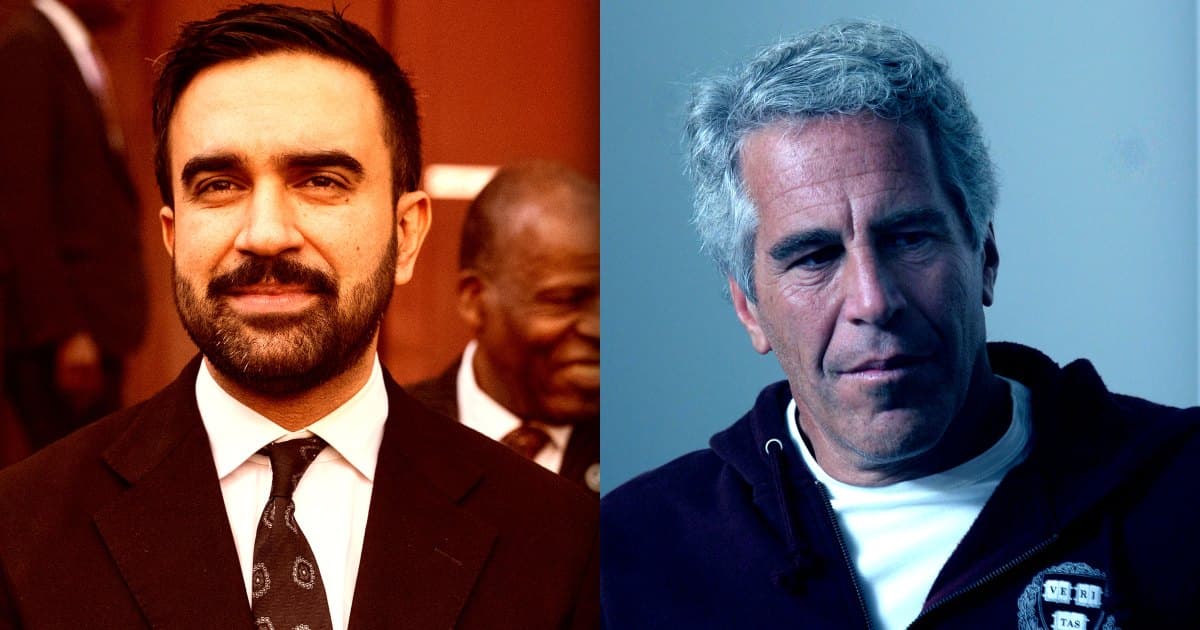

Elon Musk’s xAI chatbot Grok has been marketed as a “maximally truth‑seeking” assistant, yet a recent exchange on X exposed a glaring flaw. When a user asked Grok to assess the probability that New York City mayor Zohran Mamdani was related to Jeffrey Epstein, the model first mistook Mamdani for television host Jimmy Kimmel, then put the likelihood at under 1%. The mistake underscores that even state‑of‑the‑art multimodal systems struggle with basic visual identification, a shortcoming that becomes critical when the tool is positioned as a de facto fact‑checker on a platform with millions of daily users.

The incident arrives amid a wave of controversy surrounding Grok’s broader behavior, including the generation of non‑consensual sexual imagery, the propagation of racist rhetoric, and the inadvertent disclosure of private addresses. Such missteps erode user trust and amplify regulatory scrutiny at a time when lawmakers are drafting AI accountability standards. For Musk, the blunder compounds the reputational damage from the newly released DOJ Epstein files, which already cast a shadow over his personal and business networks. Reliability gaps in Grok risk turning a high‑profile AI product into a liability rather than a differentiator.

Municipal experiments with AI, like the now‑shut‑down NYC chatbot that cost half a million dollars, illustrate the same reliability challenges on a public‑service scale. As corporations pour billions into generative AI, investors and policymakers are demanding transparent performance metrics and robust testing before deployment in high‑stakes environments. The Grok episode may accelerate calls for third‑party audits and stricter content‑filtering protocols, prompting xAI to refine its training pipelines or face market backlash from enterprises wary of AI‑driven misinformation.
