HBM-on-GPU Set to Power the Next Revolution in AI Accelerators - and Just to Confirm, There's No Way This Will Come to Your Video Card Anytime Soon

December 10, 2025

Why It Matters

The breakthrough promises faster, denser AI accelerators for data centers, yet thermal constraints delay any consumer‑grade GPU rollout, reshaping hardware roadmaps across the semiconductor ecosystem.

Key Takeaways

- 3D HBM-on-GPU achieves record compute density.
- Peak GPU temperature reaches 141.7 °C without mitigation.
- Halving clock reduces temperature below 100 °C, slows training 28%.
- 3D design outperforms 2.5D baseline in throughput density.
- XTCO aligns tech and system scaling for AI data centers.

Pulse Analysis

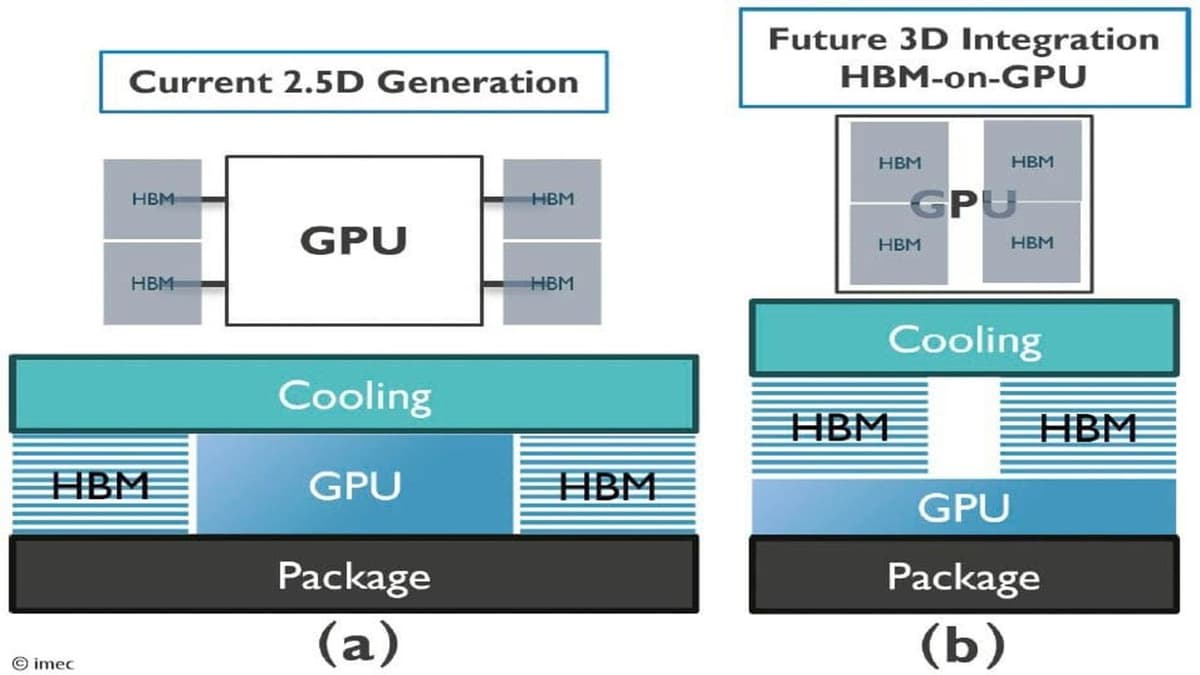

The 3D HBM‑on‑GPU concept pushes the envelope of silicon integration by vertically stacking memory directly on the processor die. Connecting the two through micro‑bumps eliminates the interposer footprint of 2.5D designs, delivering higher bandwidth per square millimeter and letting AI models reach larger datasets with reduced latency. Early simulations show a leap in compute density that could translate into faster inference and training cycles for large‑scale neural networks, positioning the technology as a potential cornerstone for next‑generation AI accelerators.

However, the thermal profile of the stacked architecture presents a formidable hurdle. Without mitigation, peak GPU temperature reaches 141.7 °C, far exceeding safe operating limits and risking reliability issues. Imec’s experiments show that halving the clock brings temperatures below 100 °C, but at the cost of 28% slower training. Designers must therefore balance raw throughput gains against cooling solutions such as double‑sided heat sinks or advanced silicon thermal pathways, a trade‑off that will shape system‑level engineering decisions for high‑performance compute clusters.
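One way to read the clock figures: halving the clock costs only 28% in training speed, which suggests that much of the training time does not scale with GPU clock. The sketch below is a back-of-the-envelope illustration, not Imec's model. It assumes a simple Amdahl-style split in which a fraction `c` of the baseline step time scales inversely with clock and the rest is clock-insensitive. The function name `clock_bound_fraction` and both interpretations of "28% slower" are assumptions made for illustration.

```python
def clock_bound_fraction(clock_scale: float, relative_time: float) -> float:
    """Fraction of baseline time that scales with clock (assumed model).

    Assumed model: T(s) = c / s + (1 - c), with T(1) = 1,
    where s is the clock relative to baseline.
    Solving for c gives c = (T - 1) / (1 / s - 1).
    """
    return (relative_time - 1.0) / (1.0 / clock_scale - 1.0)


clock_scale = 0.5  # clock halved, as reported in the article

# Interpretation A (assumption): training takes 28% longer.
time_a = 1.28
# Interpretation B (assumption): training throughput drops by 28%.
time_b = 1.0 / (1.0 - 0.28)

c_a = clock_bound_fraction(clock_scale, time_a)
c_b = clock_bound_fraction(clock_scale, time_b)

print(f"Clock-bound share if time grows 28%:      {c_a:.2f}")
print(f"Clock-bound share if throughput drops 28%: {c_b:.2f}")
```

Under either reading, this toy model puts well under half of the baseline training time in the clock-bound portion, which is consistent with the temperature drop being cheaper than a straight 50% performance loss.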

From an industry perspective, the technology aligns with Imec’s cross‑technology co‑optimization (XTCO) program, which seeks to synchronize device, package, and system innovations. Data‑center operators stand to benefit from the higher throughput density, especially as AI workloads continue to scale. Yet the thermal and power constraints suggest that mainstream consumer graphics cards are unlikely to adopt this architecture in the near term. Instead, early deployments will likely appear in specialized AI servers where power budgets and cooling infrastructure can be tightly controlled, signaling a gradual but impactful shift in the AI hardware landscape.
