HHS Is Making an AI Tool to Create Hypotheses About Vaccine Injury Claims

February 4, 2026

Why It Matters

The AI tool could reshape vaccine safety monitoring, but misuse could amplify misinformation and sway regulatory decisions. Rigorous human review is critical to preserving the credibility of public-health agencies.

Key Takeaways

- HHS is building an LLM to analyze VAERS reports

- The tool is still in development and not yet deployed

- Critics fear it could amplify anti-vaccine narratives

- VAERS data are unverified and prone to false alerts

- Human review is essential to validate AI-generated hypotheses

Pulse Analysis

The Department of Health and Human Services (HHS) is developing a generative-AI system that scans the Vaccine Adverse Event Reporting System (VAERS) and automatically generates hypotheses about possible vaccine-related injuries. The effort, listed in HHS's 2025 AI inventory, builds on earlier natural-language-processing projects used to flag patterns in the noisy, self-reported VAERS dataset. By using large language models, the agency hopes to speed up signal detection and reduce the manual labor required to sift through millions of reports.

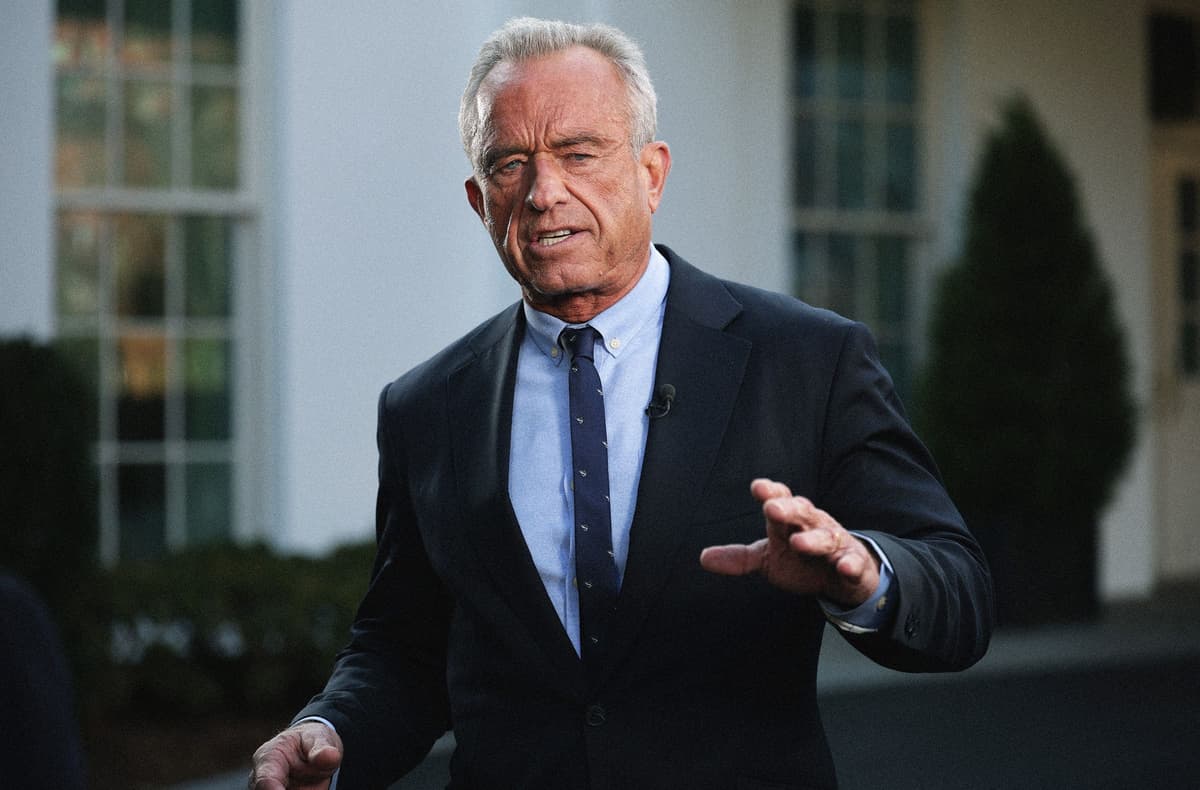

The disclosure has sparked immediate pushback from public-health experts and political observers, who warn that HHS Secretary Robert F. Kennedy Jr. could use the tool to reinforce his anti-vaccine agenda. VAERS reports are not verified and lack denominator data (how many people were actually vaccinated), which makes them easy to misinterpret. Large language models are also notorious for producing plausible-sounding but inaccurate conclusions, a risk that could generate false alerts and fuel misinformation. Critics therefore stress that epidemiologists must vet any AI-generated hypothesis before it influences policy or litigation.

If deployed responsibly, the AI platform could uncover rare safety signals that traditional analyses miss, much as VAERS previously helped flag rare clotting disorders and cases of myocarditis. The system's success, however, hinges on robust human oversight, interdisciplinary expertise, and integration with complementary data sources that supply vaccination rates. Given recent staffing cuts at the CDC, HHS will need to set aside resources for the follow-up investigations that any AI-generated hypothesis would require. Properly managed, the technology could modernize vaccine surveillance while preserving scientific rigor and public trust.
