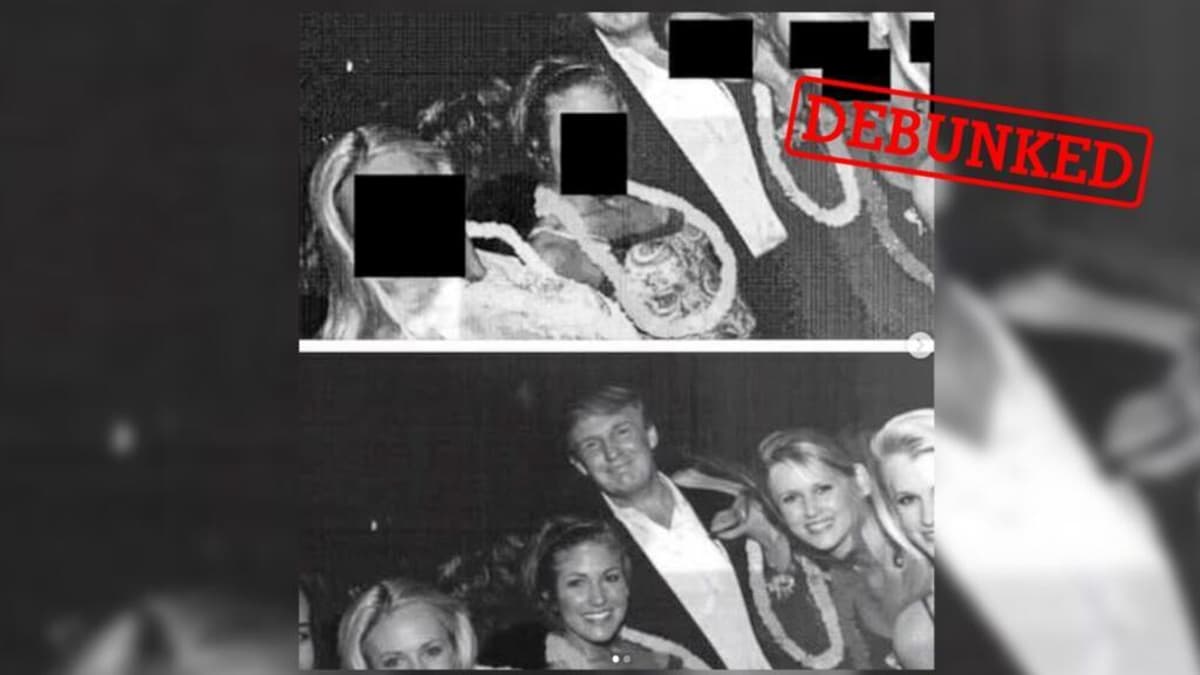

How a Real Photo of Donald Trump Linked to Jeffrey Epstein Was Doctored Using AI

December 24, 2025

Why It Matters

The episode underscores the growing risk of AI‑driven deepfakes shaping public perception and influencing political narratives, prompting calls for stronger verification standards.

Key Takeaways

- AI image shows deformed hand, indicating synthetic origin

- Google Nano Banana watermark tags image as AI‑generated

- Original photo featured adult models for Hawaiian Tropic

- AI “uncensoring” creates hallucinated faces, not real data

- Misinformation risk rises as deepfakes enter politics

Pulse Analysis

The proliferation of AI‑generated imagery is reshaping how political scandals are framed. In this case, a real 1990s photograph of Donald Trump at a Hawaiian Tropic event was altered by a generative model, which inserted a distorted hand and removed one of the six women. The presence of a watermark from Nano Banana, Google’s AI image model, provides a forensic clue that the circulating image passed through a generative tool rather than coming straight from a camera. Such technical fingerprints are becoming essential tools for journalists and fact‑checkers seeking to separate authentic documentation from fabricated content.

Beyond the technical details, the episode illustrates a broader strategic use of deepfakes in partisan battles. Trump supporters used the AI‑altered picture to argue that Democrats were manipulating visual evidence to suggest illicit behavior, while opponents pointed to the manipulation as proof of the dangers inherent in AI‑enhanced “uncensoring.” This tug‑of‑war over visual truth amplifies public distrust and complicates the media’s role as an arbiter of fact. As AI models become more accessible, false imagery will be produced and disseminated faster than traditional verification methods can handle unless new standards and automated detection pipelines are adopted.

For businesses, policymakers, and media organizations, the lesson is clear: investing in AI‑aware verification infrastructure is no longer optional. Tools that detect invisible watermarks, analyze anatomical inconsistencies, and cross‑reference reverse‑image searches can mitigate the spread of deceptive content. Moreover, transparent labeling of AI‑generated media can help preserve credibility in an environment where visual manipulation is increasingly sophisticated. The Trump‑Epstein photo saga serves as a cautionary tale, reminding stakeholders that the line between authentic documentation and fabricated narrative is rapidly blurring, demanding proactive governance and public education.
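One of the checks mentioned above, cross‑referencing a circulating image against a known original, can be illustrated with a perceptual hash. The following is a minimal sketch of the generic "average hash" technique, not the method used by the fact‑checkers in this story. It assumes both images have already been decoded and downscaled to a small grayscale grid (for example 8x8), and the function names and the distance threshold are hypothetical choices made for illustration. It does not detect invisible watermarks or anatomical errors.

```python
from typing import List

# Assumption: each image is already a small grayscale grid (values 0-255),
# e.g. downscaled to 8x8 by an image library before reaching this code.
Grid = List[List[int]]


def average_hash(pixels: Grid) -> int:
    """Build a bit string: 1 where a pixel is brighter than the grid's mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def likely_same_source(a: Grid, b: Grid, max_distance: int = 10) -> bool:
    """Hypothetical helper: treat a small hash distance as 'probably derived
    from the same photo'. The threshold of 10 is an illustrative guess."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance
```

A small distance suggests that a viral image is an edited copy of an archived photo, which tells a reviewer where to look for the differences. It is only a first filter: a match does not show what was changed, and heavy cropping or regeneration can defeat it.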
