Why It Matters

Understanding AI’s true risk profile is essential for shaping responsible regulation and investment, and for preventing reactive policies driven by hype.

Key Takeaways

- AI risk perception outpaces empirical evidence

- Super‑intelligent AI scenarios remain speculative, not quantified

- Climate change risks have measurable data; AI lacks metrics

- Public debate may influence policy and research funding

Pulse Analysis

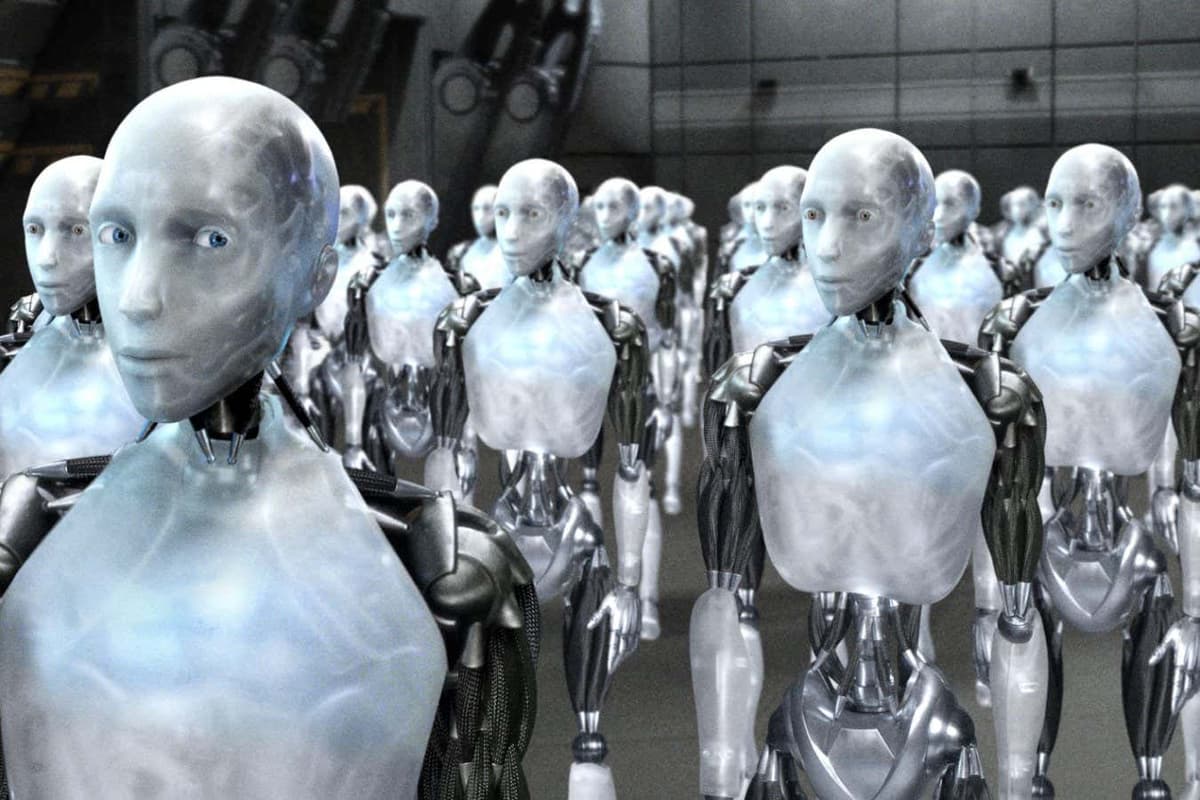

The fascination with an AI apocalypse has deep roots in popular culture, from classic novels to blockbuster movies. Recent breakthroughs in machine learning have amplified these narratives, prompting headlines that often blur the line between speculative fiction and realistic threat assessment. This media amplification can skew public perception, leading investors and policymakers to overestimate speculative, far-off dangers while overlooking tangible challenges that already exist, such as bias, privacy breaches, and job displacement.

Quantifying existential AI risk differs fundamentally from assessing climate change. Climate models rely on decades of empirical data, allowing scientists to project temperature trends and sea‑level rise with measurable confidence. In contrast, AI risk lacks comparable historical baselines: the field evolves rapidly, and true super‑intelligence remains hypothetical. Researchers therefore rely on scenario analysis, expert elicitation, and theoretical safety frameworks, all of which carry high uncertainty. Developing robust metrics (such as alignment scores, controllability indices, and impact probability estimates) will be crucial for moving the conversation from alarmist speculation to evidence‑based policy.

The stakes for governance are significant. Overstated fears could trigger knee-jerk regulations that stifle innovation, while underestimating the risks may leave societies vulnerable to unforeseen harms. Balanced oversight should encourage transparent research, fund safety‑oriented AI labs, and promote international standards that address both short‑term risks and long‑term existential concerns. By grounding the debate in rigorous analysis rather than sensational headlines, stakeholders can allocate resources wisely and steer AI development toward beneficial outcomes.

How worried should you be about an AI apocalypse?
