Huawei, DeepSeek Strengthen China’s AI Self-Reliance with Collaboration on V4 Model

Why It Matters

The joint effort accelerates China’s push for AI self‑reliance, reduces dependence on U.S. semiconductors, and positions domestic hardware for the inference market, where demand is projected to outstrip training demand by 2030.

Key Takeaways

- Huawei's Ascend 950PR and 950DT chips support DeepSeek V4 at launch

- Full Ascend SuperNode line adapted for V4 inference out of the box

- Collaboration boosts China's AI self‑reliance amid U.S. export restrictions

- Inference demand projected to outpace training demand by 2030, per McKinsey

- DeepSeek V4 throughput issues expected to be resolved once the 950PR ships at scale

Pulse Analysis

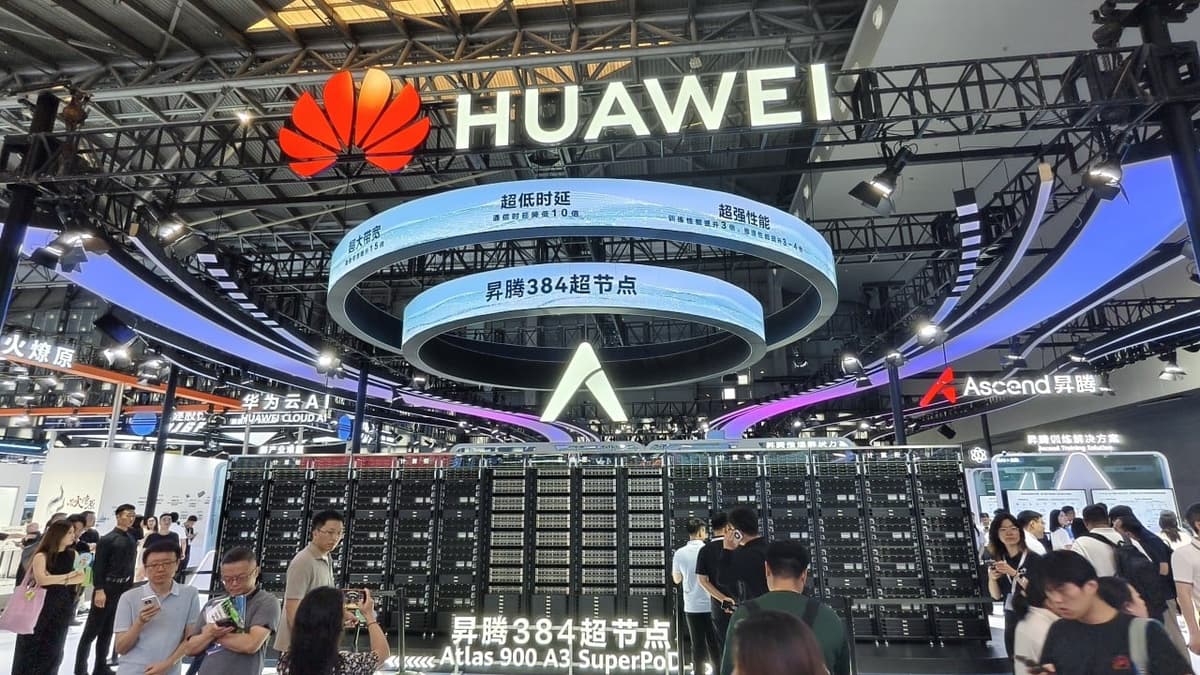

The integration of Huawei’s Ascend 950PR and 950DT chips with DeepSeek’s V4 model represents a rare "day‑zero" rollout, in which hardware and software are aligned before the model’s public release. Engineers showcased how Huawei’s Compute Architecture for Neural Networks (CANN), the company’s counterpart to Nvidia’s CUDA, enables V4 inference on the Ascend SuperNode platform. This tight coupling shortens time‑to‑market for Chinese AI solutions and suggests that domestic toolchains are mature enough to serve cutting‑edge large language models on homegrown hardware.

Amid escalating U.S. export controls that limit China’s access to advanced semiconductors, the collaboration underscores Beijing’s strategic emphasis on AI self‑reliance. While training of massive models still leans on imported chips, the inference phase—where models serve real‑world queries—offers a more attainable target for domestic hardware. McKinsey projects global inference demand to surpass training demand by 2030, creating a lucrative niche for locally produced accelerators. By adapting its entire Ascend SuperNode line for DeepSeek V4, Huawei positions itself to capture a share of this emerging market and mitigate supply‑chain vulnerabilities.

Looking ahead, Huawei plans to ship the 950PR and 950DT chips at scale by year‑end, coinciding with DeepSeek’s roadmap to resolve V4 throughput bottlenecks in the second half of the year. This timing could shift a segment of AI workloads away from incumbent players like Nvidia, especially in Chinese data centers prioritizing homegrown solutions. As inference workloads grow, the performance‑per‑watt and cost efficiencies of Ascend chips will be closely watched, potentially reshaping competitive dynamics in the global AI accelerator arena.
