I Tested Gemini 3, ChatGPT 5.1, and Claude Sonnet 4.5 – and Gemini Crushed It in a Real Coding Task

Why It Matters

The test highlights Gemini 3 Pro’s advanced code‑generation and iterative reasoning, suggesting it could accelerate low‑code development and give Google a competitive edge in AI‑assisted software creation.

Summary

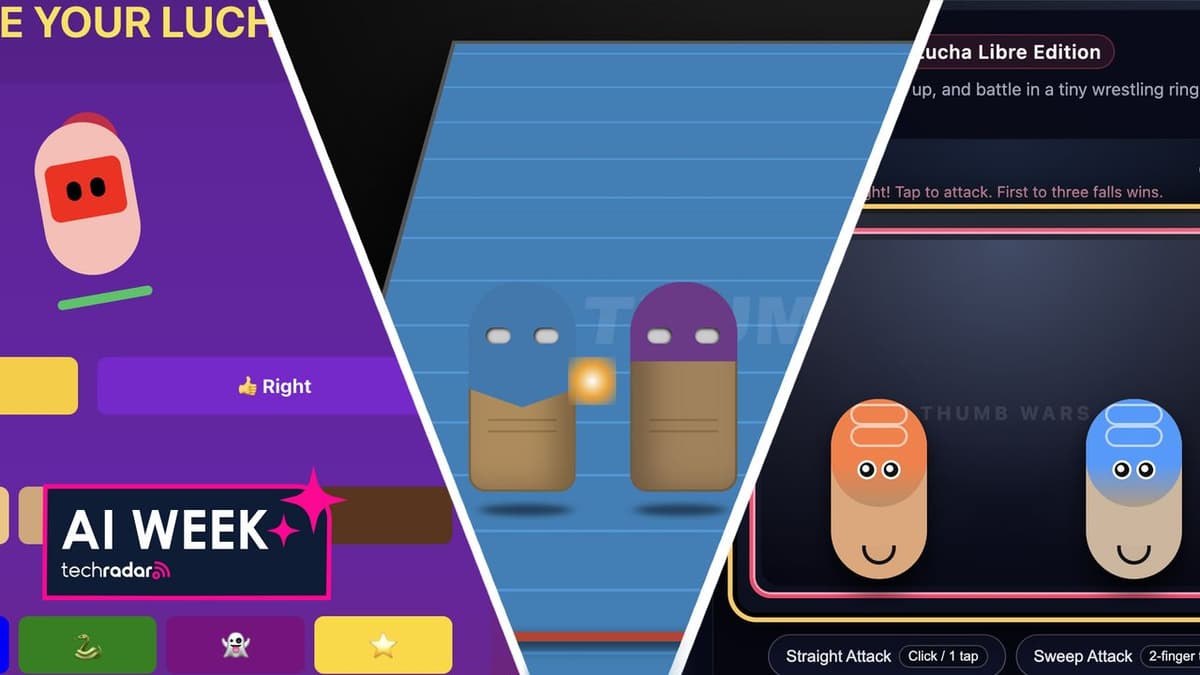

Google unveiled Gemini 3 Pro, and the author put it to the test by prompting it to build a web‑based Thumb Wars game. Gemini generated functional HTML/CSS/JS code in seconds, iteratively adding features such as customizable masks, 3‑D ring perspective, keyboard controls, and depth‑based hit detection, producing a playable demo that outperformed comparable attempts with OpenAI's ChatGPT 5.1 and Anthropic's Claude Sonnet 4.5. While ChatGPT and Claude also produced prototypes, they lagged in speed, feature completeness, and adaptability, requiring more detailed prompts and still lacking robust controls. The author concludes that Gemini 3 Pro demonstrated superior “vibe‑coding” capabilities, delivering a near‑complete game with minimal guidance.
