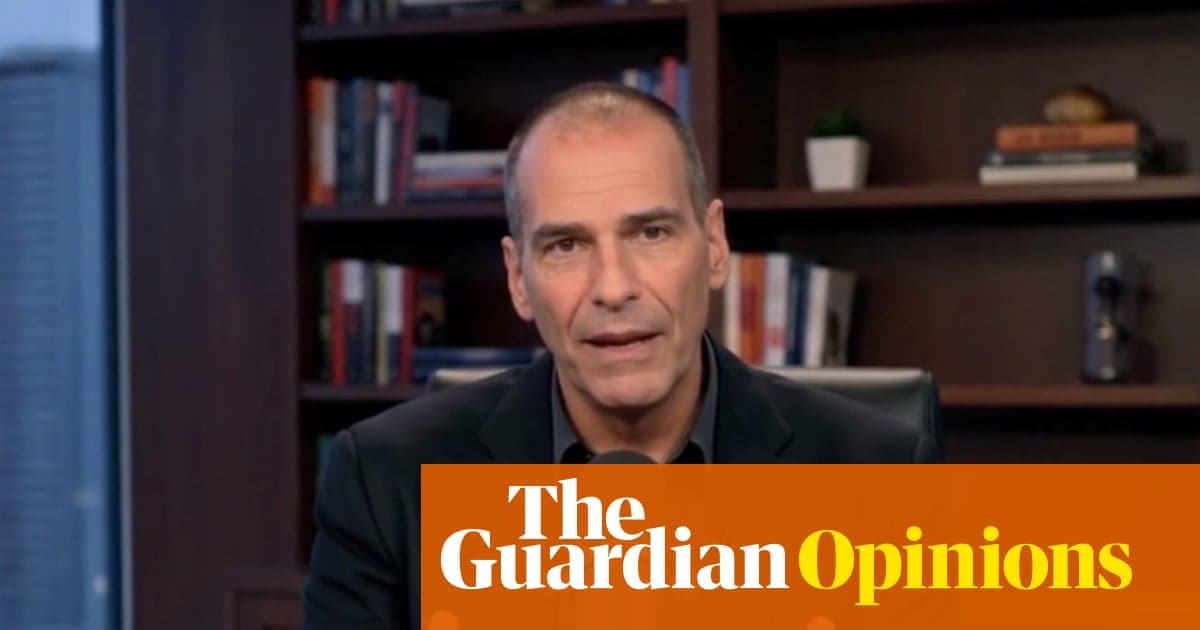

I’m Watching Myself on YouTube Saying Things I Would Never Say. This Is the Deepfake Menace We Must Confront | Yanis Varoufakis

January 5, 2026

Why It Matters

Deepfakes erode trust in digital discourse, threatening democratic debate and corporate reputations while exposing the power imbalance between individuals and platform owners.

Key Takeaways

- Deepfake videos of Varoufakis proliferate across YouTube.
- Platforms delete videos, but copies reappear under new guises.
- Deepfakes expose technofeudal ownership of personal likeness.
- Authenticity crisis threatens democratic deliberation and trust.
- Potential shift to argument‑based judgment over speaker identity.

Pulse Analysis

The surge of AI‑generated deepfakes featuring public figures like Yanis Varoufakis underscores a new frontier of digital risk. While platforms such as YouTube and Meta claim to enforce content policies, the rapid re‑upload of altered videos demonstrates the limits of reactive takedowns. For businesses, the spread of synthetic media can dilute brand integrity, create legal exposure, and amplify misinformation that skews market sentiment. Understanding the mechanics of generative models and the incentives that drive their misuse is now a core component of corporate risk management.

Varoufakis labels this environment "technofeudalism," where cloud infrastructure and algorithmic control function as modern fiefs. In this model, personal data—including facial and vocal signatures—becomes a rent‑seeking asset owned by platform lords. The erosion of authentic identity not only threatens individual agency but also destabilizes democratic processes that rely on credible sources. By conflating truth with platform‑verified signals, deepfakes amplify the asymmetry between content creators and the owners of the digital agora, turning discourse into a commodity rather than a public good.

The challenge invites proactive solutions: robust verification standards, decentralized identity frameworks, and regulatory mandates for watermarking AI‑generated media. Encouraging audiences to evaluate arguments on merit, as the ancient Athenian principle of isegoria (the equal right to speak) suggested, could mitigate the persuasive power of fabricated personas. For policymakers and tech firms, establishing transparent provenance trails and liability rules will be essential to preserve trust, protect reputations, and ensure that the digital public sphere remains a venue for genuine debate rather than a hall of mirrors filled with synthetic voices.
