Japan's Lower House Election Becomes a Testing Ground for Generative AI Misinformation

February 7, 2026

Why It Matters

The surge of AI‑fabricated content erodes voter trust and undermines election integrity, signaling a global challenge for democratic institutions.

Key Takeaways

- AI‑generated videos proliferated on YouTube and TikTok during the election.

- 51.5% of surveyed voters believed fake news to be true.

- AI tools mislabeled authentic rally footage as synthetic.

- The liar’s dividend lets politicians dismiss genuine evidence.

- Conservative groups abroad have also weaponized AI in campaigns.

Pulse Analysis

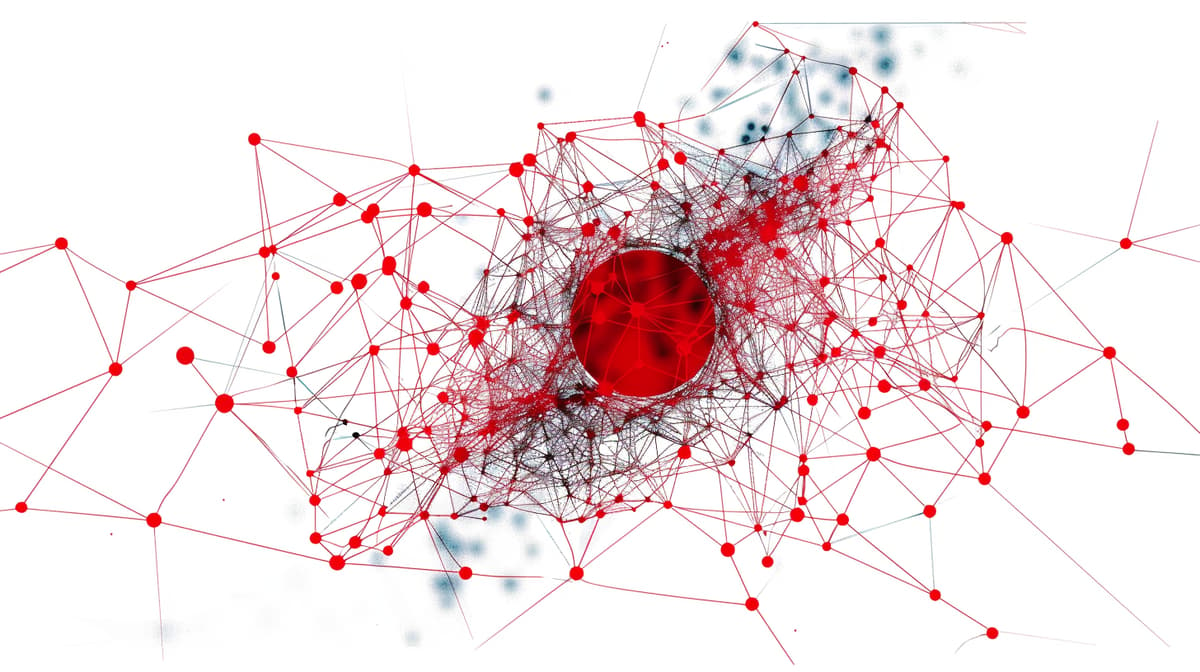

The Japanese lower‑house race illustrates how generative AI can amplify misinformation at unprecedented speed. Social platforms reward high‑engagement content, allowing AI‑crafted videos—such as a fabricated montage of party leaders with a communist‑style logo—to go viral within hours. The Japan Times’ survey, showing over half of respondents mistaking fake news for fact, underscores a widening credibility gap that threatens informed voting and democratic legitimacy.

Technical safeguards are struggling to keep pace. The Grok chatbot, used here as a detection tool, flagged a genuine rally video as AI‑generated, exposing a false‑positive problem that political actors can weaponize. This feeds the so‑called liar’s dividend, in which the mere existence of AI‑fabricated media gives candidates an excuse to discredit authentic evidence, further muddying the factual landscape and complicating fact‑checking efforts.

Globally, the Japanese experience foreshadows a broader trend of AI misuse in elections. Conservative groups in the United States and Europe have already deployed AI‑generated content, sometimes openly, to sway public opinion. Policymakers must consider stricter platform accountability, transparent labeling standards, and robust media‑literacy programs to mitigate the erosion of trust. As AI tools become more accessible, the onus falls on regulators, tech firms, and civil society to safeguard the integrity of democratic processes before misinformation becomes the norm.
