Liquid Cooling vs Air Cooling: The Five Key Differences for Data Centers

January 11, 2026

Why It Matters

Improved cooling efficiency directly reduces operational costs and carbon footprints, giving AI‑intensive data centers a strategic edge. Faster adoption accelerates industry sustainability goals while supporting higher compute density in limited footprints.

Key Takeaways

- Liquid cooling can cut power consumption by up to 40%.

- Water removes heat 3,000 times more efficiently than air.

- Enables up to 98% heat removal, supporting higher compute density.

- Achieves PUE as low as 1.04, boosting sustainability.

- Hybrid air‑water designs simplify maintenance and adoption.

Pulse Analysis

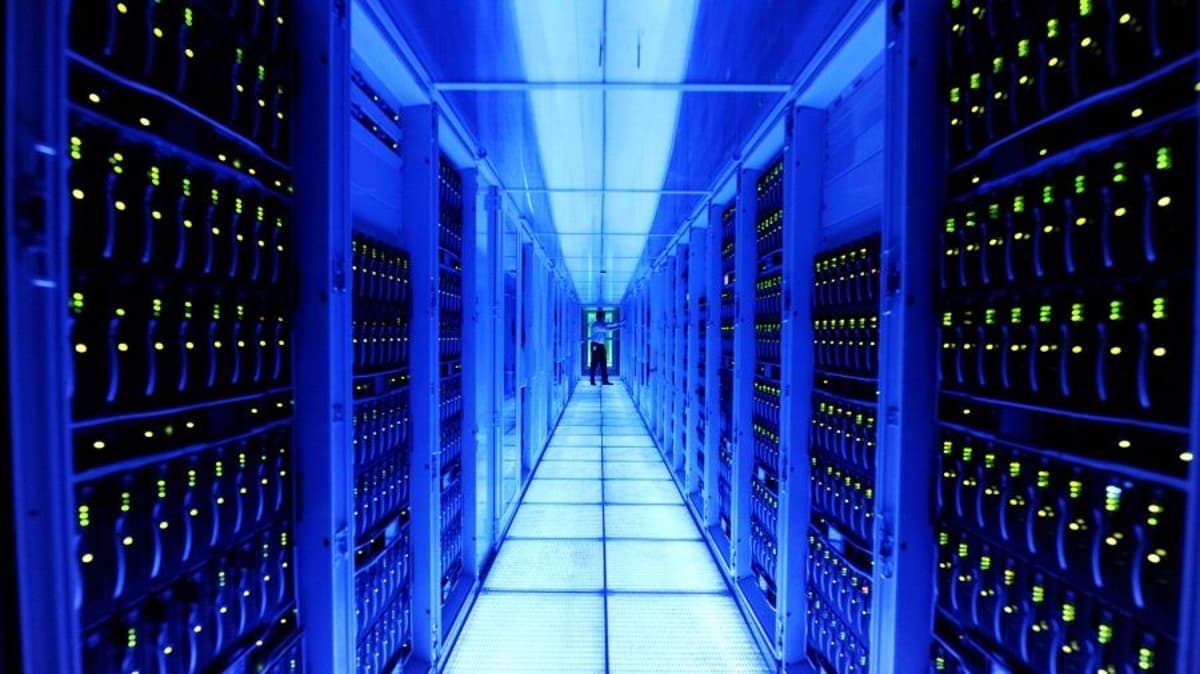

The surge in AI, high‑performance computing and cloud services is pushing data‑center power consumption toward unprecedented levels. Air cooling has been the industry standard, but it depends on large fans and on air's low thermal conductivity, which makes it increasingly inefficient as rack densities climb beyond 70 kW per rack. Liquid cooling instead uses water, which removes heat over 3,000 times more efficiently than air, to sharply lower the energy required for temperature control. That translates into up to a 40% reduction in overall power draw.

Beyond raw efficiency, liquid‑cooling architectures deliver tangible sustainability benefits. Closed‑loop systems capture warm water that can be redirected to heat office buildings or district‑level heating networks, effectively turning waste into a resource. This approach drives Power Usage Effectiveness (PUE) scores toward the theoretical optimum of 1.0, with leading installations reporting values as low as 1.04. By cutting both electricity and water consumption, operators meet tightening ESG mandates while lowering operating expenses.
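To put the 1.04 figure in concrete terms: PUE is conventionally defined as total facility power divided by the power delivered to IT equipment, so a PUE of 1.04 implies roughly 4% overhead on top of the IT load. The sketch below works through that arithmetic. The 1,000 kW IT load and the 1.5 comparison PUE are illustrative assumptions, not figures reported here, and the function names are hypothetical.

```python
def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


def overhead_kw(pue_value: float, it_load_kw: float) -> float:
    """Non-IT power (cooling, power distribution, lighting) implied by a PUE."""
    return (pue_value - 1.0) * it_load_kw


# Hypothetical inputs, for illustration only.
IT_LOAD_KW = 1000.0
for label, value in [("reported best case", 1.04), ("assumed comparison", 1.5)]:
    extra = overhead_kw(value, IT_LOAD_KW)
    print(f"{label}: PUE {value:.2f} -> {extra:.0f} kW of non-IT overhead")
```

Under these assumed inputs, the gap between the two overhead figures is the power that a facility at the lower PUE would no longer spend on cooling and other non-IT loads.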

Looking ahead, the scalability of liquid cooling positions it as a cornerstone of future‑ready data centers. Higher compute density enables more AI and HPC workloads per square foot, easing real‑estate constraints. Modern hybrid solutions blend air and water pathways, reducing the specialized expertise traditionally required for liquid‑only setups and simplifying maintenance. As investors and hyperscale providers prioritize energy‑efficient infrastructure, liquid cooling is poised to become the default cooling paradigm for the next generation of high‑performance data centers.
