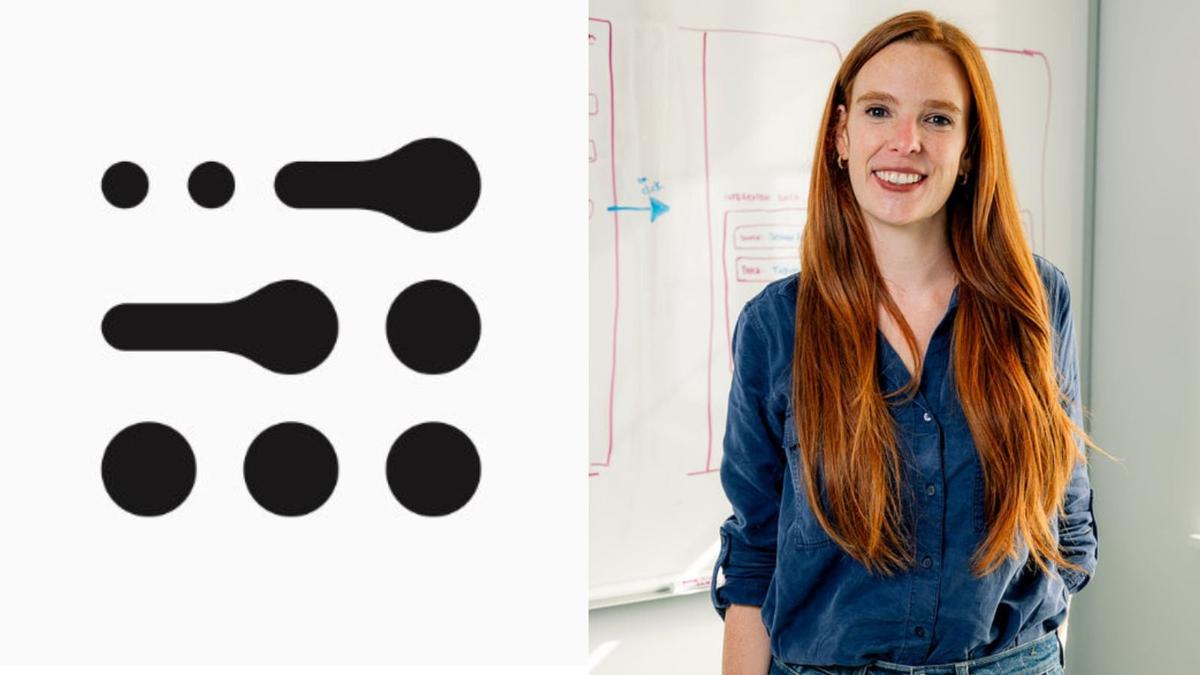

‘Making Models Bigger Won’t Get Us Far, AI Must Adapt Like Humans’: Adaption Labs CEO Sara Hooker

February 20, 2026

Why It Matters

The shift from brute‑force scaling to adaptive learning could reshape AI economics, lowering barriers for smaller players and accelerating real‑world applicability. It signals a strategic pivot for enterprises and governments investing in AI infrastructure.

Key Takeaways

- Scaling up models now yields diminishing performance gains.

- Adaptive, continual learning reduces compute costs.

- RL post‑training offers higher ROI than pre‑training.

- Infrastructure remains crucial for a diverse AI innovation ecosystem.

Pulse Analysis

The AI community has long equated progress with larger models, but recent evidence suggests that the era of exponential scaling is waning. Sara Hooker, CEO of Adaption Labs, argues that transformer architectures are approaching a performance ceiling, so each additional GPU buys less improvement. This is prompting a re‑evaluation of research priorities, with growing emphasis on algorithms that let models learn continuously from new data rather than relying on massive pre‑training runs. By focusing on adaptive mechanisms, firms could achieve comparable or better outcomes while substantially cutting energy consumption and hardware costs.

Post‑training reinforcement learning (RL) and test‑time scaling are emerging as the most promising avenues for extracting value from existing models. Unlike traditional pre‑training, RL fine‑tunes behavior using task‑specific feedback, delivering higher returns on compute investment. Gradient‑free adjustment techniques further simplify deployment, allowing developers to modify model outputs without costly retraining cycles. These methods democratize AI development, enabling startups and research labs with limited GPU budgets to compete with industry giants, thereby fostering a more diverse innovation landscape.
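To make the idea of test-time scaling concrete, here is a minimal sketch of best-of-N sampling, one common gradient-free way to spend extra compute at inference instead of retraining. It is an illustration only, not a method described by Hooker or Adaption Labs: `toy_generate` and `toy_score` are hypothetical stand-ins for a real model and a real reward function.

```python
import random
from typing import Callable, Tuple


def best_of_n(
    generate: Callable[[str, random.Random], int],
    score: Callable[[str, int], float],
    prompt: str,
    n: int,
    seed: int = 0,
) -> Tuple[float, int]:
    """Sample n candidates and return (score, candidate) for the best one.

    No model weights are updated: quality improves only by sampling more
    candidates and keeping the highest-scoring one.
    """
    rng = random.Random(seed)
    candidates = [generate(prompt, rng) for _ in range(n)]
    scored = [(score(prompt, c), c) for c in candidates]
    return max(scored, key=lambda pair: pair[0])


# Hypothetical stand-ins (assumptions for illustration only).
def toy_generate(prompt: str, rng: random.Random) -> int:
    # Pretend the "model" guesses an integer answer at random.
    return rng.randint(0, 100)


def toy_score(prompt: str, candidate: int) -> float:
    # Pretend the "reward" prefers answers close to 42.
    return -abs(candidate - 42)


if __name__ == "__main__":
    for n in (1, 4, 16):
        best_score, best_candidate = best_of_n(
            toy_generate, toy_score, "toy prompt", n
        )
        print(n, best_candidate, best_score)
```

Because the seed is fixed, the candidates drawn for a small `n` are a prefix of those drawn for a larger `n`, so the best score can only stay the same or improve as `n` grows. In this toy setup, that is the whole trade: more inference compute for better output, with no retraining.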

Infrastructure considerations remain pivotal despite the architectural shift. Data‑center investments, especially in regions like India, provide the backbone for both traditional and next‑generation AI workloads. While future models may diverge from classic transformer designs, the physical hardware—servers, networking, and cooling—will continue to support a broad spectrum of AI applications. Consequently, policymakers and investors should view AI‑specific infrastructure as a long‑term strategic asset that underpins both current scaling efforts and the forthcoming wave of adaptive, continual‑learning systems.
