Physical Intelligence Shows Robot Model with LLM-Like Generalization, Flaws Included

Companies Mentioned

Why It Matters

π0.7 demonstrates that robot foundation models can achieve multi‑task competence with a single architecture, lowering data collection costs and accelerating deployment across diverse hardware. Its reliance on contextual metadata reshapes how the robotics community evaluates generalization versus memorization.

Key Takeaways

- •π0.7 merges 4B‑parameter Gemma3 with 860M action expert

- •Rich metadata lets model learn from low‑quality demonstrations

- •Single model matches specialist performance on three distinct tasks

- •80% zero‑shot folding success on UR5e without task data

- •Debate persists on true compositional generalization versus data remix

Pulse Analysis

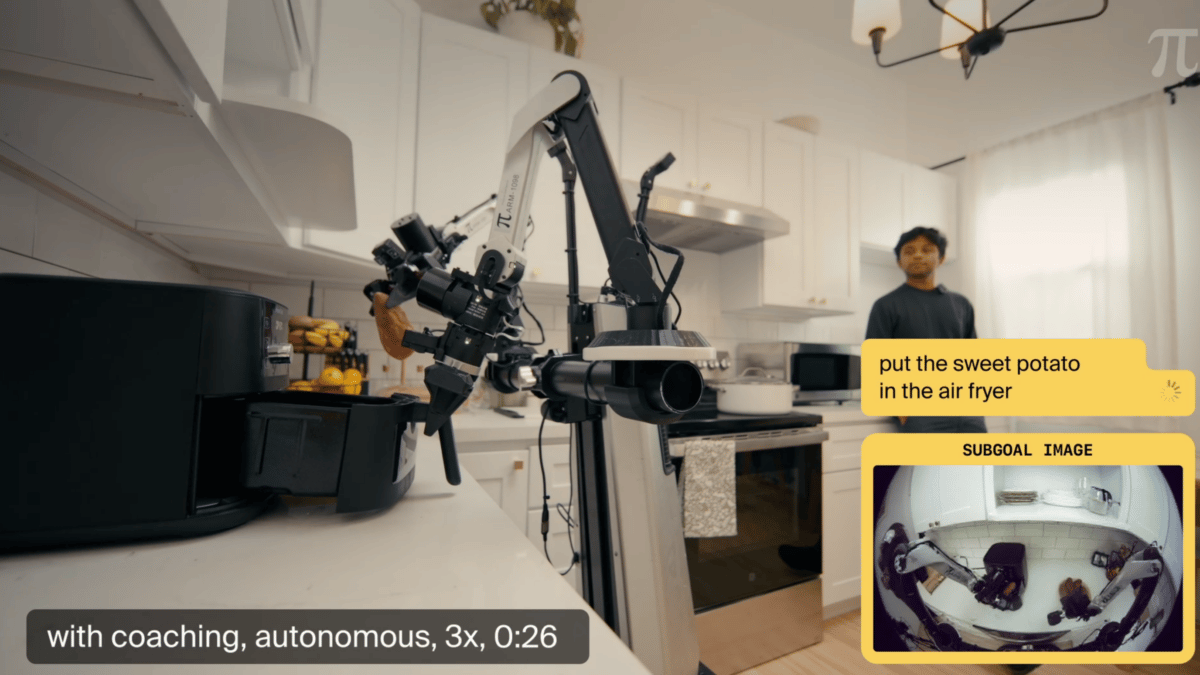

The launch of Physical Intelligence’s π0.7 marks a turning point for robot learning, echoing the trajectory of large language models. By pairing a 4 billion‑parameter Gemma3 backbone with a lightweight 860 million‑parameter motion expert, the system can ingest a spectrum of signals—natural‑language instructions, quality tags, control mode labels, and even subgoal images generated on‑the‑fly. This multimodal recipe enables the model to absorb imperfect demonstrations, turning failed or slow attempts into valuable training signals rather than discarding them. The result is a foundation model that can be fine‑tuned once and then applied across disparate tasks and hardware, dramatically reducing the data‑collection overhead that has long hampered robotic deployment.

From a business perspective, π0.7’s ability to replace multiple specialist models with a single generalist offers clear cost efficiencies. Companies can now train one model to handle laundry folding, espresso preparation, and box assembly, and even achieve an 80% success rate on a new UR5e manipulator without any task‑specific data. This cross‑embodiment transfer lowers the barrier to entry for manufacturers seeking to automate varied processes, potentially accelerating adoption in sectors ranging from consumer goods to logistics. Moreover, the model’s reliance on contextual prompts mirrors the prompt‑engineering practices that have become standard in AI, suggesting that robotics teams will soon need similar expertise to extract optimal performance.

However, the debate over genuine compositional generalization remains unresolved. Critics point out that π0.7’s success on the air‑fryer task may stem from near‑duplicate examples in its training set, raising questions akin to data‑contamination concerns in language‑model evaluation. The authors acknowledge this ambiguity, emphasizing that whether a skill emerges from true abstraction or clever remixing may be less important than the practical outcome. As the field advances, robust benchmarks that can disentangle these phenomena will be essential, and future iterations may incorporate reasoning modules to enable robots to plan ahead rather than rely solely on pattern recombination.

Physical Intelligence shows robot model with LLM-like generalization, flaws included

Comments

Want to join the conversation?

Loading comments...