Runway’s New Gen-4.5 Text-to-Video Model Beats OpenAI’s Sora 2, Google’s Veo 3

December 4, 2025

Why It Matters

Gen‑4.5 raises the performance ceiling for AI‑generated video, giving creators higher fidelity tools and pressuring rivals to accelerate their own research. Its broad accessibility could accelerate adoption of text‑to‑video workflows in media and advertising.

Key Takeaways

- Gen‑4.5 tops Sora 2 and Veo 3 in benchmarks.

- Elo score 1247, beating Veo 3 (1226) and Sora 2 (1206).

- Handles cinematic and photorealistic styles with fluid motion.

- Trained on Nvidia Blackwell and Hopper GPUs.

- Struggles with causality and object permanence.

Pulse Analysis

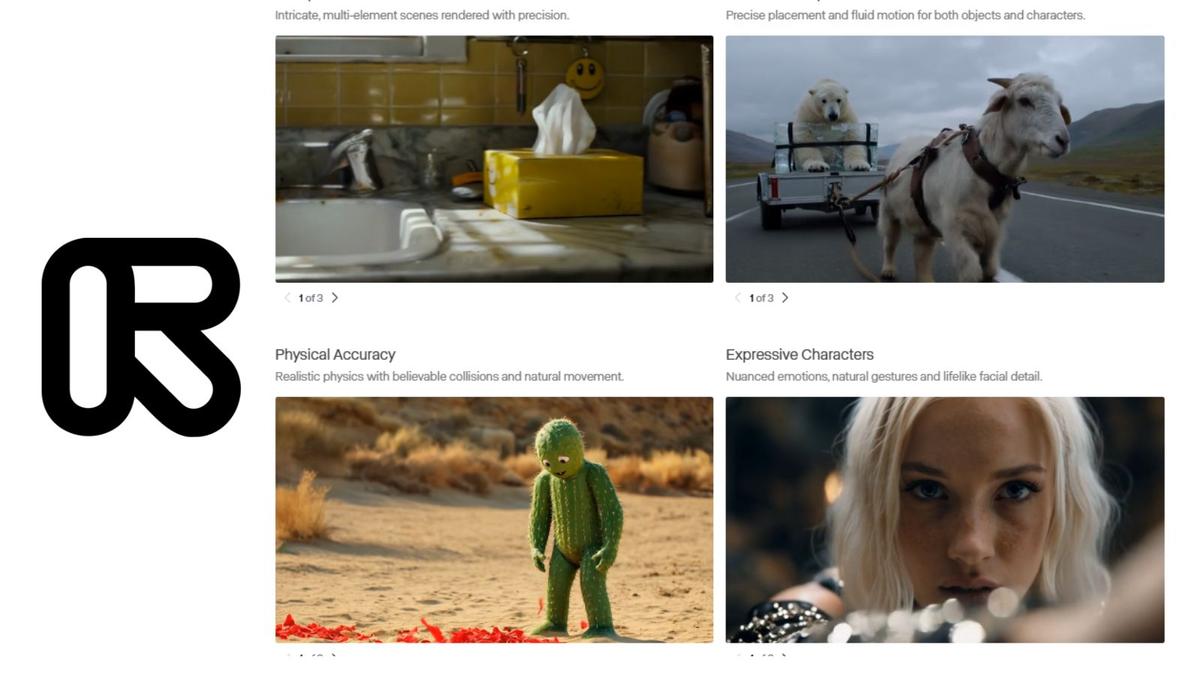

The rapid evolution of text‑to‑video AI has moved from experimental demos to production‑ready tools, and Runway’s Gen‑4.5 exemplifies this shift. Leveraging massive pre‑training datasets and novel post‑training techniques, the model delivers unprecedented visual fidelity, capturing subtle details like hair strands and material weaves that stay consistent across frames. By partnering with Nvidia and exploiting the latest Blackwell and Hopper architectures, Runway achieved higher data efficiency without sacrificing inference speed, positioning Gen‑4.5 as a practical solution for creators who need quick turnaround.

Benchmark results underscore Gen‑4.5’s competitive edge. Scoring 1247 on the Elo scale, it narrowly outperformed Google’s Veo 3 and OpenAI’s Sora 2 Pro, a small but measurable lead in realism and motion accuracy. The model’s rendering of fluid dynamics and its continuity across frames set a new standard, even as it grapples with known AI pitfalls such as causality errors and success bias. These limitations highlight ongoing research priorities for building more reliable world models.
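To put the Elo gap in perspective, the sketch below converts a rating difference into an expected head-to-head preference rate. It assumes the standard logistic Elo formula with a 400-point scale; the article does not say how the benchmark computes its scores, so the function name `expected_win_rate` and the scale are illustrative assumptions, not the leaderboard's actual method.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of pairwise comparisons won by A over B.

    Assumes the standard Elo logistic curve; the benchmark cited in the
    article may use a different scale or rating model.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Scores as reported in the article.
gen45, veo3, sora2 = 1247, 1226, 1206

vs_veo3 = expected_win_rate(gen45, veo3)
vs_sora2 = expected_win_rate(gen45, sora2)

print(f"Gen-4.5 vs Veo 3:  {vs_veo3:.1%}")
print(f"Gen-4.5 vs Sora 2: {vs_sora2:.1%}")
```

Under these assumptions, a 21-point lead corresponds to being preferred in roughly 53% of pairwise comparisons and a 41-point lead to roughly 56%, which fits the description of a narrow win.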

From a market perspective, Runway’s decision to bundle Gen‑4.5 across all subscription plans democratizes access to high‑end video generation, potentially reshaping content pipelines in advertising, entertainment, and e‑learning. Competitors will need to respond with comparable quality or differentiated features, accelerating innovation across the sector. As enterprises experiment with AI‑driven video at scale, the balance between creative freedom, technical robustness, and ethical safeguards will define the next wave of adoption.
