Sakana AI Introduces Doc-to-LoRA and Text-to-LoRA: Hypernetworks that Instantly Internalize Long Contexts and Adapt LLMs via Zero-Shot Natural Language

February 27, 2026


Why It Matters

By amortizing adaptation costs, these hypernetworks make real‑time, long‑context LLM customization feasible for enterprises, cutting memory footprints and latency dramatically. The approach opens new pathways for cross‑modal AI without costly retraining.

Key Takeaways

- Hypernetworks generate LoRA adapters in a single forward pass

- T2L creates task adapters from natural language descriptions

- D2L compresses 128K-token documents into under 50 MB of memory

- Sub-second adaptation replaces minutes-long context distillation

- Enables zero-shot visual knowledge transfer to text-only LLMs

Pulse Analysis

The rapid adoption of large language models has exposed a fundamental trade-off: in-context learning offers flexibility but incurs attention costs that grow quadratically with context length, while context distillation and supervised fine-tuning demand heavy compute and lengthy retraining cycles. Sakana AI's hypernetwork strategy sidesteps this dilemma by front-loading the learning effort. A single meta-training phase equips a lightweight network to synthesize LoRA matrices on demand, so adapting the model to a new task or document no longer requires a separate fine-tuning or distillation run, only one forward pass through the hypernetwork.
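To make the idea of synthesizing LoRA matrices in one forward pass concrete, here is a minimal NumPy sketch. It is not Sakana AI's architecture: the two-layer network, the function names (`make_hypernetwork`, `generate_lora`, `adapted_forward`), the layer sizes, and the `alpha` scaling value are all assumptions chosen for illustration. It only shows the general pattern of a small network emitting the low-rank factors A and B, which are then added to a frozen weight as W + (alpha / r) · B A.

```python
import numpy as np


def make_hypernetwork(embed_dim, hidden_dim, d_in, d_out, rank, seed=0):
    """Randomly initialise a toy two-layer hypernetwork (hypothetical shapes)."""
    rng = np.random.default_rng(seed)
    return {
        "w1": rng.normal(0.0, 0.02, (embed_dim, hidden_dim)),
        "w_a": rng.normal(0.0, 0.02, (hidden_dim, rank * d_in)),
        "w_b": rng.normal(0.0, 0.02, (hidden_dim, d_out * rank)),
        "shape": (d_in, d_out, rank),
    }


def generate_lora(params, task_embedding):
    """One forward pass: embedding -> LoRA factors A (rank x d_in), B (d_out x rank)."""
    d_in, d_out, rank = params["shape"]
    hidden = np.tanh(task_embedding @ params["w1"])
    a = (hidden @ params["w_a"]).reshape(rank, d_in)
    b = (hidden @ params["w_b"]).reshape(d_out, rank)
    return a, b


def adapted_forward(x, w_frozen, a, b, alpha=16.0):
    """Compute x (W + (alpha / rank) * B A)^T without modifying w_frozen."""
    rank = a.shape[0]
    return x @ w_frozen.T + (alpha / rank) * (x @ a.T) @ b.T


# Usage with made-up dimensions: the base weight stays frozen, and only the
# generated low-rank factors change from one task embedding to the next.
hypernet = make_hypernetwork(embed_dim=32, hidden_dim=64, d_in=128, d_out=96, rank=8)
rng = np.random.default_rng(1)
a, b = generate_lora(hypernet, rng.normal(size=32))
w_frozen = rng.normal(size=(96, 128))
y = adapted_forward(rng.normal(size=(4, 128)), w_frozen, a, b)
```

In this toy version the hypernetwork weights are random, so the generated adapter is meaningless; the meta-training phase described above is what would make the emitted factors useful.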

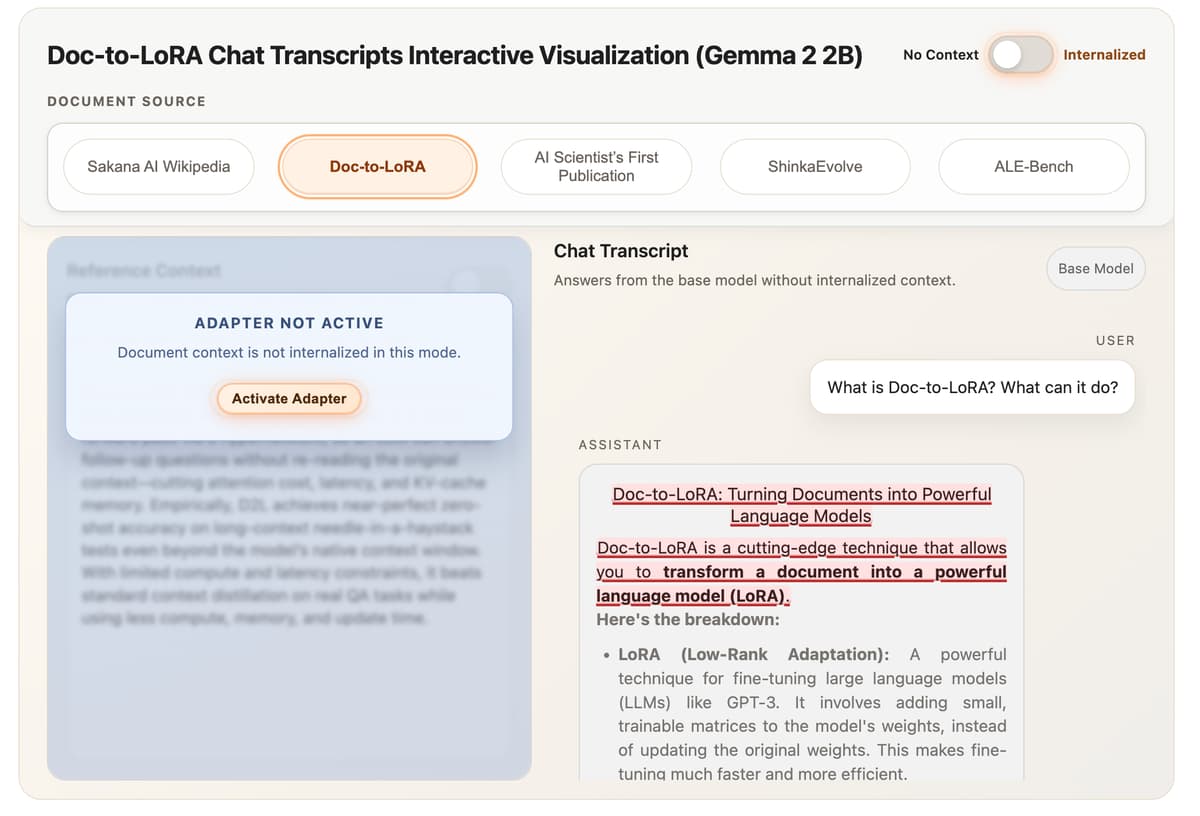

Text‑to‑LoRA (T2L) leverages a task encoder that translates plain‑language prompts into the A and B matrices required for LoRA adaptation. Trained via LoRA reconstruction and multi‑task supervised fine‑tuning, T2L consistently matches or exceeds specialist adapters on benchmarks such as GSM8K and ARC‑Challenge, while delivering more than a four‑fold reduction in adaptation latency compared with three‑shot in‑context learning. Doc‑to‑LoRA (D2L) extends this concept to whole documents, using a Perceiver‑style cross‑attention module to map variable‑length token streams into fixed‑shape adapters. Its chunk‑wise processing enables handling of sequences four times longer than a model’s native window, shrinking VRAM usage from over 12 GB to under 50 MB for 128K‑token inputs and achieving sub‑second internalization.
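The mapping from a variable-length token stream to a fixed-shape adapter can be sketched as follows. This is a rough illustration of Perceiver-style cross-attention in general, not the D2L implementation: all names (`init_params`, `cross_attend`, `document_to_lora`), the dimensions, and in particular the choice to average per-chunk summaries are assumptions, since the article does not say how chunk outputs are combined. A fixed set of learned latent queries attends over however many tokens arrive, so the output shape depends only on the number of latents.

```python
import numpy as np


def init_params(d_model, d_in, d_out, rank, seed=0):
    """Toy parameters; one latent query per LoRA rank (an assumption)."""
    rng = np.random.default_rng(seed)
    scale = 1.0 / np.sqrt(d_model)
    return {
        "latents": rng.normal(0.0, 1.0, (rank, d_model)),
        "w_q": rng.normal(0.0, scale, (d_model, d_model)),
        "w_k": rng.normal(0.0, scale, (d_model, d_model)),
        "w_v": rng.normal(0.0, scale, (d_model, d_model)),
        "w_a": rng.normal(0.0, scale, (d_model, d_in)),
        "w_b": rng.normal(0.0, scale, (d_model, d_out)),
    }


def softmax(scores, axis=-1):
    """Numerically stable softmax."""
    shifted = scores - scores.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def cross_attend(latents, tokens, w_q, w_k, w_v):
    """Fixed latent queries attend over a token sequence of any length."""
    q = latents @ w_q                                   # (rank, d_model)
    k = tokens @ w_k                                    # (seq_len, d_model)
    v = tokens @ w_v                                    # (seq_len, d_model)
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))   # (rank, seq_len)
    return weights @ v                                  # (rank, d_model)


def document_to_lora(tokens, params, chunk_size=512):
    """Summarise each chunk, average the summaries (assumed), project to A and B."""
    chunks = [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]
    summaries = [
        cross_attend(params["latents"], chunk, params["w_q"], params["w_k"], params["w_v"])
        for chunk in chunks
    ]
    pooled = np.mean(summaries, axis=0)                 # (rank, d_model)
    a = pooled @ params["w_a"]                          # (rank, d_in)
    b = (pooled @ params["w_b"]).T                      # (d_out, rank)
    return a, b


# Usage: documents of different lengths yield adapters of identical shape.
params = init_params(d_model=16, d_in=24, d_out=20, rank=4)
rng = np.random.default_rng(0)
a_short, b_short = document_to_lora(rng.normal(size=(100, 16)), params, chunk_size=32)
a_long, b_long = document_to_lora(rng.normal(size=(1000, 16)), params, chunk_size=32)
```

Because each chunk is attended to separately, peak memory in this sketch scales with `chunk_size` rather than with the full document length, which is the property that chunk-wise processing relies on.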

The commercial implications are significant. Enterprises can now embed extensive knowledge bases, legal contracts, or product manuals directly into LLM weights, eliminating costly prompt engineering and memory bottlenecks. Moreover, D2L’s ability to ingest visual embeddings from a vision‑language model paves the way for multimodal assistants that learn from images without retraining the text backbone. As hypernetwork‑driven adaptation matures, we can expect a wave of plug‑and‑play AI services that deliver domain‑specific expertise on the fly, accelerating AI deployment across finance, healthcare, and customer support.
