Spotify Denies It’s Pushing Unwanted AI Slop Onto Subscribers, Claiming It ‘Does Not Promote or Penalize Tracks Created Using AI Tools’

January 14, 2026


Why It Matters

The dispute highlights the tension between emerging AI creativity and listener expectations, potentially shaping platform policies and artist royalties across the streaming market.

Key Takeaways

- Spotify says it neither promotes nor penalizes AI tracks.

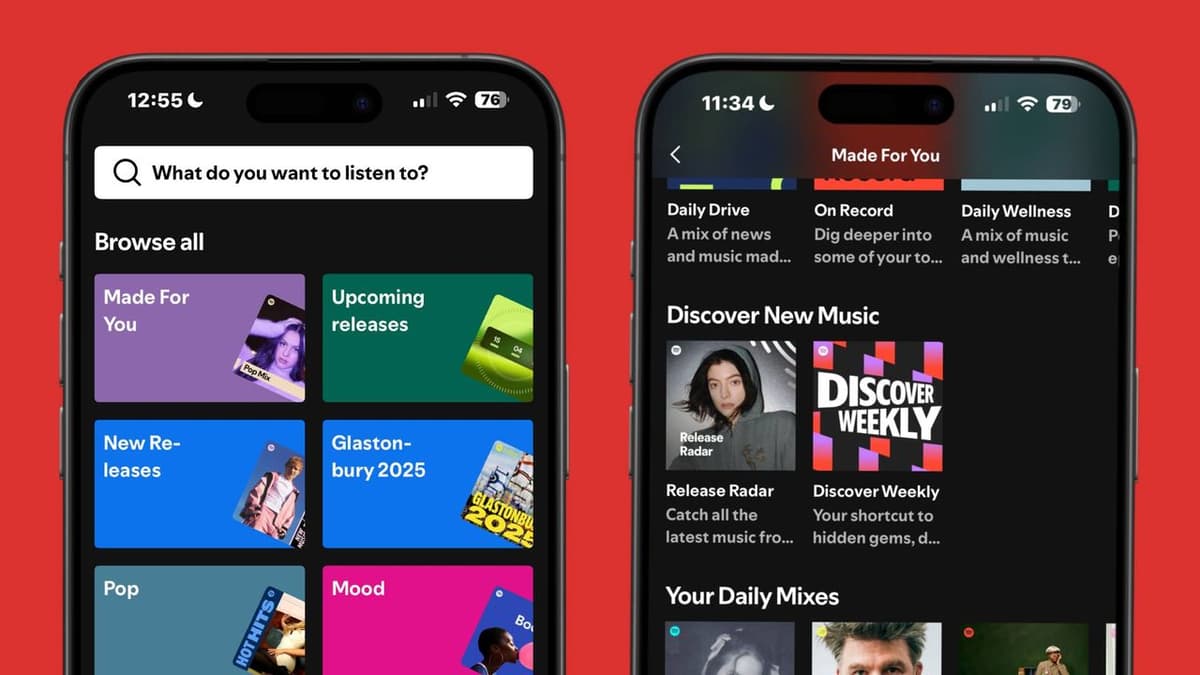

- Users report AI songs appearing in Discover Weekly and Release Radar.

- Bandcamp bans AI-generated music and urges users to flag content.

- Spotify announced AI spam filters and industry‑standard credit disclosures.

- Distinguishing AI from human-made tracks remains technically difficult.

Pulse Analysis

The rise of AI‑generated music has forced streaming services to confront a new content moderation dilemma. Spotify argues that its role is limited to policing harmful uses, such as impersonation or deceptive spam, while allowing creators to experiment with AI tools. Its September announcement of enhanced AI protections, including a dedicated spam‑filtering system and industry‑standard AI disclosures in credits, signals an effort to balance innovation with user experience, yet the platform admits that drawing a clear line between AI and non‑AI tracks is challenging.

Competitors are taking divergent approaches. Deezer has already implemented filters that label AI‑produced songs, and Bandcamp has gone further by outright banning AI‑generated releases, relying on community reporting to enforce the rule. These contrasting strategies underscore the technical difficulty of detecting AI‑crafted audio, which can mimic human production closely. As AI models improve, the industry may see a patchwork of standards, prompting calls for unified metadata protocols that can reliably flag AI content across services.

For artists and listeners, the stakes are high. Unwanted AI tracks can dilute curated playlists, erode trust, and potentially divert royalties away from human creators. Regulators may soon intervene, demanding transparent labeling and consistent enforcement to protect intellectual property and consumer choice. Spotify’s current stance—neither promoting nor penalizing AI music—places it at a crossroads, where future policy decisions will likely influence market dynamics, competitive positioning, and the broader discourse on AI’s role in the music ecosystem.
