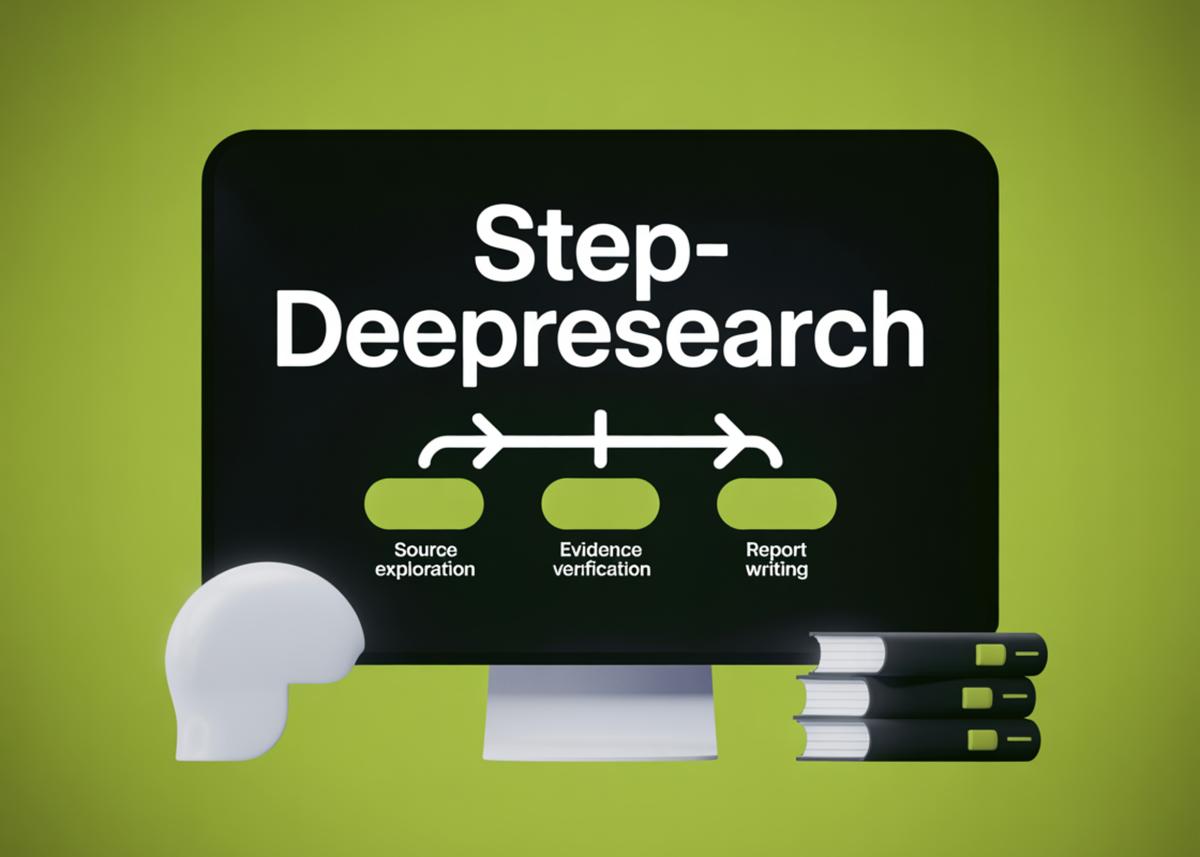

StepFun AI Introduces Step-DeepResearch: A Cost-Effective Deep Research Agent Model Built Around Atomic Capabilities

January 25, 2026


Why It Matters

By delivering deep‑research quality at a fraction of the compute cost, Step‑DeepResearch lowers barriers for enterprises seeking reliable, citation‑rich analysis without deploying multiple specialized agents.

Key Takeaways

- Single 32B model integrates planning, search, verification, and reporting.

- Atomic capability pipelines train each skill with specialized data.

- Long-context training up to 128k tokens reduces inference cost.

- ReAct loop uses 600+ curated, trusted domains for reliable sources.

- Benchmarks show 61% rubric compliance, rivaling larger research agents.

Pulse Analysis

The rise of AI‑driven research assistants has been hampered by fragmented toolchains and high inference expenses. Step‑DeepResearch tackles this by collapsing the typical multi‑agent architecture into a single, 32‑billion‑parameter model that can plan, retrieve, verify, and author reports autonomously. This consolidation not only streamlines deployment but also reduces latency, as the model no longer needs to hand off context between disparate services. By leveraging a ReAct‑style reasoning loop, the agent can interleave thought, tool invocation, and observation, mirroring human research workflows while maintaining tight control over token usage.
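
To make the ReAct-style loop concrete, here is a minimal Python sketch of how thought, tool invocation, and observation can be interleaved, with search results filtered against a trusted-domain allowlist. It is an illustration under stated assumptions, not StepFun's implementation: the names `react_loop`, `scripted_policy`, `stub_search`, and `TRUSTED_DOMAINS` are hypothetical, the two allowlisted domains are placeholders for the 600+ curated domains (which the article does not list), and the scripted policy stands in for the 32B model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple
from urllib.parse import urlparse

# Hypothetical placeholder allowlist; the real curated domain list is not published here.
TRUSTED_DOMAINS = {"example.org", "example.edu"}


def is_trusted(url: str) -> bool:
    """Return True if the URL's host is an allowlisted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


@dataclass
class Step:
    thought: str
    action: str
    argument: str
    observation: str = ""


# A policy maps (question, history) to (thought, action, argument).
Policy = Callable[[str, List[Step]], Tuple[str, str, str]]


def react_loop(question: str, policy: Policy,
               tools: Dict[str, Callable[[str], str]],
               max_steps: int = 8) -> Tuple[str, List[Step]]:
    """Alternate thought -> action -> observation until the policy finishes."""
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, argument = policy(question, history)
        if action == "finish":
            history.append(Step(thought, action, argument))
            return argument, history
        tool = tools.get(action)
        observation = tool(argument) if tool else f"unknown tool: {action}"
        history.append(Step(thought, action, argument, observation))
    return "", history  # step budget exhausted without a final report


def stub_search(query: str) -> str:
    """Stand-in search tool: returns canned hits, keeping only trusted domains."""
    hits = ["https://docs.example.org/a", "https://spam.invalid/b"]
    return "\n".join(u for u in hits if is_trusted(u))


def scripted_policy(question: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for the model: search once, then write a report from the observation."""
    if not history:
        return ("I need sources.", "search", question)
    return ("I have enough evidence.", "finish",
            f"Report citing: {history[-1].observation}")


if __name__ == "__main__":
    answer, trace = react_loop("What is Step-DeepResearch?", scripted_policy,
                               {"search": stub_search})
    print(answer)
    for step in trace:
        print(step.action, "->", step.observation or "(final)")
```

Because a single policy drives every step, the whole trace stays in one context rather than being handed between services, which is the consolidation the paragraph above describes.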

A distinctive feature of Step‑DeepResearch is its focus on atomic capabilities, each nurtured through dedicated data synthesis pipelines. Planning data derives from real technical reports, while deep‑information‑seeking training uses graph‑based queries over massive knowledge bases like Wikidata5m. Reflection and verification benefit from multi‑agent teacher traces that enforce fact‑checking before report generation. Training progresses through three stages: mid‑training with up to 32k‑token contexts, fine‑tuning with 128k‑token windows and explicit tool calls, and reinforcement learning with a rubric‑driven reward model. This staged approach yields robust long‑horizon reasoning without the token explosion that typically plagues large language models.
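
The three-stage schedule can be written down as plain data, which makes the progression easier to see. The sketch below records only what the paragraph states; everything else is an assumption made for illustration. In particular, the `Stage` type and `SCHEDULE` list are hypothetical, "32k" and "128k" are interpreted as 32 × 1024 and 128 × 1024 tokens, the context limit for the reinforcement learning stage is left as `None` because it is not given, and `rubric_reward` is a simple fraction-of-criteria stand-in rather than the learned reward model the article refers to.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Stage:
    name: str
    max_context_tokens: Optional[int]  # None means the article does not state a limit
    explicit_tool_calls: bool
    signal: str  # what drives learning in this stage


# Assumption: "32k" and "128k" are read as multiples of 1024 tokens.
SCHEDULE: List[Stage] = [
    Stage("mid-training", 32 * 1024, False, "next-token prediction on synthesized data"),
    Stage("fine-tuning", 128 * 1024, True, "supervised trajectories with tool calls"),
    Stage("reinforcement-learning", None, True, "rubric-driven reward"),
]


def rubric_reward(criteria_met: List[bool]) -> float:
    """Hypothetical stand-in reward: the share of rubric criteria a report satisfies."""
    if not criteria_met:
        return 0.0
    return sum(criteria_met) / len(criteria_met)


if __name__ == "__main__":
    for stage in SCHEDULE:
        limit = stage.max_context_tokens or "unspecified"
        print(f"{stage.name}: context={limit}, tools={stage.explicit_tool_calls}")
    # A report meeting 3 of 4 rubric criteria would score 0.75 under this stand-in.
    print(rubric_reward([True, True, False, True]))
```

A real rubric-driven reward model would score free-form reports against the criteria rather than receive booleans directly; the function above only shows the shape of the signal.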

From a market perspective, Step‑DeepResearch’s cost‑effective architecture could democratize access to high‑quality research automation. Its 61.42% compliance on Scale AI’s rubric places it on par with heavyweight offerings from OpenAI and Google, yet it runs on a comparatively modest 32B model. Enterprises in finance, law, and academia stand to benefit from faster, citation‑rich analyses without the overhead of multiple specialized agents. As the model matures, its curated search index and external memory mechanisms may set a new standard for trustworthy AI‑assisted research, prompting competitors to adopt similar atomic‑capability frameworks.
