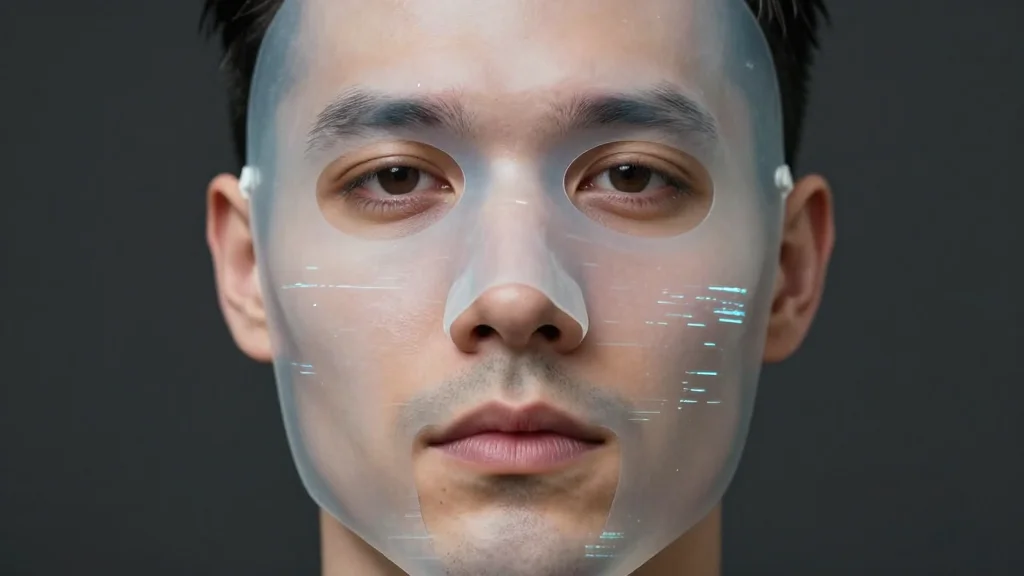

The Deepfake Dilemma: From Financial Fraud to Reputational Crisis

Why It Matters

Deepfakes erode trust in real‑time communications and expose firms to multi‑million‑dollar fraud and severe brand damage, which makes resilient verification processes a strategic imperative.

Key Takeaways

- 43% of security leaders reported audio deepfake attacks last year

- Arup lost about $25 million after a CFO deepfake scam

- Fake CEO videos forced the Bombay Stock Exchange (BSE) to issue urgent investor warnings

- Rapid forensic analysis and legal takedowns are essential response steps

Pulse Analysis

The surge of generative AI has turned deepfakes from a novelty into a mainstream security concern. Early synthetic media had obvious flaws, like odd blinking or distorted limbs, but today’s models, such as Nano Banana Pro, produce seamless synthetic media that can fool even seasoned executives. Gartner’s 2025 data, showing that nearly half of cybersecurity leaders have encountered deepfakes, underscores how quickly the threat surface has expanded across industries, from finance to public infrastructure.

Financial fraud remains the most immediate danger. In the Arup incident, a fabricated appearance by the firm’s CFO on a video call prompted an employee to transfer about $25 million. The underlying weakness is that voice and video channels carry no built‑in authentication, so attackers can bypass traditional controls with a single convincing call. Deepfakes are also being weaponized to sabotage reputations: the Bombay Stock Exchange had to issue an emergency alert after a fake CEO endorsement circulated, a sign of how quickly false narratives can destabilize markets and erode investor confidence. These attacks exploit human heuristics, making instinctive trust in familiar faces or voices unreliable.

Mitigating the deepfake dilemma requires a layered approach. Technical forensics can quickly flag manipulated media, while legal teams coordinate takedowns and enforce watermarking standards. Clear, timely communication with stakeholders helps contain reputational fallout. In the long run, industry‑wide authentication—akin to HTTPS lock icons—combined with embedded digital watermarks will create a verifiable trust framework. Until such standards are universal, organizations must invest in rapid verification tools and cross‑functional response playbooks to stay ahead of synthetic media threats.
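To make the HTTPS analogy more concrete, here is a minimal Python sketch of the core idea behind content authentication: the sender attaches a cryptographic tag to a recording, and the recipient rejects anything whose tag does not verify. It assumes a secret key shared in advance and uses the standard library’s HMAC as a simplified stand‑in for the public‑key signatures that HTTPS and media‑provenance schemes actually rely on. The names `tag_media`, `verify_media`, and `SHARED_KEY` are hypothetical. Unlike an embedded watermark, this tag travels alongside the file rather than inside it.

```python
import hashlib
import hmac

# Hypothetical key shared in advance by sender and verifier. Real provenance
# schemes use public-key signatures rather than a shared secret like this.
SHARED_KEY = b"example-key-distributed-out-of-band"


def tag_media(media: bytes, key: bytes = SHARED_KEY) -> str:
    """Return a hex HMAC-SHA256 tag binding the key holder to these exact bytes."""
    return hmac.new(key, media, hashlib.sha256).hexdigest()


def verify_media(media: bytes, tag: str, key: bytes = SHARED_KEY) -> bool:
    """Return True only if the tag matches; any edit to the media or a wrong key fails."""
    expected = tag_media(media, key)
    # compare_digest compares in constant time, so timing does not leak the tag
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    original = b"<video frames from a genuine CFO call>"
    tag = tag_media(original)
    print(verify_media(original, tag))  # True
    tampered = original.replace(b"genuine", b"synthetic")
    print(verify_media(tampered, tag))  # False
```

A failed check does not prove a clip is a deepfake, only that it cannot be traced, unaltered, to a trusted sender. That is why such tags would complement, rather than replace, forensic analysis.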
