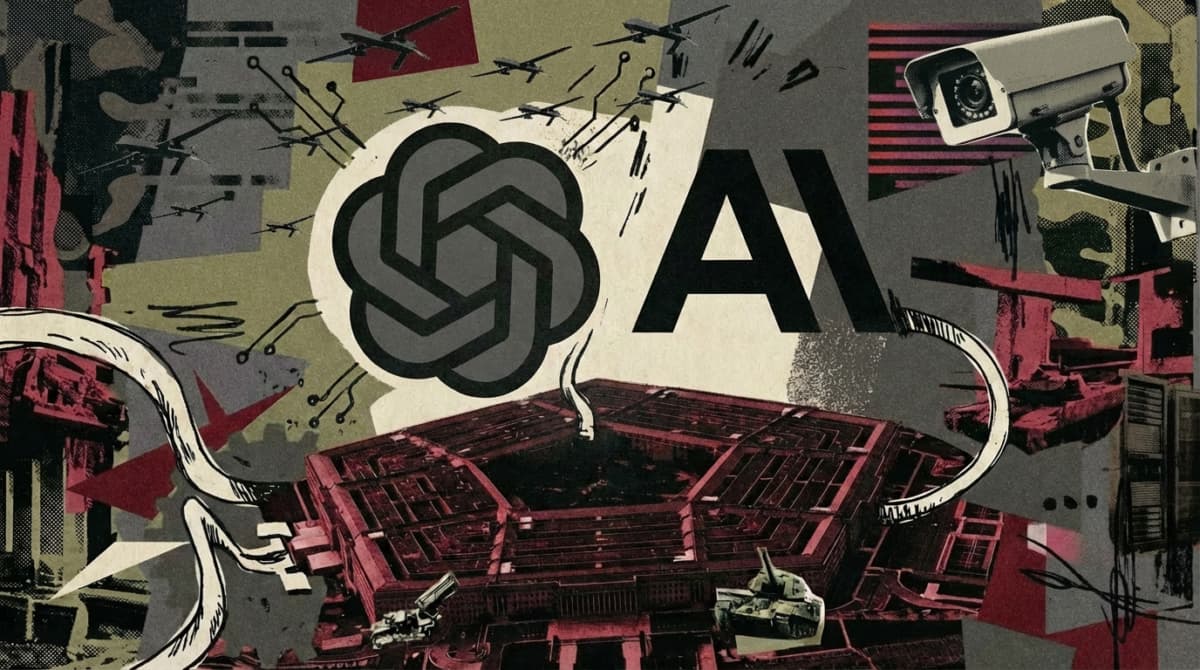

The Pentagon-OpenAI-Anthropic Fallout Comes Down to Three Words: "All Lawful Use"

March 1, 2026


Why It Matters

The deal sets a precedent for how AI firms negotiate military access, influencing future governance of AI in national security and shaping public trust in tech‑government partnerships.

Key Takeaways

- OpenAI signed its Pentagon deal hours after the Anthropic ban.
- The contract permits “all lawful use” with three red‑line restrictions.
- Vague language leaves loopholes for surveillance and autonomous weapons.
- Collective industry pushback weakened; OpenAI undercut Anthropic's stance.
- The debate raises AI governance and military oversight concerns.

Pulse Analysis

The Pentagon’s rapid pivot to OpenAI after President Trump’s directive to drop Anthropic underscores the growing strategic value of generative AI for defense. While OpenAI’s contract promises to restrict domestic mass surveillance, autonomous lethal systems, and high‑risk automated decisions, the reliance on the phrase “all lawful purposes” transfers interpretive power to existing statutes and Department of War directives. This legal framing leaves room for the government to exploit gray areas, such as commercial data analysis that skirts surveillance definitions, and raises questions about the adequacy of current AI policy frameworks.

Technical safeguards outlined by OpenAI, including cloud‑only deployment and human‑in‑the‑loop requirements, aim to mitigate misuse. However, the Department of War’s Directive 3000.09 mandates only “appropriate levels of human judgment,” a standard that remains undefined and could be satisfied by minimal oversight. Critics argue that without explicit prohibitions, AI models could still influence targeting decisions in semi‑autonomous weapons, blurring the line between advisory tools and decision‑makers. The contract therefore illustrates how ambiguous language can create operational loopholes, prompting calls for clearer statutory language and enforceable oversight mechanisms.

The industry reaction reveals a fracture in collective resistance to military AI integration. Anthropic’s refusal to accept the same terms galvanized user support, propelling its Claude platform to the top of the App Store, while OpenAI’s solo agreement diluted the bargaining power of smaller labs. This episode may embolden the Pentagon to pursue similar arrangements with other providers, potentially normalizing a permissive stance toward AI in warfare. Stakeholders now face a pivotal choice: push for unified red‑line standards across the sector or risk a fragmented landscape where individual firms set divergent precedents for AI’s role in national security.

