What if We Treated the Nvidia GB10 as an Employee: AI Could Remove Reporting Roles Entirely From Businesses with Thousands of Job Losses, Here's How This Reviewer Did It

February 7, 2026


Why It Matters

The proof‑of‑concept shows that reliable on‑premise AI can displace entire reporting functions, reshaping cost structures and talent allocation across data‑driven enterprises.

Key Takeaways

- Nvidia GB10 AI automates reporting workflows.

- Larger models achieve >99.9% step accuracy.

- Automation can replace dedicated reporting staff.

- On‑premise AI keeps data secure.

- Cost recovery within twelve months.

Pulse Analysis

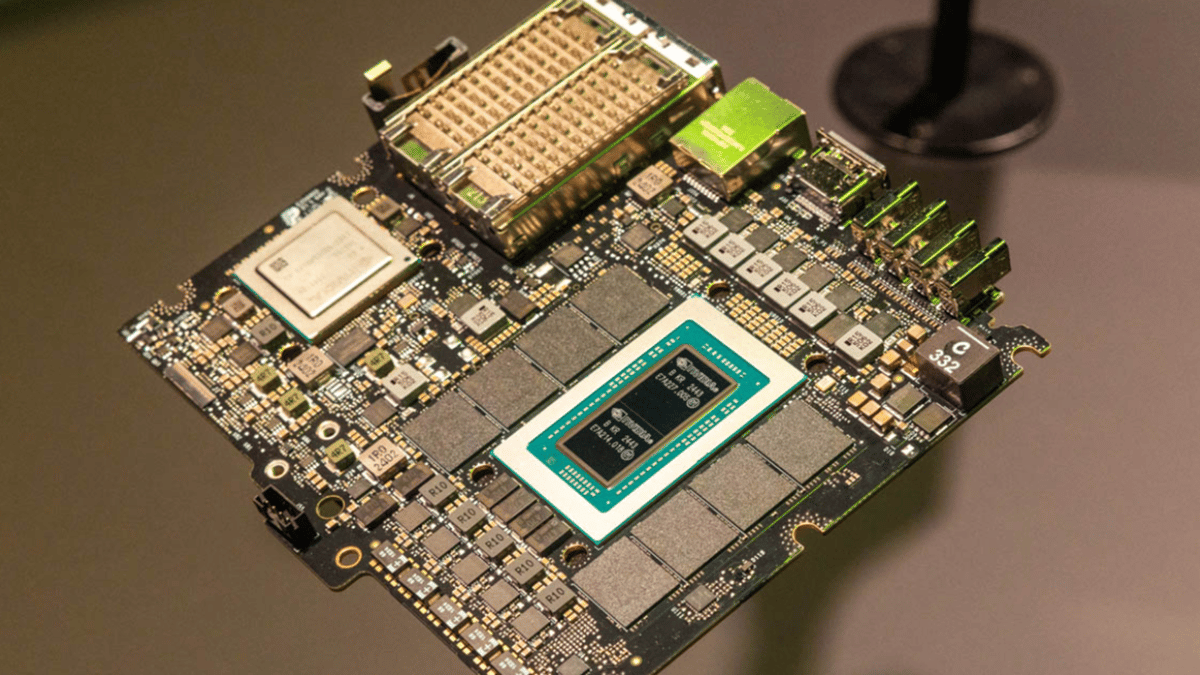

The rise of on‑premise AI platforms like Nvidia's GB10 marks a turning point for organizations that rely heavily on manual data reporting. By running models on local GPU hardware, companies can sidestep the latency and privacy concerns associated with cloud services while still using powerful large language models. This shift aligns with a broader industry trend toward edge computing, where processing power is brought closer to the data source, enabling faster decision‑making and tighter security controls.

In the ServeTheHome experiment, the integration of n8n, a low‑code workflow orchestrator, with GPT‑OSS models demonstrated how complex, multi‑source queries can be handled end‑to‑end without human oversight. The larger 120B FP8 model delivered near‑perfect step accuracy, cutting the frequency of errors from roughly one a week to roughly one a year. Crucially, the system's cost structure proved favorable: the capital outlay for two Dell Pro Max systems equipped with GB10 units was recovered within twelve months, thanks to the elimination of a full‑time reporting role and the ability to repurpose staff for strategic initiatives such as editorial management.
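To see why small gains in step accuracy matter so much, it helps to work through how per‑step accuracy compounds over a multi‑step workflow. The following is a minimal sketch, not the reviewer's method: it assumes every step succeeds or fails independently, and the function names, step counts, and run frequencies are hypothetical values chosen for illustration rather than figures from the ServeTheHome experiment.

```python
def workflow_success_rate(step_accuracy: float, steps: int) -> float:
    """Probability that every step of one run succeeds.

    Assumes steps fail independently of each other (a simplification).
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return step_accuracy ** steps


def expected_days_between_failures(
    step_accuracy: float, steps: int, runs_per_day: float
) -> float:
    """Average number of days between failed runs under the same assumption."""
    if runs_per_day <= 0:
        raise ValueError("runs_per_day must be positive")
    failure_rate = 1.0 - workflow_success_rate(step_accuracy, steps)
    if failure_rate == 0.0:
        return float("inf")  # a perfect model never fails
    return 1.0 / (failure_rate * runs_per_day)


if __name__ == "__main__":
    # Hypothetical scenario: a 10-step report generated once per day.
    for accuracy in (0.98, 0.999, 0.9999):
        days = expected_days_between_failures(accuracy, steps=10, runs_per_day=1)
        print(f"step accuracy {accuracy}: one failed run every {days:.0f} days on average")
```

Under these assumptions, the interval between failed runs grows roughly in inverse proportion to the per‑step error rate, which is why moving from a smaller model to one with near‑perfect step accuracy can change failures from a routine event into a rare one.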

The broader implication is a potential redefinition of the reporting function across sectors ranging from media to finance. As AI models become more reliable, the skill set required for data teams will shift from manual extraction to workflow design, model supervision, and exception handling. Companies that adopt this technology early can gain a competitive edge by reallocating human talent to higher‑impact analysis while maintaining rigorous data governance. However, success hinges on careful model selection, robust workflow testing, and strict on‑premise data controls to mitigate risks associated with automation errors and security breaches.
