Is Your Solar Generator’s 200-Watt Panel Not Delivering 200 Watts? Here’s Why the Actual Output Is Often Much Lower.

March 6, 2026

Why It Matters

Understanding the gap between rated and actual wattage prevents under‑performance and protects equipment, guiding consumers to size and pair solar panels correctly for reliable off‑grid power.

Key Takeaways

- Rated wattage assumes ideal sunlight and angle

- Real output is often 50‑75% of spec

- Mismatch can overload power stations

- Connector type (MC4, XT60) must match

- Choose panels matched to station’s input range

Pulse Analysis

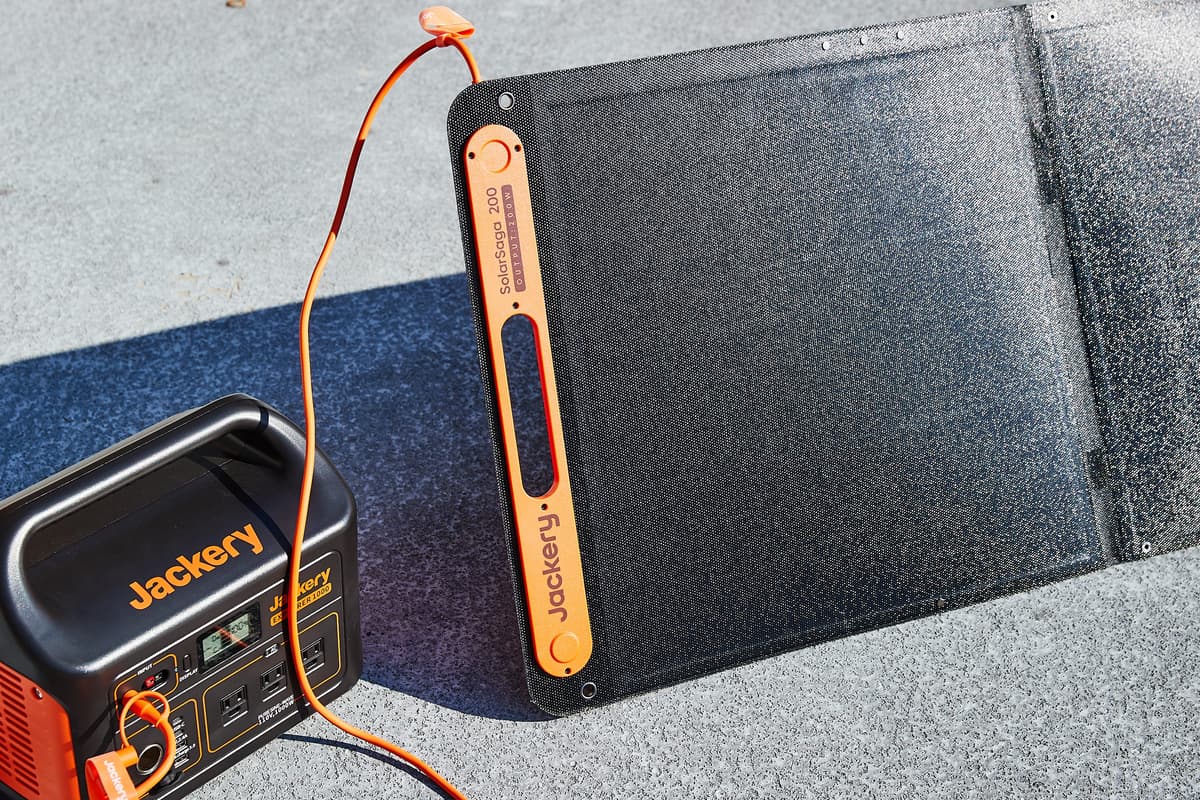

When manufacturers quote a solar panel’s wattage, they are describing the maximum power the panel can generate under standard test conditions: 1,000 W/m² of sunlight striking the panel head‑on, at a cell temperature of 25 °C. In practice, clouds, shading, sub‑optimal tilt and higher temperatures can cut that figure by a quarter to a half, leaving most users with only 100‑150 watts from a nominal 200‑watt panel. This discrepancy matters because the charging time of a portable power station depends on the actual input, which determines how quickly you can restore critical devices during an outage or at a campsite.
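To make the effect on charging time concrete, here is a minimal Python sketch. The 1,000 Wh battery capacity is a hypothetical figure chosen for illustration, and the calculation assumes constant sun and ignores conversion losses inside the station, so treat the results as best-case estimates.

```python
def charge_hours(battery_wh: float, rated_panel_w: float, derate: float) -> float:
    """Hours of steady sun needed to fill a battery.

    derate is the fraction of rated wattage the panel actually delivers
    (the article cites roughly 0.50 to 0.75 in real conditions).
    Charging losses inside the station are ignored (simplifying assumption).
    """
    if not 0 < derate <= 1:
        raise ValueError("derate must be in the range (0, 1]")
    return battery_wh / (rated_panel_w * derate)


# Hypothetical 1,000 Wh station paired with a nominal 200 W panel.
for derate in (1.0, 0.75, 0.5):
    hours = charge_hours(1000, 200, derate)
    print(f"{derate:.0%} of rated output: {hours:.1f} h")
```

Under these assumptions, a panel running at half its rating takes twice as long to fill the battery as the spec sheet would suggest.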

Equally important is matching the panel’s output to the power station’s input specifications. Each battery‑powered station lists a maximum solar input, often far lower than the panel’s peak rating; exceeding this limit, particularly on voltage, can trigger protective shutdowns or, in the worst case, damage the charge controller. Consumers should verify the station’s accepted voltage and current range and select a panel whose peak output stays comfortably within those bounds. Connector compatibility also plays a role: MC4 and XT60 are both common, but mismatched plugs require adapters that can introduce resistance or safety concerns.
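The checks described above can be sketched as a small pre-purchase comparison. Everything here is illustrative: the `Panel` and `StationInput` classes, their field names, and the example numbers are hypothetical and not taken from any product's spec sheet. The sketch assumes the panel's open-circuit voltage and short-circuit current are the right figures to compare against the station's limits, since those are the highest values a panel produces.

```python
from dataclasses import dataclass


@dataclass
class Panel:
    rated_w: float   # nameplate wattage
    voc_v: float     # open-circuit voltage (assumed worst case for voltage)
    isc_a: float     # short-circuit current (assumed worst case for current)
    connector: str   # e.g. "MC4" or "XT60"


@dataclass
class StationInput:
    max_w: float
    min_v: float
    max_v: float
    max_a: float
    connector: str


def check_pairing(panel: Panel, station: StationInput) -> list[str]:
    """Return a list of human-readable problems; an empty list means no issue found."""
    issues = []
    if panel.voc_v > station.max_v:
        issues.append("panel voltage exceeds the station's maximum input voltage")
    if panel.voc_v < station.min_v:
        issues.append("panel voltage is below the station's minimum input voltage")
    if panel.isc_a > station.max_a:
        issues.append("panel current exceeds the station's maximum input current")
    if panel.rated_w > station.max_w:
        issues.append("panel rating exceeds the station's maximum solar input")
    if panel.connector != station.connector:
        issues.append("connectors differ, so an adapter would be needed")
    return issues


# Hypothetical example values, not real product specifications.
panel = Panel(rated_w=200, voc_v=24.0, isc_a=10.5, connector="MC4")
station = StationInput(max_w=300, min_v=12.0, max_v=30.0, max_a=12.0, connector="XT60")
for issue in check_pairing(panel, station):
    print("Warning:", issue)
```

In this made-up pairing the electrical figures all fit, and the only warning raised is the connector mismatch.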

The market now offers bundled kits where manufacturers pair a power station with a panel engineered for optimal performance, simplifying the buying decision. For users who prefer custom setups, the rule of thumb is to size the panel at 70‑80% of the station’s maximum input and to factor in typical sun exposure for the intended location. By accounting for realistic output, input limits, and connector standards, buyers can ensure their solar generator delivers reliable, efficient power when it matters most.
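As a rough illustration of the 70‑80% rule of thumb, the sketch below computes a target panel size from a station's maximum solar input and then applies the 50‑75% real‑world range mentioned earlier. The function names and the 500 W station are hypothetical, chosen only to show the arithmetic.

```python
def panel_size_range(station_max_input_w: float,
                     low: float = 0.70, high: float = 0.80) -> tuple[float, float]:
    """Panel rating range suggested by the 70-80% rule of thumb."""
    return station_max_input_w * low, station_max_input_w * high


def realistic_output_range(panel_rated_w: float,
                           low: float = 0.50, high: float = 0.75) -> tuple[float, float]:
    """Expected real-world output, using the 50-75% range quoted in the article."""
    return panel_rated_w * low, panel_rated_w * high


# Hypothetical station that accepts up to 500 W of solar input.
size_lo, size_hi = panel_size_range(500)
print(f"Suggested panel rating: {size_lo:.0f}-{size_hi:.0f} W")

out_lo, out_hi = realistic_output_range(size_hi)
print(f"Likely real output from a {size_hi:.0f} W panel: {out_lo:.0f}-{out_hi:.0f} W")
```

For the hypothetical 500 W station this suggests a 350‑400 W panel, which would likely deliver 200‑300 W in typical conditions.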
