Recent Posts

Video•Feb 25, 2026

AI Companies Don't Want You to Know This | VS Code + Continue

The video walks viewers through building a completely free, agentic integrated development environment (IDE) by pairing Visual Studio Code with the open‑source Continue.dev extension and locally hosted large language models (LLMs). Instead of relying on paid services such as GitHub Copilot, Google Gemini, or Amazon CodeWhisperer, the presenter runs the models locally via Ollama, eliminating external API calls and their associated fees.

The core of the setup is a three‑model architecture in Continue.dev: a chat model for conversational code queries, an autocomplete model for real‑time suggestions, and an embedding model that indexes the project’s source files for fast semantic search. The presenter installs these models (e.g., Llama 3, a lightweight autocomplete model, and a Nomic embedding model), configures permissions, and connects them to VS Code, all in roughly ten minutes.

The demo uses the Argo CD repository to illustrate the workflow. The chat model explains the entire project in seconds, the extension can highlight and describe individual functions, and edit mode can automatically insert comments or suggest improvements, mirroring Copilot’s in‑IDE experience. Additional features such as multi‑session handling, Model Context Protocol (MCP) server integration, and customizable model selection underscore the flexibility of the setup.

By letting developers run capable AI assistants locally, this approach broadens access to advanced coding tools, cuts subscription costs, and keeps proprietary code on the developer’s own machine. Organizations can adopt AI‑driven development without exposing intellectual property to third‑party APIs, potentially reshaping standard IDE workflows.
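The embedding model’s job, indexing source files so relevant code can be found by meaning rather than by exact keyword, can be sketched in a few lines of Python. This is a minimal illustration, not Continue.dev’s actual indexer: it assumes an Ollama server on its default local address (`http://localhost:11434`) exposing the `/api/embed` endpoint with the `nomic-embed-text` model already pulled, and the helper names (`ollama_embed`, `build_index`, `search`) are hypothetical. Retrieval is plain cosine similarity over an in-memory list.

```python
import json
import math
import urllib.request
from typing import Callable, List, Sequence, Tuple

# Assumption: Ollama is listening on its default local port.
OLLAMA_URL = "http://localhost:11434"

Vector = List[float]
Embedder = Callable[[List[str]], List[Vector]]


def ollama_embed(texts: List[str], model: str = "nomic-embed-text") -> List[Vector]:
    """Embed a batch of texts via a local Ollama server's /api/embed endpoint."""
    payload = json.dumps({"model": model, "input": texts}).encode("utf-8")
    request = urllib.request.Request(
        f"{OLLAMA_URL}/api/embed",
        data=payload,  # supplying a body makes urllib send a POST
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["embeddings"]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks: List[str], embed: Embedder) -> List[Tuple[str, Vector]]:
    """Embed every code chunk once and keep (chunk, vector) pairs in memory."""
    return list(zip(chunks, embed(chunks)))


def search(
    index: List[Tuple[str, Vector]], query: str, embed: Embedder, top_k: int = 3
) -> List[str]:
    """Return the top_k chunks whose embeddings are most similar to the query's."""
    query_vec = embed([query])[0]
    ranked = sorted(index, key=lambda pair: cosine(query_vec, pair[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]
```

Because `build_index` and `search` take the embedding function as a parameter, the ranking logic can be exercised with a stand-in embedder and no running server. In a real setup, `ollama_embed` would be passed instead, and the chunks would be functions or file sections from the repository (for example, the Argo CD sources used in the demo).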

By Abhishek Veeramalla

Video•Feb 23, 2026

Setup Claude Code for FREE in 3 Simple Steps.

In the tutorial, Abhishek Veeramalla demonstrates how to run Claude Code for free by integrating it with Ollama and leveraging open‑source LLMs such as gpt‑oss, Qwen3‑Coder‑Next, and DeepSeek. The three‑step process eliminates the need for paid API keys, allowing developers...

By Abhishek Veeramalla

Video•Feb 19, 2026

This FREE Tool Can Help You Backup and Restore Anything at Enterprise Level.

Plakar is an open‑source backup solution aimed at DevOps engineers who need enterprise‑level data resilience. The video argues that traditional object storage like S3 lacks point‑in‑time recovery and built‑in encryption, leaving critical workloads exposed to accidental deletion, ransomware, or corruption. Plakar...

By Abhishek Veeramalla

Video•Feb 18, 2026

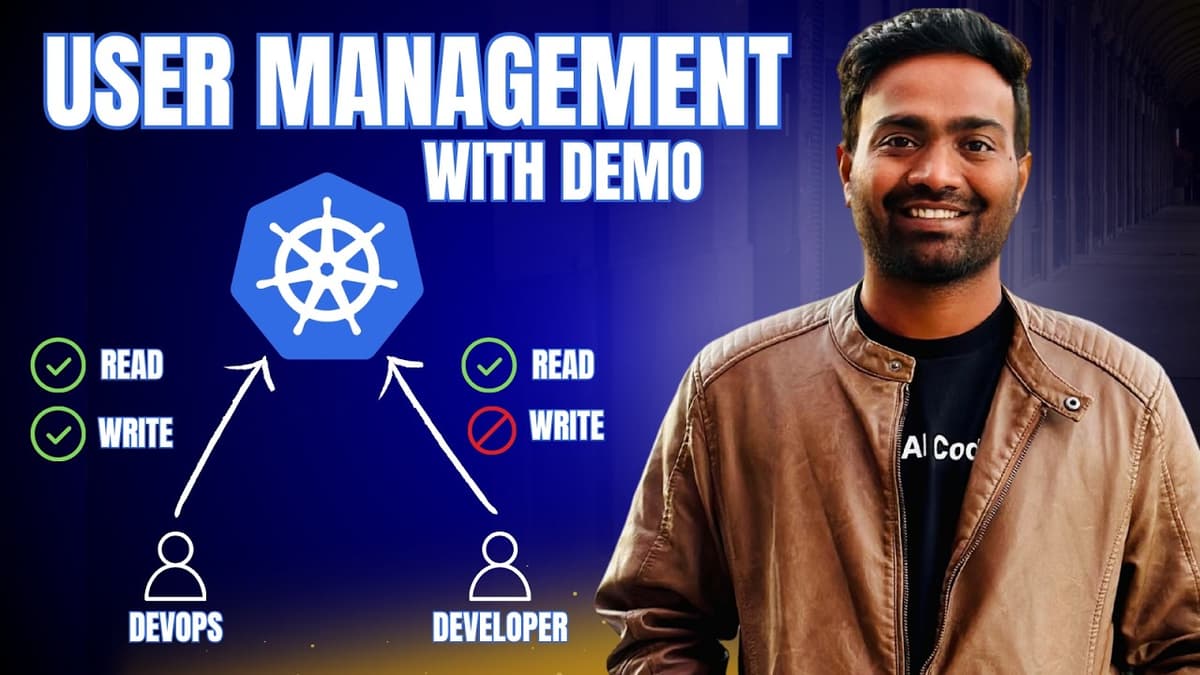

Realtime Kubernetes User Management with Demo | Must Watch

The video tackles the persistent pain points of Kubernetes user management, highlighting how authentication (kubeconfig) and authorization (RBAC) become unwieldy at scale. It explains that distributed kubeconfig files expose cluster IPs, certificates, and tokens, while the native RBAC model forces...

By Abhishek Veeramalla

Video•Feb 10, 2026

Complete FREE Practical Hands-On Labs | KodeKloud FREE Week

In 2026, mastering DevOps or cloud engineering hinges on a clear roadmap and hands‑on practice, which Abhishek highlights in his latest video. He promotes KodeKloud’s limited‑time Free Week, granting unrestricted access to its Standard plan for seven days without credit‑card...

By Abhishek Veeramalla

Video•Feb 9, 2026

I Tested 30 DevOps Tasks with AI to See if AI Can Replace DevOps.

The video documents a two‑day experiment in which creator Abhishek evaluated 20‑30 real‑world DevOps tasks, ranging from beginner to advanced, using several popular large language models (LLMs). He leveraged GitHub Copilot’s ability to switch among models such as Anthropic’s Claude Opus 4.6, Claude Sonnet 4.5, and...

By Abhishek Veeramalla