Recent Posts

Social•Feb 14, 2026

Language Feedback Can Substitute Rewards in Reinforcement Learning

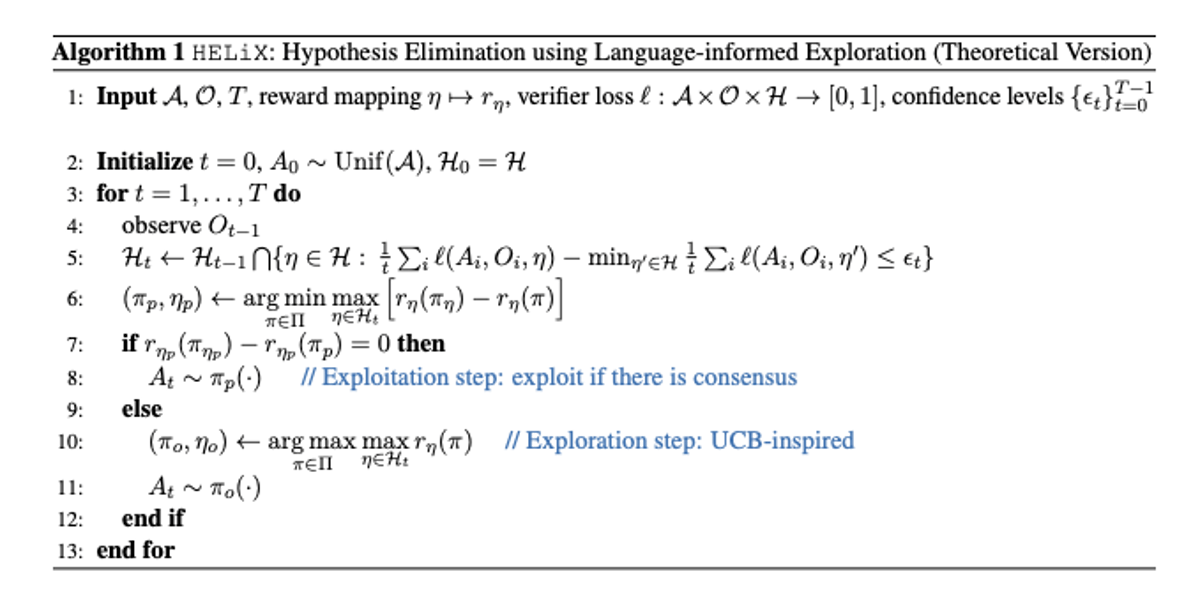

Can natural language replace scalar rewards in reinforcement learning? A new paper from researchers at Stanford, UMD, Netflix, and Microsoft Research presents a formal structure for utilizing natural language as feedback in a reinforcement learning setting they call Learning from Language Feedback (LLF). The premise of the paper is that an agent has access to a hypothesis space that maps meaningfully to the environment's reward and feedback functions and can use a "verifier" function to interpret natural language feedback on its behavior to produce a "verifier loss" over [0, 1]. After the agent takes some action, it receives natural language feedback on it, and the verifier loss measures the feedback's consistency with a specific candidate hypothesis (loss = 0 means the feedback is maximally consistent with a hypothesis, loss = 1 maximally inconsistent). Their regret bounds scale with a new complexity notion called the "transfer eluder dimension." The paper proposes three assumptions: 1. The agent can map hypotheses to reward functions (even though it doesn't directly observe the rewards) 2. A verifier exists that can quantify hypothesis consistency as loss 3. Hypotheses are identifiable and produce distinct feedback distributions under the verifier. I found this quite abstract but interpreted it similarly to full-rank identifiability in GLM. With these assumptions, the paper makes the case that natural language feedback can provide richer information than scalar rewards under standard RL, leading to faster learning. The authors implement an algorithm, HELiX (Hypothesis Elimination with Linguistic eXploration), deployed under three games: Wordle, Battleship, and Minesweeper. In Wordle, the hypothesis space H can be considered to be all five-letter words, with each iteration of the game eliminating candidate words. The paper is analytical and abstract, with the "verifier" concept (proposed largely like an oracle) doing a tremendous amount of work, but it represents an interesting framing of language as feedback in the RL framework. Paper linked below.

By Eric Seufert