Recent Posts

News•Mar 1, 2026

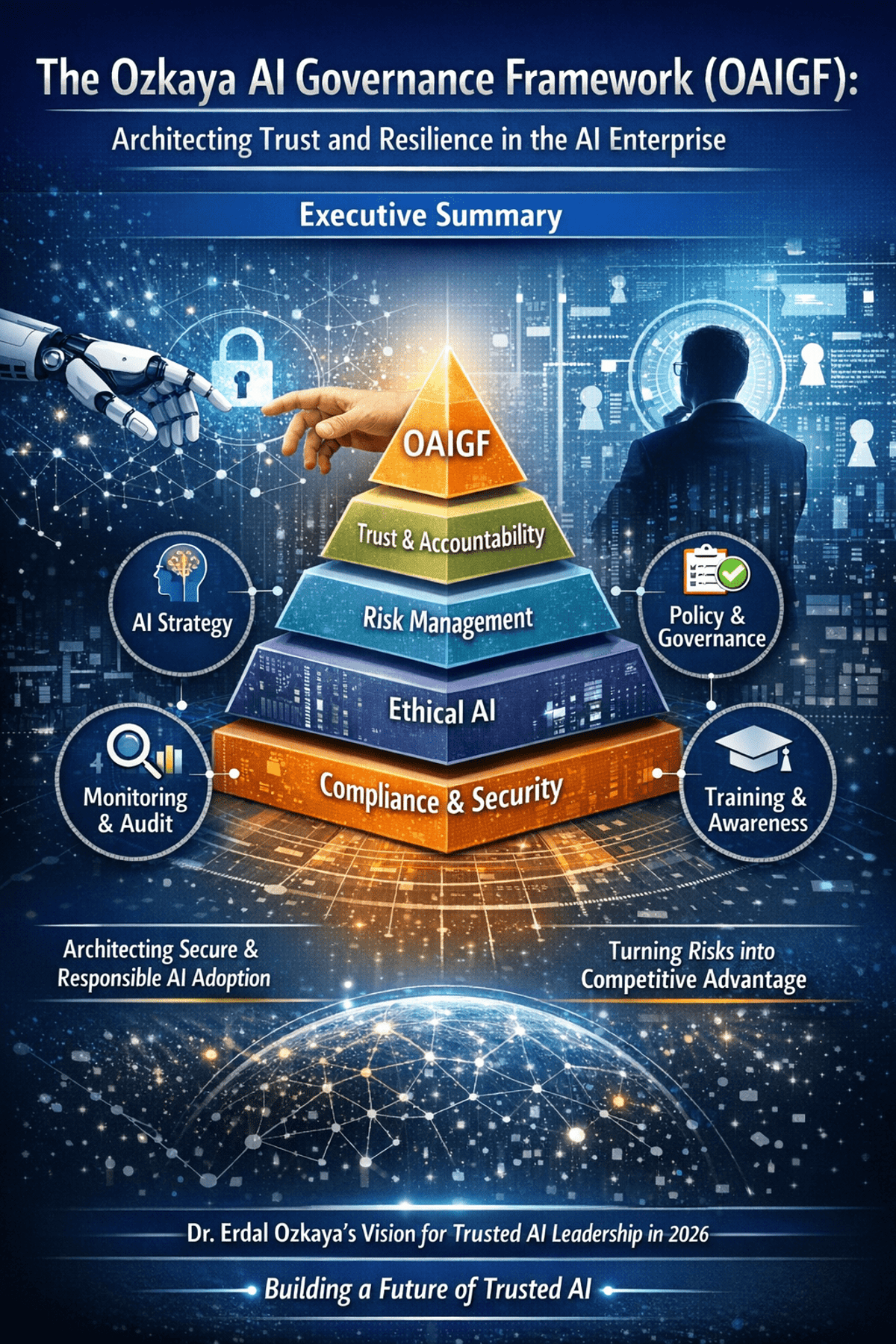

The Ozkaya AI Governance Framework (OAIGF): Architecting Trust and Resilience in the AI Enterprise

The Ozkaya AI Governance Framework (OAIGF) is a practitioner‑driven methodology that equips CISOs with a comprehensive blueprint for secure, ethical, and compliant AI deployment at enterprise scale. Building on standards such as NIST AI RMF and ISO/IEC 42001, the framework defines five immutable principles and seven operational pillars covering risk management, secure development, data and model governance, regulatory compliance, human‑AI teaming, and continuous monitoring. By embedding security‑by‑design, transparency, accountability, resilience, and ethical alignment into every AI lifecycle stage, OAIGF turns potential vulnerabilities into a strategic differentiator. Its actionable mandates, such as AI risk registers and dedicated AISOC functions, enable organizations to proactively defend against adversarial attacks while meeting evolving AI regulations.

By Erdal Ozkaya’s Cybersecurity Blog

News•Feb 27, 2026

Beyond the CLI: 5 Governance Questions Every CISO Must Ask Before Deploying Claude Code

Anthropic’s Claude Code introduces a CLI‑based AI agent that can navigate repositories, draft patches, and run tests, turning code remediation into a near‑instant process. While the speed gains are compelling, the tool also grants autonomous execution rights that blur traditional...

By Erdal Ozkaya’s Cybersecurity Blog

News•Feb 19, 2026

Auto Draft

Veteran CISOs are urged to abandon technical dashboards and become business risk leaders who speak the board’s language. By translating security concepts into revenue‑impact terms, aligning initiatives with corporate growth plans, and quantifying cyber risk in monetary values, they secure...

By Erdal Ozkaya’s Cybersecurity Blog

News•Feb 2, 2026

AI Didn’t Break Cybersecurity

The author argues that AI did not break cybersecurity; longstanding governance failures did. AI merely amplified existing shadow‑IT practices and unclear risk ownership, exposing gaps that boards and CISOs have ignored. The piece calls for a shift from treating security...

By Erdal Ozkaya’s Cybersecurity Blog

News•Jan 28, 2026

Bridging Compliance and Cybersecurity in Financial Reporting in 2026

The SEC is drafting rules that will require public companies to disclose their cybersecurity controls as part of regular financial reporting. This links cyber risk directly to compliance, forcing firms to treat security as a core reporting element. The article...

By Erdal Ozkaya’s Cybersecurity Blog

News•Jan 22, 2026

Governing Cybersecurity in the AI Era - PwC Workshop 2026

PwC‑affiliated firm A.F. Ferguson & Co. hosted a one‑day masterclass titled “Governing Cybersecurity in the AI Era – Digital Trust, Risk & Resilience” on 22 January 2026 in Karachi. More than 100 senior technology and business leaders, including CISOs, CIOs and CFOs,...

By Erdal Ozkaya’s Cybersecurity Blog

News•Dec 29, 2025

The Definitive 2025 Cyber Rewind & 2026 Roadmap

At SECON’s 2025 and 2026 conferences, the author highlighted a seismic shift in cyber risk, moving from classic phishing to automated, credential‑based attacks and AI‑driven threats. Data shows MFA bypass rates soaring to 45%, ransomware focusing on data theft, and...

By Erdal Ozkaya’s Cybersecurity Blog

News•Dec 17, 2025

Securing the Road Ahead: The Intersection of Cybersecurity and Intelligent Transportation

The blog highlights the growing convergence of cybersecurity and intelligent transportation, emphasizing that autonomous vehicles and connected infrastructure are becoming "data centers on wheels." It outlines three core risk areas (V2X communication vulnerabilities, AI‑driven sensor attacks, and infrastructure resilience) and presents strategic...

By Erdal Ozkaya’s Cybersecurity Blog