Robohub

Publication

Non-profit platform sharing robotics news and expert perspectives from research, startups, industry, and education

Recent Posts

News•Feb 26, 2026

I Developed an App that Uses Drone Footage to Track Plastic Litter on Beaches

University of Limerick researchers have created a drone‑based system that uses computer‑vision AI to locate plastic litter on beaches and feeds the data into a free mobile app. The platform can identify objects as small as 1 cm from an altitude of 30 m and provides precise GPS coordinates for volunteers, turning clean‑ups into a targeted, gamified activity. Pilot trials with Irish community groups have detected an average of 30 pieces per ten‑minute flight and revealed hotspots with up to ten times as much litter as nearby stretches. The technology, now part of the EU‑funded BluePoint project, has been deployed on more than 30 drones across Europe, engaging over 2,000 participants.

By Robohub

News•Feb 25, 2026

Translating Music Into Light and Motion with Robots

University of Waterloo researchers unveiled a swarm of soccer‑ball‑sized robots that paint with coloured light trails in response to musical cues such as tempo and chord progressions. The system captures the robots' movements on a floor‑mounted camera, creating a visual...

By Robohub

News•Feb 20, 2026

Robot Talk Episode 145 – Robotics and Automation in Manufacturing, with Agata Suwala

Technology manager Agata Suwala at the Manufacturing Technology Centre (MTC) is steering advanced robotics projects that modernise aerospace production lines. Her team integrates automation to replace labour‑intensive steps, cutting cycle times and error rates. The latest initiative targets a circular‑economy...

By Robohub

News•Feb 17, 2026

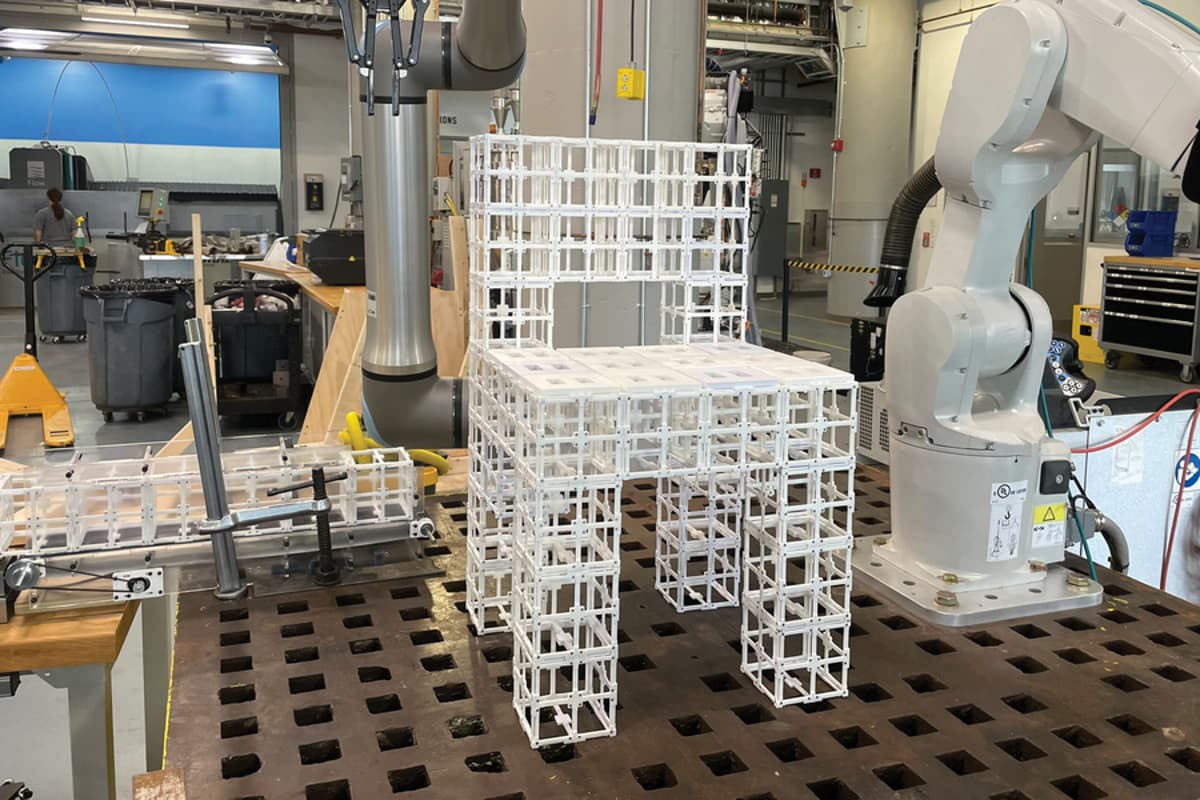

“Robot, Make Me a Chair”

MIT researchers unveiled an AI‑driven robotic assembly platform that turns natural‑language prompts into physical objects. A generative AI creates a 3‑D mesh, while a vision‑language model determines component placement, enabling a robot to assemble furniture from reusable parts. The system...

By Robohub

News•Feb 13, 2026

Robot Talk Episode 144 – Robot Trust in Humans, with Samuele Vinanzi

In a recent Robot Talk episode, senior lecturer Samuele Vinanzi discussed how robots can evaluate human trustworthiness using behavioral cues. His work in cognitive robotics merges AI, psychology, and cognitive science to give machines social awareness. Vinanzi’s research emphasizes emotional...

By Robohub

News•Feb 6, 2026

Robot Talk Episode 143 – Robots for Children, with Elmira Yadollahi

Robot Talk Episode 143 featured Elmira Yadollahi, an assistant professor at Lancaster University, discussing how children interact with robots. Yadollahi’s research focuses on explainability, multimodal perception, and managing expectations to build trust and AI literacy among young users. She has...

By Robohub

News•Jan 30, 2026

Robot Talk Episode 142 – Collaborative Robot Arms, with Mark Gray

Universal Robots' Mark Gray discussed the company's lightweight collaborative robot arms on Robot Talk Episode 142. The cobots, weighing under 20 kg, are designed for safe, direct interaction with human workers and have been deployed in leading UK research centers such...

By Robohub

News•Jan 23, 2026

Robot Talk Episode 141 – Our Relationship with Robot Swarms, with Razanne Abu-Aisheh

Claire interviews Razanne Abu‑Aisheh, a senior researcher at the University of Bristol, about how people perceive and interact with robot swarms. Abu‑Aisheh explains that collective robot behaviours heavily influence human attitudes, and she advocates for community‑centred, inclusive design to shape...

By Robohub

News•Jan 23, 2026

Vine-Inspired Robotic Gripper Gently Lifts Heavy and Fragile Objects

Engineers at MIT and Stanford have created a vine‑inspired robotic gripper that inflates thin pneumatic tubes to grow, wrap around, and lift objects. The system can transition from an open‑loop growth phase to a closed‑loop sling, enabling it to retrieve...

By Robohub

News•Jan 16, 2026

Robot Talk Episode 140 – Robot Balance and Agility, with Amir Patel

In Robot Talk episode 140, UCL Associate Professor Amir Patel discusses designing robots that emulate the cheetah’s remarkable speed and agility. He explains how sensor fusion, computer vision, mechanical modelling, and optimal control are combined to decode high‑speed predator locomotion and...

By Robohub

News•Jan 9, 2026

Robot Talk Episode 139 – Advanced Robot Hearing, with Christine Evers

Claire interviews Associate Professor Christine Evers of the University of Southampton about her work advancing robot hearing. Evers is embedding insights from human auditory processing into deep‑learning audio models, shifting away from massive internet‑scale networks. Her bio‑inspired approach emphasizes compute...

By Robohub

News•Jan 7, 2026

Meet the AI-Powered Robotic Dog Ready to Help with Emergency Response

Texas A&M engineering students have built an AI‑powered robotic dog that combines a multimodal large language model with visual memory to navigate chaotic environments. The prototype processes voice commands, interprets camera data, and plans paths while recalling previously traversed routes,...

By Robohub

News•Dec 31, 2025

MIT Engineers Design an Aerial Microrobot that Can Fly as Fast as a Bumblebee

MIT engineers have created an aerial microrobot that matches bumblebee‑level speed and agility. By pairing a model‑predictive controller with a deep‑learning policy, the robot achieved a 447% boost in speed and a 255% rise in acceleration. The AI‑driven system allowed...

By Robohub

News•Dec 24, 2025

The Science of Human Touch – and Why It’s so Hard to Replicate in Robots

Roboticists are confronting the complexity of human touch as they develop soft, sensor‑filled skins that can perceive pressure, vibration, stretch and texture. Researchers at Oxford highlight that touch is an active, distributed sense, with mechanoreceptors and embodied intelligence similar to...

By Robohub