Deep Learning Achieves Superior Quantum Error Mitigation for up to Five Qubits

January 21, 2026

Why It Matters

By correcting noisy outputs in software, the approach improves the accuracy obtainable from near-term quantum hardware and lowers the overhead of error mitigation, which could accelerate applications in chemistry, materials science and other data-intensive fields.

Key Takeaways

- Deep-learning models were trained on 246k five-qubit circuits.

- Attention-based models outperformed SPAM correction, Repolarizer and thresholding.

- The noisy probability distribution is the most critical input.

- Transfer learning succeeded between IBM Algiers and IBM Hanoi.

- Transfer fails across different circuit classes.

Pulse Analysis

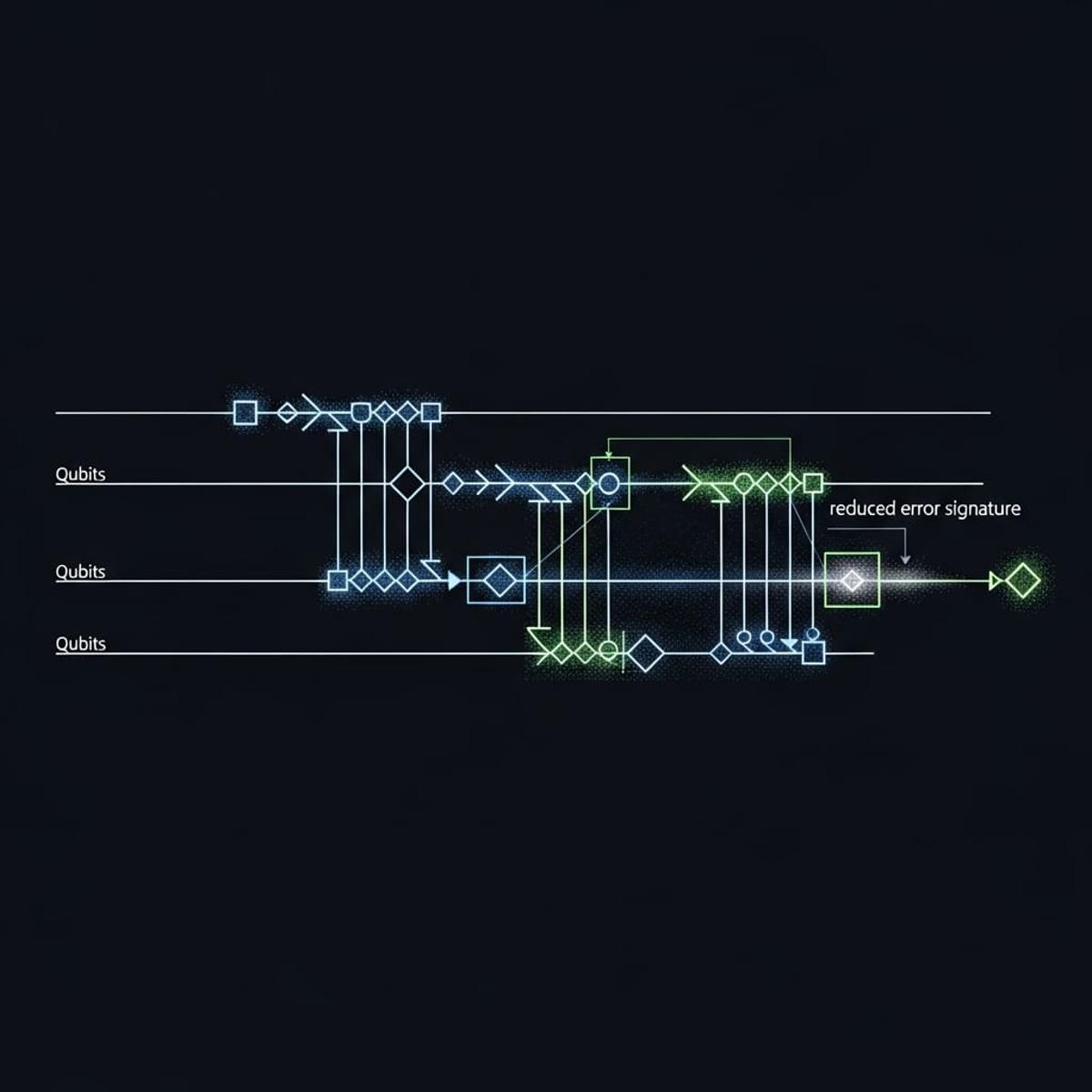

Quantum error mitigation remains a bottleneck for extracting useful results from noisy intermediate-scale quantum (NISQ) devices. Conventional methods rely on hardware calibration or post-processing heuristics that struggle with drift and device-specific noise patterns. Framing mitigation as a supervised learning problem lets deep-learning architectures, particularly sequence-to-sequence and transformer-style attention models, learn complex mappings from noisy output distributions to corrected probabilities, in effect learning the noise behavior of superconducting transmon devices from data.
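To make the supervised framing concrete, here is a minimal sketch in Python with NumPy. It is not the study's architecture: a plain least-squares linear map stands in for the attention models, the data are synthetic, and the noise model (independent readout flips, mixing with the uniform distribution, finite shots) and every name and parameter value below are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
N_QUBITS = 3              # assumption: a small toy register, not the study's five qubits
DIM = 2 ** N_QUBITS


def readout_matrix(p01, p10, n_qubits):
    """Column-stochastic confusion matrix for independent per-qubit readout flips."""
    single = np.array([[1 - p01, p10],
                       [p01, 1 - p10]])
    full = np.array([[1.0]])
    for _ in range(n_qubits):
        full = np.kron(full, single)
    return full


def simulate_noisy(ideal, shots, depol=0.2):
    """Toy noise (assumed): readout flips, mixing with uniform, finite-shot sampling."""
    a = readout_matrix(0.03, 0.05, N_QUBITS)
    exact = (1 - depol) * ideal @ a.T + depol / DIM
    exact = exact / exact.sum(axis=1, keepdims=True)
    counts = np.array([rng.multinomial(shots, q) for q in exact])
    return counts / shots


def fit_linear_mitigator(noisy, ideal):
    """Supervised step: least-squares map from noisy rows to ideal rows."""
    weights, *_ = np.linalg.lstsq(noisy, ideal, rcond=None)
    return weights


def mitigate(noisy, weights):
    """Apply the learned map, then project back onto valid distributions."""
    est = np.clip(noisy @ weights, 0.0, None)
    return est / est.sum(axis=1, keepdims=True)


def tvd(p, q):
    """Total variation distance between matching rows of p and q."""
    return 0.5 * np.abs(p - q).sum(axis=1)


# Synthetic (ideal, noisy) training pairs and a held-out test set.
train_ideal = rng.dirichlet(np.full(DIM, 0.5), size=2000)
test_ideal = rng.dirichlet(np.full(DIM, 0.5), size=200)
train_noisy = simulate_noisy(train_ideal, shots=4000)
test_noisy = simulate_noisy(test_ideal, shots=4000)

weights = fit_linear_mitigator(train_noisy, train_ideal)
test_mitigated = mitigate(test_noisy, weights)

tvd_noisy = tvd(test_noisy, test_ideal).mean()
tvd_mitigated = tvd(test_mitigated, test_ideal).mean()
print(f"mean TVD before: {tvd_noisy:.4f}, after: {tvd_mitigated:.4f}")
```

A linear map can only undo noise that acts linearly on the output distribution. Real hardware noise depends on the circuit, which is presumably part of the case for richer models that also see circuit encodings and device parameters.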

The study drew on a corpus of 246,000 five-qubit circuits, spanning random-gate and Pauli-gadget families, collected over a period of months via the Quantinuum Nexus platform. Models ingested circuit encodings, noisy probability vectors, and real-time device parameters, with ablation tests indicating that the noisy distribution is the most critical of these inputs. Attention-based models were the most resilient to dataset shifts and achieved higher fidelity than the baseline SPAM correction, Repolarizer and thresholding techniques. Crucially, the same pretrained network transferred between the IBM Algiers and IBM Hanoi processors with minimal fine-tuning, provided that up-to-date hardware characterization data were supplied. Transfer across different circuit classes, by contrast, did not succeed.
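For readers unfamiliar with the baselines, the sketch below shows common textbook forms of two of them. These are assumptions about what "SPAM correction" and "thresholding" mean here, not the study's exact definitions: SPAM correction is taken to be inversion of a measured readout confusion matrix, and thresholding to be zeroing outcomes below a cutoff and renormalizing. Repolarizer is omitted because the article does not describe it. All function names and numeric values are hypothetical.

```python
import numpy as np


def spam_correct(noisy, confusion):
    """Assumed baseline: invert the readout confusion matrix, then renormalize."""
    est = np.linalg.solve(confusion, noisy)
    est = np.clip(est, 0.0, None)   # inversion can yield small negative entries
    return est / est.sum()


def threshold(noisy, cutoff):
    """Assumed baseline: drop outcomes below `cutoff`, then renormalize."""
    kept = np.where(noisy >= cutoff, noisy, 0.0)
    if kept.sum() == 0.0:
        raise ValueError("cutoff removed every outcome")
    return kept / kept.sum()


# Two-qubit toy example with illustrative per-qubit readout flip rates.
single = np.array([[0.98, 0.04],
                   [0.02, 0.96]])
confusion = np.kron(single, single)

ideal = np.array([0.5, 0.0, 0.0, 0.5])   # Bell-state measurement statistics
noisy = confusion @ ideal                # readout noise only, no shot noise

spam = spam_correct(noisy, confusion)
thresholded = threshold(noisy, cutoff=0.1)
print("noisy:      ", np.round(noisy, 4))
print("SPAM:       ", np.round(spam, 4))
print("thresholded:", np.round(thresholded, 4))
```

In this toy case the confusion matrix is known exactly and there is no gate noise, so inversion recovers the ideal distribution. Thresholding removes the spurious outcomes but leaves the surviving probabilities slightly skewed. On hardware neither condition holds, which is the gap learned mitigators aim to close.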

For industry, these findings suggest a scalable, software-centric route to improving quantum computation without extensive hardware redesign. Companies targeting quantum-accelerated drug discovery or materials modeling could deploy pre-trained mitigation models across heterogeneous QPU fleets, reducing calibration cycles and improving throughput. Future work must address expectation-value mitigation and extend the approach to larger qubit counts, but the current results already point toward AI-augmented quantum error mitigation as a component of commercial quantum workflows.
