Exponentially Improved Multiphoton Interference Benchmarking Advances Quantum Technology Scalability

January 20, 2026

Key Takeaways

- QFT protocol achieves O(1) sample complexity for prime photon numbers

- Sub‑polynomial scaling for non‑prime photon numbers

- Validated on Quandela’s reconfigurable photonic processor

- Outperforms cyclic interferometer methods in runtime and precision

- No photon‑number‑resolving detectors required

Summary

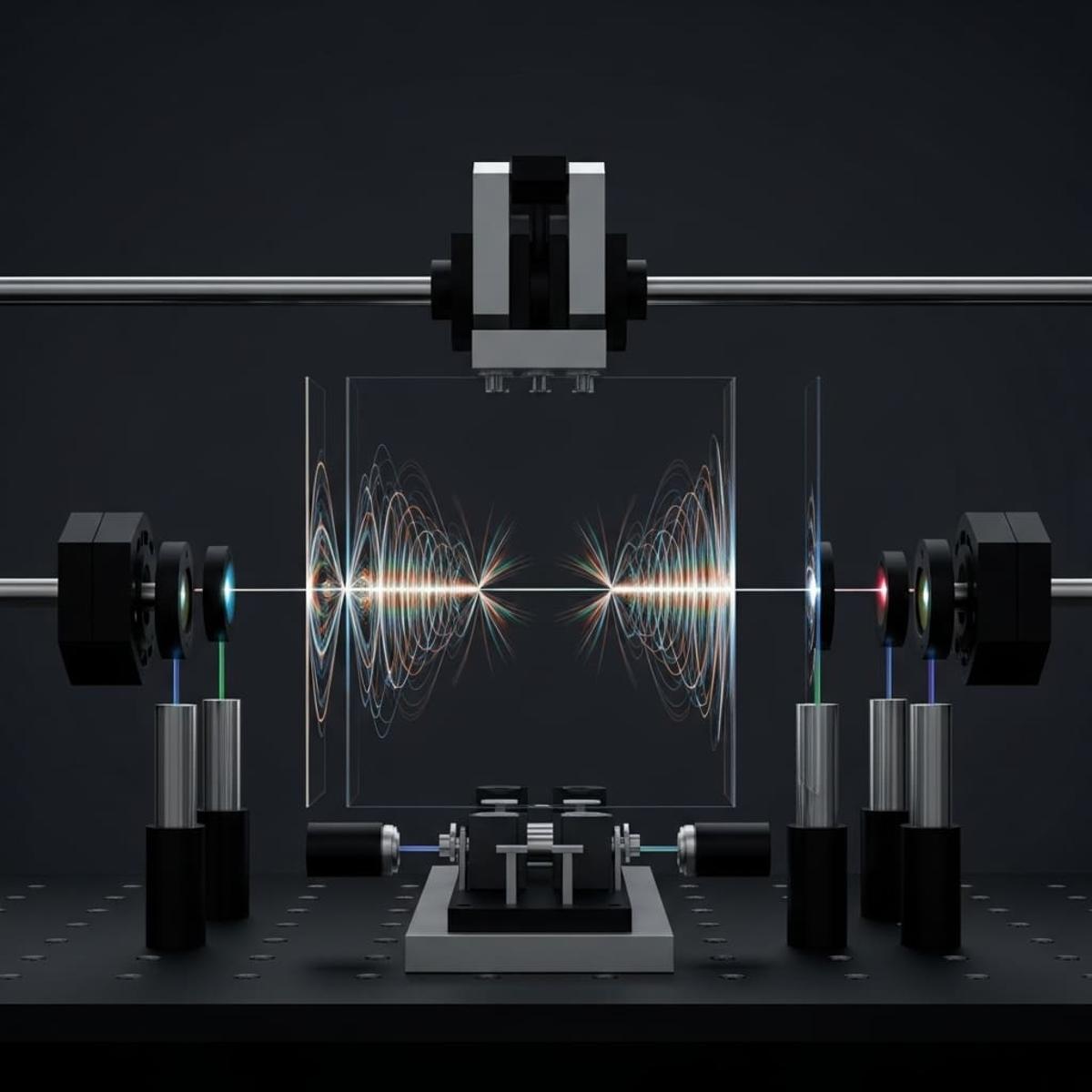

Researchers led by Sanz, Annoni, and Wein introduced a quantum Fourier‑transform (QFT) interferometer protocol that dramatically reduces the sample complexity of benchmarking genuine n‑photon indistinguishability. The method attains constant O(1) sample complexity when the photon number n is prime and sub‑polynomial scaling for other photon numbers, an exponential improvement over prior approaches that scale as O(4ⁿ). Experimental tests on Quandela’s reconfigurable photonic processor confirmed faster runtimes and higher precision than cyclic interferometer methods, without requiring photon‑number‑resolving detectors. The result is described as the first scalable technique for measuring multi‑photon indistinguishability, opening new pathways for photonic quantum technologies.

Pulse Analysis

Multiphoton interference lies at the heart of photonic quantum computing, yet assessing genuine n‑photon indistinguishability has long been hampered by exponential sample requirements. Traditional benchmarking methods demand O(4ⁿ) measurements, quickly becoming infeasible as systems scale beyond a handful of photons. This limitation has constrained experimental progress and slowed the transition from laboratory prototypes to practical quantum processors, making efficient verification techniques a critical research priority.
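To give a feel for the growth described above, the short sketch below tabulates 4ⁿ for a few photon numbers. It is only an illustration of the asymptotic scaling: the function name `baseline_scaling` is hypothetical, and the constant factors hidden by the O(·) notation are not given in the article, so the numbers are not actual measurement counts.

```python
def baseline_scaling(n: int) -> int:
    """Return 4**n, the growth factor of the O(4^n) baseline (constants omitted)."""
    return 4 ** n


if __name__ == "__main__":
    # Print how quickly the exponential term grows with the photon number n.
    for n in (2, 4, 6, 8, 10):
        print(f"n = {n:2d}  ->  4^n = {baseline_scaling(n):,}")
```

By n = 10 the exponential term already exceeds one million, whereas a constant‑complexity protocol has no such n‑dependent factor.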

The newly proposed quantum Fourier‑transform (QFT) interferometer protocol leverages the suppression laws inherent to the QFT to sidestep the exponential blow‑up. By post‑selecting output configurations that are uniformly populated when photons are partially distinguishable, the method extracts the indistinguishability metric with constant O(1) sample complexity when the photon number is prime and with sub‑polynomial growth otherwise. Theoretical analysis proves optimality in numerous scenarios, giving the protocol a rigorous foundation, and it outperforms the previously dominant cyclic integrated interferometer approach.
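The suppression law that the protocol builds on can be checked numerically for small systems. The sketch below is not the authors' benchmarking procedure; it only illustrates the well‑known Fourier suppression law under idealized assumptions (lossless n‑mode Fourier interferometer, exactly one photon per input mode, photons either perfectly indistinguishable or fully distinguishable). All function names are hypothetical. Output probabilities are computed from matrix permanents by brute force, which is practical only for a handful of photons.

```python
import cmath
import math
from itertools import combinations_with_replacement, permutations


def fourier_matrix(n):
    """Unitary n-mode Fourier matrix, U[j][k] = exp(2*pi*i*j*k/n) / sqrt(n)."""
    return [[cmath.exp(2j * cmath.pi * j * k / n) / math.sqrt(n)
             for k in range(n)] for j in range(n)]


def permanent(m):
    """Brute-force permanent (sum over all permutations); fine for small n."""
    total = 0
    for sigma in permutations(range(len(m))):
        term = 1
        for row, col in enumerate(sigma):
            term *= m[row][col]
        total += term
    return total


def output_probabilities(n):
    """Map each output configuration to (P_indistinguishable, P_distinguishable).

    A configuration is a sorted tuple listing the output mode of each photon.
    Assumes one photon in every input mode of the Fourier interferometer.
    """
    u = fourier_matrix(n)
    results = {}
    for modes in combinations_with_replacement(range(n), n):
        # One row of U per detected photon; columns are the n occupied inputs.
        sub = [u[d] for d in modes]
        # Product of factorials of the output occupation numbers.
        mult = 1
        for d in set(modes):
            mult *= math.factorial(modes.count(d))
        p_indist = abs(permanent(sub)) ** 2 / mult
        p_dist = permanent([[abs(x) ** 2 for x in row] for row in sub]) / mult
        results[modes] = (p_indist, p_dist)
    return results


if __name__ == "__main__":
    n = 3
    for modes, (p_i, p_d) in output_probabilities(n).items():
        # Suppression law: outputs whose mode indices do not sum to 0 mod n
        # vanish for perfectly indistinguishable photons.
        tag = "allowed" if sum(modes) % n == 0 else "suppressed"
        print(f"{modes}  {tag:10s}  indist={p_i:.4f}  dist={p_d:.4f}")
```

In this idealized model, configurations whose mode indices do not sum to a multiple of n have zero probability for indistinguishable photons but nonzero probability for distinguishable ones, so counts in those configurations carry information about distinguishability.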

Experimental validation on Quandela’s reconfigurable photonic quantum processor demonstrates the protocol’s practical advantages: faster runtimes, higher precision, and the ability to operate without photon‑number‑resolving detectors. These gains directly translate into more scalable hardware testing pipelines, enabling developers to certify larger photonic circuits with reduced overhead. As the quantum industry pushes toward fault‑tolerant, high‑dimensional photonic architectures, such efficient benchmarking will be indispensable for both academic research and commercial deployment, accelerating the roadmap toward viable quantum advantage.
