Light-Based System Recalls Past Data Without Training

February 25, 2026

Why It Matters

The architecture lowers experimental overhead for quantum reservoir computing, making it a candidate hardware accelerator for high‑frequency forecasting and pattern‑recognition workloads in finance, communications, and scientific modeling.

Key Takeaways

- Programmable linear-optical reservoir uses measurement feedback, no weight training

- Achieves NRMSE 0.15–0.21 on standard time-series benchmarks

- Memory capacity 4.2 bits, peaks near stability edge

- Scales with limited phase updates, compatible with silicon photonics

- Photon loss reduces memory by 12% and accuracy by 7%

Pulse Analysis

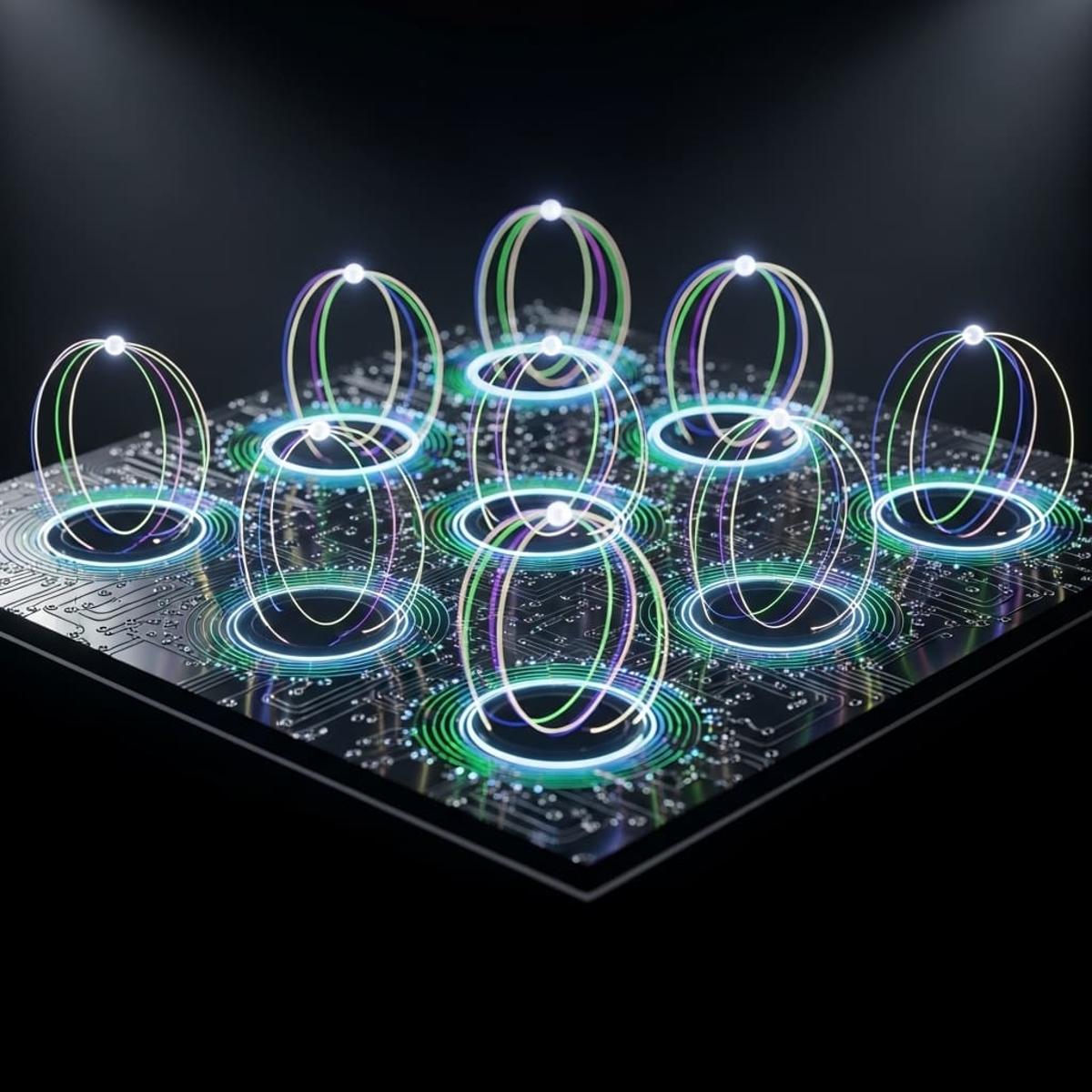

Quantum reservoir computing (QRC) has emerged as a bridge between classical recurrent networks and quantum hardware, promising high‑dimensional nonlinear processing with minimal training. The new linear‑optical implementation embeds a measurement‑feedback loop directly into a reconfigurable interferometer mesh, so the system recalls past inputs through photon coincidence patterns rather than through explicit weight updates. This design sidesteps the demanding qubit control requirements of gate‑based quantum computers, positioning photonic platforms as candidates for near‑term quantum‑enhanced machine learning.

Performance metrics underscore the practical relevance of the approach. On standard time‑series benchmarks, the reservoir recorded normalized root‑mean‑square error (NRMSE) scores of 0.18 on the Mackey‑Glass series and 0.21 on NARMA‑3, while a non‑integrable Ising chain simulation yielded a lower error of 0.15. A linear memory capacity of 4.2 bits, peaking near the edge‑of‑chaos transition, shows that the system can retain information over multiple time steps, an attribute that matters for tasks such as financial market prediction and speech recognition. With realistic photon loss (0.1 dB per component) and limited measurement samples, degradation remained modest: photon loss reduced memory capacity by 12% and accuracy by 7%, which points to robustness against experimental imperfections.
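For readers who want to relate these figures to concrete quantities, here is a minimal Python sketch of the two pieces of arithmetic involved. It makes two assumptions: that NRMSE is normalized by the standard deviation of the target series (a common convention, though the article does not say which one the authors used), and that per-component losses simply add up in decibels along a photon's path. The function names and the component count in the usage note are hypothetical, chosen only for illustration.

```python
import math


def nrmse(target, prediction):
    """RMSE divided by the standard deviation of the target.

    Assumption: this normalization is one common convention; the
    article does not specify which one was used.
    """
    if len(target) != len(prediction) or not target:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(target)
    mean_t = sum(target) / n
    mse = sum((t - p) ** 2 for t, p in zip(target, prediction)) / n
    var_t = sum((t - mean_t) ** 2 for t in target) / n
    if var_t == 0:
        raise ValueError("target series has zero variance")
    return math.sqrt(mse / var_t)


def transmission_from_db(loss_db):
    """Convert a power loss in dB to the fraction of power transmitted."""
    return 10 ** (-loss_db / 10)


def cumulative_transmission(loss_db_per_component, n_components):
    """Transmission after n identical lossy components in series.

    Assumption: losses add in dB, i.e. transmissions multiply.
    """
    return transmission_from_db(loss_db_per_component * n_components)
```

Under this convention, a predictor that always outputs the mean of the target scores an NRMSE of 1, so values of 0.15 to 0.21 indicate errors well below that baseline. On the loss side, 0.1 dB corresponds to roughly 97.7% transmission per component, and a hypothetical path through 10 such components would transmit about 79% of the light.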

Scalability and integration are the next frontiers. By restricting programmable updates to a structured subset of Mach‑Zehnder interferometers, the architecture reduces control complexity and aligns with existing silicon‑photonic foundry processes, eliminating the need for individual qubit calibration. This hardware‑friendly model opens pathways for large‑scale deployment in data‑center accelerators or edge devices where low latency and energy efficiency are paramount. Future research will likely focus on extending sequence length handling, optimizing loss mitigation, and coupling the reservoir with classical machine‑learning layers to create hybrid quantum‑classical pipelines that can tackle increasingly sophisticated temporal inference tasks.
