Mixture of Experts Vision Transformer Achieves High-Fidelity Surface Code Decoding

January 23, 2026


Why It Matters

Higher‑fidelity decoding reduces the overhead for error‑corrected qubits, accelerating the path to practical quantum processors. The method demonstrates that vision‑transformer and MoE techniques can overcome longstanding scalability bottlenecks in quantum error correction.

Key Takeaways

- QuantumSMoE uses a vision transformer with a mixture-of-experts (MoE) layer for decoding

- Plus-shaped embeddings capture the toric code's geometry

- Outperforms MWPM, BP-LSD, and QECCT in logical error rate

- A SoftMoE layer keeps expert routing stable

- Scalable real-time decoding advances fault-tolerant quantum computers

Pulse Analysis

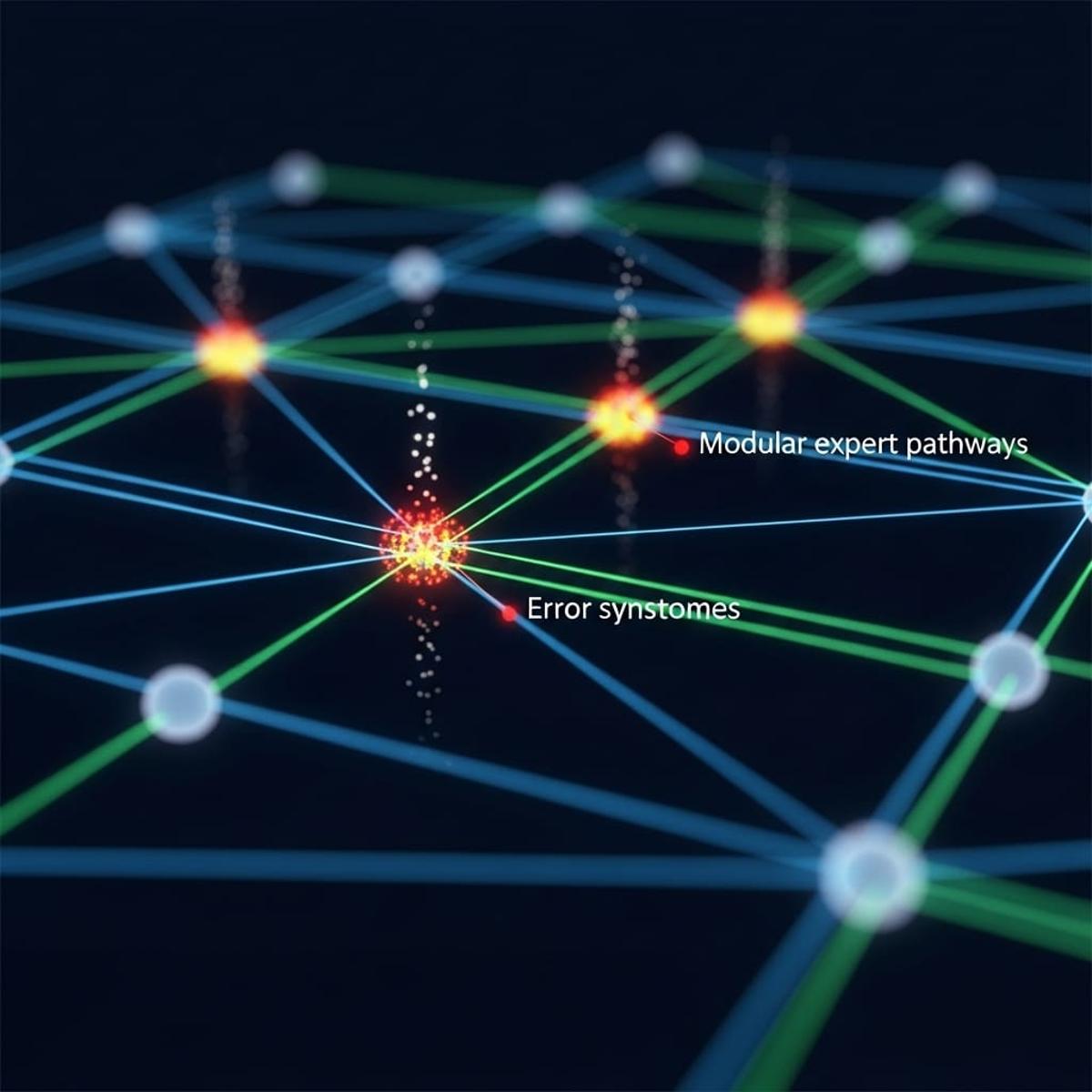

Quantum error correction remains the linchpin for reliable quantum computation, especially when using topological surface codes such as the toric code. Traditional decoders either rely on heavyweight classical algorithms that struggle with scaling or on machine‑learning models that ignore the code’s spatial structure. By borrowing the spatial inductive biases of vision transformers, QuantumSMoE bridges this gap, embedding syndrome data in plus‑shaped patches that respect lattice connectivity and applying adaptive masking to focus on relevant neighborhoods.
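To make the plus-shaped patch idea concrete, here is a minimal NumPy sketch. It assumes the syndrome is stored as an L x L array on a torus and that a patch is a site plus its four nearest neighbors. The function name `plus_patches` and the column order are hypothetical choices for illustration; the exact embedding and masking used by QuantumSMoE may differ.

```python
import numpy as np

def plus_patches(syndrome: np.ndarray) -> np.ndarray:
    """Return one 5-element plus-shaped patch per lattice site.

    Column order (an assumption for this sketch): center, up, down, left, right.
    Neighbors wrap around the edges because the toric code has periodic
    boundaries.
    """
    center = syndrome
    up = np.roll(syndrome, 1, axis=0)      # value from row i-1 (wraps)
    down = np.roll(syndrome, -1, axis=0)   # value from row i+1 (wraps)
    left = np.roll(syndrome, 1, axis=1)    # value from column j-1 (wraps)
    right = np.roll(syndrome, -1, axis=1)  # value from column j+1 (wraps)
    patches = np.stack([center, up, down, left, right], axis=-1)
    return patches.reshape(-1, 5)          # (L*L, 5): one token per site

# Example: a random binary syndrome on a 4 x 4 lattice.
rng = np.random.default_rng(0)
example_syndrome = rng.integers(0, 2, size=(4, 4))
example_tokens = plus_patches(example_syndrome)  # shape (16, 5)
```

Each row can then be projected to the model dimension and treated as one transformer token, so the token itself already encodes local lattice connectivity.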

The core innovation lies in the mixture‑of‑experts (MoE) architecture, specifically the SoftMoE module, which routes syndrome patches to specialized experts without incurring the routing instability typical of conventional MoE systems. An auxiliary slot‑orthogonality loss further refines token‑to‑expert assignments, ensuring that similar error patterns are processed by the same expert group. This design delivers high representational capacity while keeping inference latency low, a prerequisite for real‑time decoding in quantum processors where syndrome data must be processed within microseconds.
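The soft routing step can be sketched in a few lines of NumPy. This follows the general Soft MoE formulation (each slot is a softmax-weighted mix of all tokens, and each token output is a softmax-weighted mix of all slot outputs), not QuantumSMoE's actual code. The names `soft_moe`, `phi`, and `expert_weights` are hypothetical, each expert is reduced to a single linear map for brevity, and the auxiliary slot-orthogonality loss is left out because its form is not described here.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def soft_moe(tokens, phi, expert_weights):
    """Soft MoE forward pass (sketch).

    tokens:         (n, d) input tokens
    phi:            (d, e * s) slot parameters, grouped expert by expert
    expert_weights: (e, d, d) one linear map per expert (stand-in for an MLP)
    """
    n, d = tokens.shape
    e = expert_weights.shape[0]
    s = phi.shape[1] // e
    logits = tokens @ phi                  # (n, e*s) token-slot affinities
    dispatch = softmax(logits, axis=0)     # per slot: weights over tokens
    combine = softmax(logits, axis=1)      # per token: weights over slots
    slots = (dispatch.T @ tokens).reshape(e, s, d)
    outs = np.einsum("esd,edf->esf", slots, expert_weights).reshape(e * s, d)
    return combine @ outs, dispatch, combine

# Example: 6 tokens of width 4, 3 experts with 2 slots each.
rng = np.random.default_rng(0)
y, dispatch, combine = soft_moe(
    rng.normal(size=(6, 4)),
    rng.normal(size=(4, 3 * 2)),
    rng.normal(size=(3, 4, 4)),
)
```

Because every weight comes from a softmax rather than a hard top-k choice, no token is dropped and no expert is starved, which is the usual explanation for the more stable routing.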

Benchmarks on the toric code under depolarizing noise show QuantumSMoE achieving lower logical error rates than established baselines: MWPM, MWPM-Corr, BP-LSD, and the QECCT decoder. A lower logical error rate means fewer physical qubits are needed to reach a given logical fidelity, which reduces hardware cost and error-correction overhead. As the field moves toward more complex codes and more realistic noise models, the vision-transformer-plus-MoE approach may offer a scalable route to fault-tolerant quantum computing.
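For readers unfamiliar with the benchmark setup, the sketch below shows how depolarizing noise is typically sampled and how a logical error rate is estimated from repeated shots. It is a generic Monte Carlo outline, not the paper's evaluation code. The function names are hypothetical, and the per-shot failure flags would come from whichever decoder is under test.

```python
import numpy as np

def sample_depolarizing(num_qubits, p, shots, rng):
    """Sample Pauli errors under depolarizing noise.

    Encoding (an assumption of this sketch): 0 = I, 1 = X, 2 = Y, 3 = Z.
    Each qubit suffers X, Y, or Z with probability p/3 each.
    """
    probs = [1.0 - p, p / 3, p / 3, p / 3]
    return rng.choice(4, size=(shots, num_qubits), p=probs)

def logical_error_rate(failures):
    """Return (estimate, binomial standard error) from per-shot failure flags."""
    failures = np.asarray(failures, dtype=float)
    rate = failures.mean()
    stderr = np.sqrt(rate * (1.0 - rate) / failures.size)
    return rate, stderr

# Example: a toric code on an L x L lattice has 2 * L * L physical qubits.
L = 4
rng = np.random.default_rng(0)
errors = sample_depolarizing(2 * L * L, p=0.05, shots=1000, rng=rng)
```

Comparing decoders then amounts to feeding the same sampled errors to each one and comparing the resulting rates together with their standard errors.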
