Topology-Aware Block Coordinate Descent Achieves Faster Qubit Frequency Calibration for Superconducting Quantum Processors

January 19, 2026

Key Takeaways

- BCD‑NNA matches the accuracy of BFS and DFS orderings at substantially lower runtime

- Per‑epoch complexity is linear in qubit count

- Robust against measurement noise and moderate non‑local crosstalk

- Block ordering is formulated as a Sequence‑Dependent TSP and solved with a nearest‑neighbor heuristic

- Enables scalable calibration for NISQ‑era processors

Summary

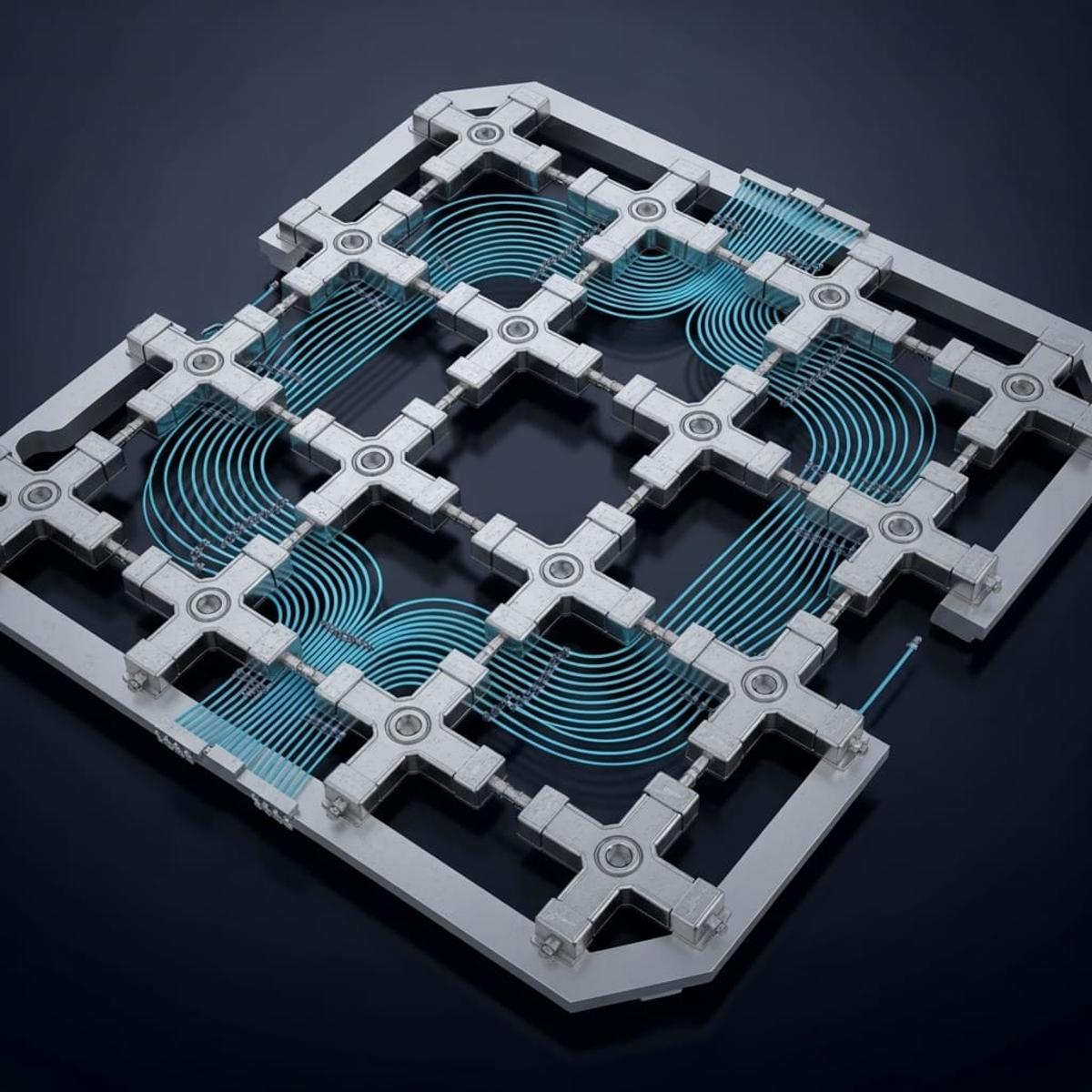

Researchers from Tsinghua University and the Beijing Academy of Quantum Information Sciences have shown that the popular Snake optimizer is mathematically equivalent to Block Coordinate Descent (BCD) for superconducting qubit frequency calibration. By casting the block ordering problem as a Sequence‑Dependent Traveling Salesman Problem and solving it with a nearest‑neighbor heuristic, they created a topology‑aware BCD‑NNA algorithm. The method achieves linear per‑epoch complexity with qubit count, dramatically reducing calibration runtime while preserving accuracy. Simulations demonstrate robustness to measurement noise and moderate non‑local crosstalk, offering a scalable workflow for NISQ‑era processors.

Pulse Analysis

Accurate qubit frequency calibration remains a bottleneck for superconducting quantum computers, where inter‑qubit crosstalk and hardware variability can degrade performance. Traditional approaches rely on exhaustive sweeps or graph‑based heuristics such as breadth‑first or depth‑first search, which scale poorly as qubit counts rise. The new study reframes calibration as an optimization problem, establishing a formal equivalence between the widely used Snake optimizer and Block Coordinate Descent (BCD). This theoretical bridge allows the quantum community to leverage decades of classical optimization research, providing a rigorous foundation for future algorithmic improvements.
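To make the block coordinate descent idea concrete, here is a minimal sketch on a toy problem. It is not the authors' objective or implementation: the cost function, the `min_gap` and `penalty` parameters, the candidate frequency list, and the function names are all assumptions chosen for illustration. Each step re-optimizes one block of qubit frequencies by exhaustive search while every other frequency stays fixed.

```python
from itertools import product


def cost(freqs, ideal, edges, min_gap=0.05, penalty=1.0):
    """Toy objective (an assumption, not the paper's): stay close to each
    qubit's ideal frequency and avoid near-collisions on coupled pairs."""
    detuning = sum((f - g) ** 2 for f, g in zip(freqs, ideal))
    collisions = sum(penalty for i, j in edges
                     if abs(freqs[i] - freqs[j]) < min_gap)
    return detuning + collisions


def bcd(freqs, ideal, edges, blocks, candidates, epochs=3):
    """Block coordinate descent: one epoch is a full pass over all blocks.

    For each block, try every combination of candidate frequencies while
    holding the remaining qubits fixed, and keep the best one found.
    """
    freqs = list(freqs)
    history = [cost(freqs, ideal, edges)]
    for _ in range(epochs):
        for block in blocks:
            best_vals = [freqs[q] for q in block]
            best = cost(freqs, ideal, edges)
            for vals in product(candidates, repeat=len(block)):
                trial = list(freqs)
                for q, v in zip(block, vals):
                    trial[q] = v
                c = cost(trial, ideal, edges)
                if c < best:  # strict improvement only, so cost never rises
                    best, best_vals = c, list(vals)
            for q, v in zip(block, best_vals):
                freqs[q] = v
            history.append(best)
    return freqs, history


# Four qubits on a chain, all starting at the same (colliding) frequency.
edges = [(0, 1), (1, 2), (2, 3)]
ideal = [5.0, 5.0, 5.0, 5.0]
final, history = bcd(ideal, ideal, edges,
                     blocks=[[0], [1], [2], [3]],
                     candidates=[4.9, 5.0, 5.1])
```

Because a block keeps its current values unless a strictly better combination exists, the recorded cost never increases from one block update to the next. The order in which `blocks` is traversed is left as an input here, and that order is the quantity the topology-aware strategy chooses.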

The core innovation lies in a topology‑aware block ordering strategy. By modeling the order of block evaluations as a Sequence‑Dependent Traveling Salesman Problem, the authors apply a nearest‑neighbor heuristic (BCD‑NNA) that respects the physical connectivity of the processor. This yields linear complexity per epoch (one full pass over all blocks), meaning the computational effort of each pass grows only in proportion to the number of qubits. Crucially, the algorithm maintains calibration fidelity, matching the accuracy of BFS and DFS heuristics while delivering substantially lower runtime. Simulations on multi‑qubit models indicate that the method is resilient to measurement noise and tolerates moderate non‑local crosstalk, two common sources of error in real‑world devices.
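A nearest-neighbor ordering over a processor's coupling graph can be sketched as follows. This is an illustration under stated assumptions, not the BCD‑NNA algorithm itself: the summary does not give the sequence-dependent cost used in the paper, so the sketch substitutes plain hop distance on the coupling graph, and the names `grid_adjacency`, `hop_distances`, and `nearest_neighbor_order` are hypothetical. It also recomputes distances at every step, so it makes no attempt to reproduce the reported linear per-epoch cost.

```python
from collections import deque


def grid_adjacency(rows, cols):
    """Coupling graph of a rows x cols grid with nearest-neighbor links."""
    adj = {}
    for r in range(rows):
        for c in range(cols):
            nbrs = []
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    nbrs.append((rr, cc))
            adj[(r, c)] = nbrs
    return adj


def hop_distances(adjacency, source):
    """Breadth-first hop counts from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in adjacency[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def nearest_neighbor_order(adjacency, start):
    """Greedy tour: always move to the closest unvisited node.

    Hop distance stands in for the paper's sequence-dependent cost
    (an assumption). Ties are broken by node label for determinism.
    """
    order = [start]
    unvisited = set(adjacency) - {start}
    while unvisited:
        dist = hop_distances(adjacency, order[-1])
        nxt = min(unvisited, key=lambda q: (dist.get(q, float("inf")), q))
        order.append(nxt)
        unvisited.remove(nxt)
    return order


order = nearest_neighbor_order(grid_adjacency(2, 3), start=(0, 0))
```

On a 2 x 3 grid starting at `(0, 0)`, this greedy rule visits the top row left to right and then the bottom row right to left, so consecutive blocks are always physically adjacent. The resulting list can be passed as the block order to a block coordinate descent loop.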

For industry and research labs, the implications are immediate. Reduced calibration time translates to higher system availability and lower operational costs, essential as quantum processors scale beyond a few dozen qubits. The scalability of BCD‑NNA positions it as a practical tool for NISQ‑era devices, where rapid re‑calibration is needed between experiments. Moreover, the formalization of the calibration objective opens pathways for adaptive crosstalk detection and parallel optimization, promising further gains as hardware architectures evolve. By marrying classical optimization theory with quantum hardware constraints, this work paves the way for more efficient, reliable quantum computing pipelines.
